The rapid advancement of large language models has driven progress in natural language processing, enabling applications ranging from chatbots to machine translation. However, these models often struggle to process long sequences efficiently, which is essential for many real-world tasks. As the input sequence grows, the attention mechanism becomes increasingly expensive, because standard self-attention scales quadratically with sequence length. Researchers have been exploring ways to address this challenge and make large language models more practical for various applications.
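To make the cost concrete, here is a minimal sketch of standard scaled dot-product attention in plain Python. It is not taken from the paper; the function name `exact_attention` is hypothetical and the inputs are assumed to be lists of equal-length row vectors. The point is only that every query is scored against every key, so the work grows with the square of the sequence length.

```python
import math


def exact_attention(Q, K, V):
    """Exact softmax attention over lists of row vectors.

    Computes one score per (query, key) pair, i.e. n * n scores for a
    sequence of length n. This is the quadratic cost that approximate
    methods try to avoid.
    """
    d = len(Q[0])
    out = []
    for q in Q:
        # Score this query against every key.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in K]
        m = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        # Weighted average of the value rows.
        out.append([
            sum(w * v[c] for w, v in zip(weights, V)) / total
            for c in range(len(V[0]))
        ])
    return out
```

Doubling the sequence length in this sketch quadruples the number of scores computed, which is why long contexts become a bottleneck.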

A research team recently presented a solution called “HyperAttention.” The algorithm aims to efficiently approximate attention mechanisms in large language models, particularly when dealing with long sequences. It simplifies existing algorithms and combines several techniques to identify the dominant entries in attention matrices, ultimately speeding up calculations.

HyperAttention’s approach to solving the efficiency problem in large language models involves several key elements. Let’s dig into the details:

- Spectral guarantees: HyperAttention aims for spectral guarantees to ensure the reliability of its approximations. By parameterizing its analysis in terms of the condition number, it reduces the need for certain assumptions that are typically made in this domain.

- SortLSH to identify dominant entries: HyperAttention uses a Hamming-sorted locality-sensitive hashing (LSH) technique, called sortLSH, to improve efficiency. This method allows the algorithm to identify the most significant entries in the attention matrix and rearrange them so that they lie along the diagonal, where they can be processed more efficiently.

- Efficient sampling techniques: HyperAttention uses sampling to approximate the diagonal normalization matrix of the attention computation (the row sums of the softmax) and the product of the attention matrix with the value matrix. This step allows large language models to process long sequences without significantly degrading performance.

- Versatility and flexibility: HyperAttention is designed to handle different use cases. As demonstrated in the paper, it can be applied either with a predefined mask or with a mask generated by the sortLSH algorithm.
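The sorting idea in the list above can be illustrated with a small sketch. This is a simplified illustration under stated assumptions, not the authors' implementation: the names `gray_rank`, `lsh_bucket`, and `sorted_block_attention` are hypothetical, the hash is a plain random-hyperplane sign LSH, and ordering buckets by Gray-code rank is an assumed way of keeping neighbouring buckets one bit apart in Hamming distance. The sketch covers only the block-diagonal part; it omits the sampling step and carries none of the spectral guarantees. It also assumes the number of queries equals the number of keys.

```python
import math
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def gray_rank(code):
    """Position of `code` in the Gray-code sequence.

    Consecutive ranks correspond to codes that differ in exactly one bit.
    """
    rank = code
    while code:
        code >>= 1
        rank ^= code
    return rank


def lsh_bucket(x, planes):
    """Hash x to its sign pattern against random hyperplanes (assumed LSH)."""
    code = 0
    for p in planes:
        code = (code << 1) | int(dot(x, p) >= 0)
    return gray_rank(code)


def sorted_block_attention(Q, K, V, block_size, num_planes=4, seed=0):
    """Attend only within equal-size blocks of bucket-sorted queries and keys."""
    rng = random.Random(seed)
    d = len(Q[0])
    planes = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(num_planes)]

    # Sort queries and keys by bucket so similar vectors become neighbours.
    q_order = sorted(range(len(Q)), key=lambda i: lsh_bucket(Q[i], planes))
    k_order = sorted(range(len(K)), key=lambda j: lsh_bucket(K[j], planes))

    out = [None] * len(Q)
    for start in range(0, len(Q), block_size):
        q_idx = q_order[start:start + block_size]
        k_idx = k_order[start:start + block_size]
        for i in q_idx:
            # Softmax restricted to the keys in the matching diagonal block.
            scores = [dot(Q[i], K[j]) / math.sqrt(d) for j in k_idx]
            m = max(scores)
            weights = [math.exp(s - m) for s in scores]
            total = sum(weights)
            out[i] = [
                sum(w * V[j][c] for w, j in zip(weights, k_idx)) / total
                for c in range(len(V[0]))
            ]
    return out
```

With `n` queries and blocks of size `b`, this computes roughly `n * b` scores instead of `n * n`. Setting `block_size` to the full sequence length recovers exact attention, since every query then sees every key.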

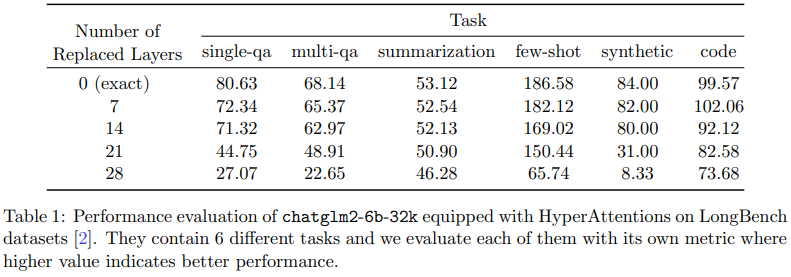

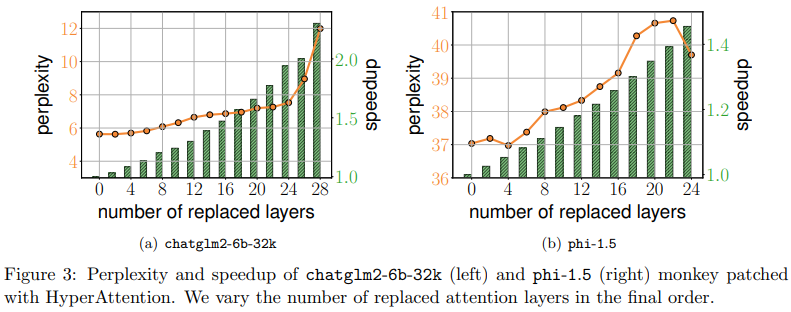

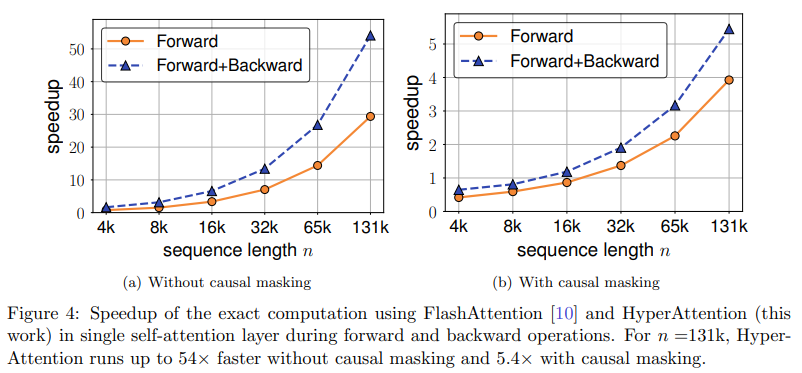

The paper reports substantial speedups in both inference and training, which makes HyperAttention a valuable tool for large language models. By simplifying complex attention calculations, it addresses the problem of long-range sequence processing and improves the practical usability of these models.

In conclusion, the research team behind HyperAttention has made significant progress in addressing the challenge of efficient long-range sequence processing in large language models. Their algorithm simplifies the complex calculations involved in attention mechanisms and offers spectral guarantees for its approximations. By leveraging techniques such as Hamming-sorted LSH, HyperAttention identifies dominant entries and optimizes matrix products, leading to substantial speedups in inference and training.

This is a promising development for natural language processing, where large language models play a central role. It opens up new possibilities for scaling self-attention mechanisms and makes these models more practical for various applications. As the demand for efficient and scalable language models continues to grow, HyperAttention represents an important step in the right direction, ultimately benefiting researchers and developers in the NLP community.

Check out the paper. All credit for this research goes to the researchers of this project.


Madhur Garg is a consulting intern at MarktechPost. He is currently pursuing his Bachelor’s degree in Civil and Environmental Engineering at the Indian Institute of Technology (IIT), Patna. He has a great passion for machine learning and enjoys exploring the latest advancements in technology and their practical applications. With a keen interest in artificial intelligence and its various applications, Madhur is determined to contribute to the field of data science and harness its potential impact in various industries.
