Introduction

Large language models (LLMs) are all the rage, and tool calling has broadened their scope. Instead of generating only text, LLMs can now drive complex automation tasks that were previously impractical, such as dynamic UI generation and agentic automation.

These models are trained on huge amounts of data, so they can both understand and generate structured data, which makes them well suited to tool-calling applications that require precise outputs. This has driven the widespread adoption of LLMs in AI-driven software development, where tool calling, ranging from simple functions to sophisticated agents, has become a focal point.

In this article, you will go from learning the fundamentals of LLM tool calling to implementing it to build agents using open-source tools.

Learning Objectives

- Learn what LLM tools are.

- Understand the fundamentals of tool calling and use cases.

- Explore how tool calling works in OpenAI (ChatCompletions API, Assistants API, Parallel tool calling, and Structured Output), Anthropic models, and LangChain.

- Learn to build capable AI agents using open-source tools.

This article was published as a part of the Data Science Blogathon.

Tools are objects that allow LLMs to interact with external environments. These tools are functions made available to LLMs, which can be executed separately whenever the LLM determines that their use is appropriate.

Usually, there are three elements of a tool definition.

- Name: A meaningful name of the function/tool.

- Description: A detailed description of the tool.

- Parameters: A JSON schema of parameters of the function/tool.

Tool calling enables the model to generate a response for a prompt that aligns with a user-defined schema for a function. In other words, when the LLM determines that a tool should be used, it generates a structured output that matches the schema for the tool’s arguments.

For instance, if you give the LLM the schema of a get_weather function and ask it for the weather in a city, it does not reply with plain text. Instead, it returns the function’s arguments formatted according to that schema, which you can use to execute the function and get the weather for that city.

Despite the name “tool calling,” the model does not actually execute any tool itself. Instead, it produces a structured output formatted according to the defined schema. You can then pass this output to the corresponding function and run it on your end.
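As a minimal sketch of this idea, the snippet below defines a get_weather tool with a name, a description, and a parameter schema, and shows how a structured output could be turned into a real function call. The model_output string is hand-written to stand in for what a model might return, and the get_weather body returns canned data; both are assumptions for illustration.

```python
import json

# Hypothetical tool implementation; a real one would query a weather API.
def get_weather(city: str, unit: str = "celsius") -> dict:
    return {"city": city, "temperature": 21, "unit": unit}

# Tool definition: name, description, and a JSON schema for the parameters.
get_weather_tool = {
    "name": "get_weather",
    "description": "Get the current weather for a city.",
    "parameters": {
        "type": "object",
        "properties": {
            "city": {"type": "string", "description": "City name, e.g. Paris"},
            "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
        },
        "required": ["city"],
    },
}

# Hand-written stand-in for the structured output a model might produce.
model_output = '{"name": "get_weather", "arguments": {"city": "Paris", "unit": "celsius"}}'

# The model only proposes the call; running it is up to your code.
call = json.loads(model_output)
available_tools = {"get_weather": get_weather}
result = available_tools[call["name"]](**call["arguments"])
print(result)
```

The model never touches get_weather directly: it only sees get_weather_tool, and your code decides whether and how to run the proposed call.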

AI labs like OpenAI and Anthropic have trained models so that you can provide the LLM with many tools and have it select the right one according to the context.

Each provider has a different way of handling tool invocations and response handling. Here’s the general flow of how tool calling works when you pass a prompt and tools to the LLM:

- Define Tools and Provide a User Prompt
  - Define tools and functions with names, descriptions, and a structured schema for arguments.
  - Also include the user-provided text, e.g., “What’s the weather like in New York today?”

- The LLM Decides to Use a Tool
  - The LLM assesses whether a tool is required.
  - If yes, it halts the text generation.
  - The LLM generates a JSON-formatted response with the tool’s parameter values.

- Extract Tool Input, Run Code, and Return Outputs
  - Extract the parameters provided in the function call.
  - Run the function by passing the parameters.
  - Pass the outputs back to the LLM.

- Generate Answers from Tool Outputs
  - The LLM uses the tool outputs to formulate a final answer.
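The four steps above can be sketched end to end without calling any provider. In the snippet below, fake_llm is a scripted stand-in for a real model and get_weather returns canned data; both are hypothetical, and the message format is simplified rather than taken from any specific API.

```python
import json

def get_weather(city: str) -> str:
    """Hypothetical tool; returns canned data instead of calling an API."""
    return f"Sunny, 24 degrees in {city}"

TOOLS = {"get_weather": get_weather}

def fake_llm(messages):
    """Scripted stand-in for a model: requests a tool once, then answers."""
    last = messages[-1]
    if last["role"] == "user":
        # Step 2: the "model" decides a tool is needed and emits JSON arguments.
        return {
            "role": "assistant",
            "tool_call": {
                "name": "get_weather",
                "arguments": json.dumps({"city": "New York"}),
            },
        }
    # Step 4: the "model" turns the tool output into a final answer.
    return {"role": "assistant", "content": f"Here is the weather: {last['content']}"}

def run_once(prompt):
    # Step 1: the user prompt (tool definitions are implicit in fake_llm here).
    messages = [{"role": "user", "content": prompt}]
    reply = fake_llm(messages)
    messages.append(reply)
    if "tool_call" in reply:
        # Step 3: extract the arguments, run the function, return the output.
        call = reply["tool_call"]
        output = TOOLS[call["name"]](**json.loads(call["arguments"]))
        messages.append({"role": "tool", "content": output})
        reply = fake_llm(messages)
    return reply["content"]

answer = run_once("What's the weather like in New York today?")
print(answer)
```

With a real provider, only fake_llm changes: the surrounding loop that runs the function and feeds the output back stays your responsibility.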

Example Use Cases

- Enabling LLMs to take action: Connect LLMs with external applications like Gmail, GitHub, and Discord to automate actions such as sending an email, pushing a PR, and sending a message.

- Providing LLMs with data: Fetch data from knowledge bases like the web, Wikipedia, and Weather APIs to provide niche information to LLMs.

- Dynamic UIs: Updating UIs of your applications based on user inputs.

Different model providers take different approaches to handling tool calling. This article will discuss the tool-calling approaches of OpenAI, Anthropic, and LangChain. You can also use open-source models like Llama 3 and inference providers like Groq for tool calling.

At the time of writing, OpenAI offers four main chat models (GPT-4o, GPT-4o-mini, GPT-4-turbo, and GPT-3.5-turbo), all of which support tool calling.

Let’s understand it using a simple calculator function example.

def calculator(operation, num1, num2):

if operation == "add":

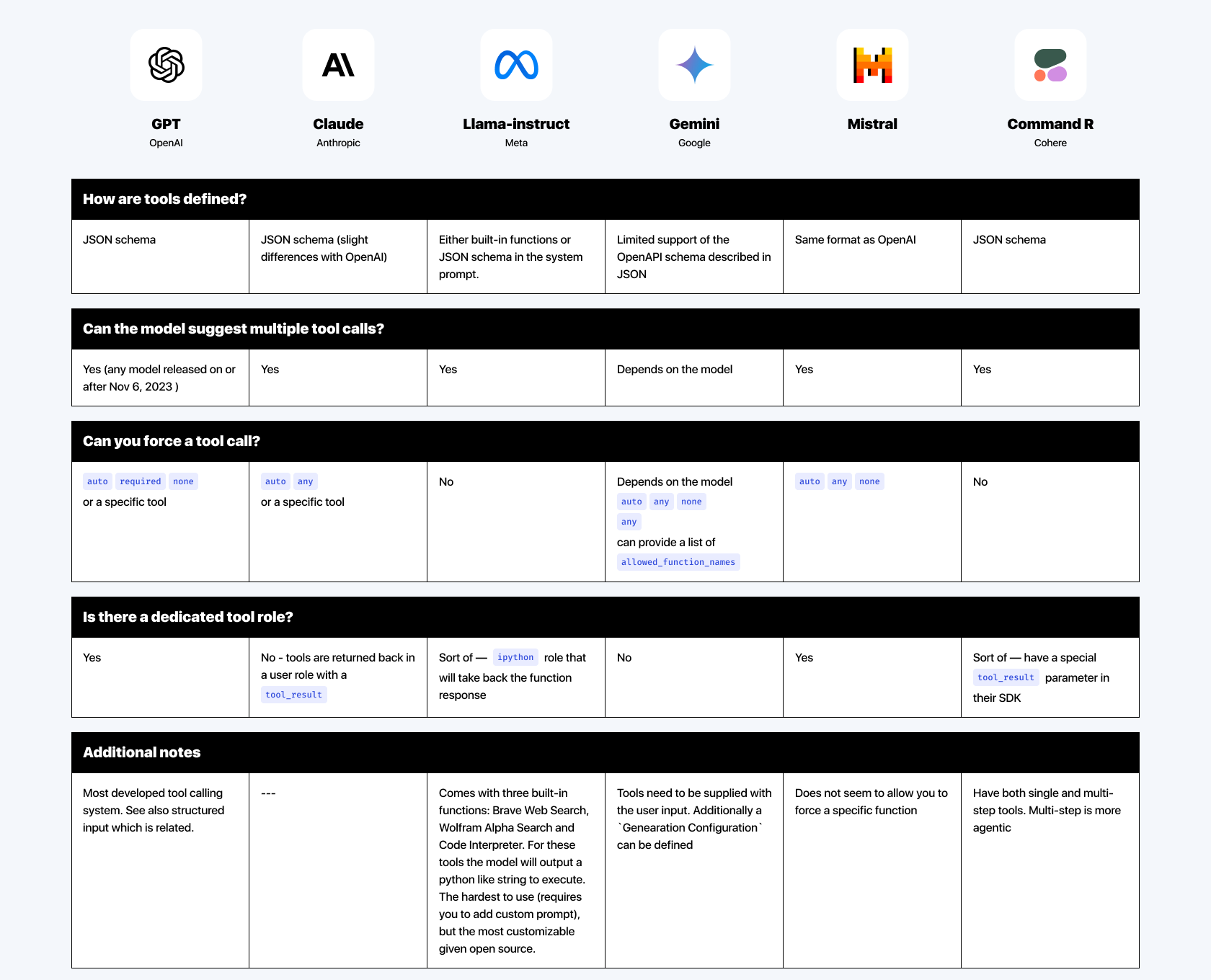

return num1 + num2

elif operation == "subtract":

return num1 - num2

elif operation == "multiply":

return num1 * num2

elif operation == "divide":

return num1 / num2Create a tool calling schema for the Calculator function.

    import os

    import openai

    # Assumes the API key is available as an environment variable
    OPENAI_API_KEY = os.environ["OPENAI_API_KEY"]
    openai.api_key = OPENAI_API_KEY

    # Define the function schema (this is what GPT-4 will use to understand how to call the function)
    calculator_function = {
        "name": "calculator",
        "description": "Performs basic arithmetic operations",
        "parameters": {
            "type": "object",
            "properties": {
                "operation": {
                    "type": "string",
                    "enum": ["add", "subtract", "multiply", "divide"],
                    "description": "The operation to perform",
                },
                "num1": {"type": "number", "description": "The first number"},
                "num2": {"type": "number", "description": "The second number"},
            },
            "required": ["operation", "num1", "num2"],
        },
    }

A typical OpenAI function/tool calling schema has a name, a description, and a parameters section. Inside the parameters section, you can provide the details of the function’s arguments.

- Each property has a data type and description.

- Optionally, a property has an enum that defines the specific values the parameter accepts. In this case, the “operation” parameter expects one of “add”, “subtract”, “multiply”, and “divide”.

- The required section lists the parameters the model must generate.
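Because the model’s arguments are only as reliable as its adherence to the schema, it can help to check them before running the function. The following is a minimal sketch of such a check for the calculator parameters; validate_arguments is a hypothetical helper that covers only required, type, and enum, and it is not a full JSON Schema validator.

```python
calculator_parameters = {
    "type": "object",
    "properties": {
        "operation": {"type": "string", "enum": ["add", "subtract", "multiply", "divide"]},
        "num1": {"type": "number"},
        "num2": {"type": "number"},
    },
    "required": ["operation", "num1", "num2"],
}

def validate_arguments(args: dict, schema: dict) -> list:
    """Return a list of problems found; an empty list means args look valid."""
    problems = []
    properties = schema.get("properties", {})
    # Every name in "required" must be present.
    for name in schema.get("required", []):
        if name not in args:
            problems.append(f"missing required parameter: {name}")
    for name, value in args.items():
        spec = properties.get(name)
        if spec is None:
            problems.append(f"unexpected parameter: {name}")
            continue
        # bool is a subclass of int in Python, so exclude it explicitly.
        if spec.get("type") == "number" and (
            isinstance(value, bool) or not isinstance(value, (int, float))
        ):
            problems.append(f"{name} should be a number")
        if spec.get("type") == "string" and not isinstance(value, str):
            problems.append(f"{name} should be a string")
        if "enum" in spec and value not in spec["enum"]:
            problems.append(f"{name} must be one of {spec['enum']}")
    return problems

good = {"operation": "add", "num1": 3, "num2": 4}
bad = {"operation": "power", "num1": "3"}
print(validate_arguments(good, calculator_parameters))
print(validate_arguments(bad, calculator_parameters))
```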

Now, use the defined schema of the function to get a response from the chat completions endpoint.

    # Example of calling the OpenAI API with a tool
    # Note: `functions` and `function_call` are the older parameter names;
    # newer API versions prefer `tools` and `tool_choice`.
    response = openai.chat.completions.create(
        model="gpt-4-0613",  # You can use any version that supports function calling
        messages=[
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": "What is 3 plus 4?"},
        ],
        functions=[calculator_function],
        function_call={"name": "calculator"},  # Instruct the model to call the calculator function
    )

    # Extracting the function call and its arguments from the response
    function_call = response.choices[0].message.function_call
    name = function_call.name
    arguments = function_call.arguments

You can now pass the arguments to the Calculator function to get an output.

    import json

    # The arguments arrive as a JSON string, so parse them first
    args = json.loads(arguments)
    result = calculator(args["operation"], args["num1"], args["num2"])

    # Output the result
    print(f"Result: {result}")

This is the simplest way to use tool calling with OpenAI models.
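The functions and function_call parameters used above are the older form of this API; more recent versions of the Chat Completions API express the same request with tools and tool_choice. The sketch below only builds the request arguments and parses a hand-written response message in dictionary form, so it runs without a network call. The helper names build_tool_request and parse_tool_calls are illustrative and not part of the OpenAI SDK.

```python
import json

calculator_function = {
    "name": "calculator",
    "description": "Performs basic arithmetic operations",
    "parameters": {
        "type": "object",
        "properties": {
            "operation": {"type": "string", "enum": ["add", "subtract", "multiply", "divide"]},
            "num1": {"type": "number"},
            "num2": {"type": "number"},
        },
        "required": ["operation", "num1", "num2"],
    },
}

def build_tool_request(function_schema, user_text, model="gpt-4o"):
    """Build keyword arguments for client.chat.completions.create(**kwargs)."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
        # Each function schema is wrapped in a {"type": "function", ...} entry.
        "tools": [{"type": "function", "function": function_schema}],
        # Force this specific tool; "auto" would let the model decide.
        "tool_choice": {"type": "function", "function": {"name": function_schema["name"]}},
    }

def parse_tool_calls(message: dict):
    """Return (call_id, name, arguments) tuples from a message dictionary."""
    return [
        (call["id"], call["function"]["name"], json.loads(call["function"]["arguments"]))
        for call in message.get("tool_calls") or []
    ]

request = build_tool_request(calculator_function, "What is 3 plus 4?")

# Hand-written example of an assistant message, shaped like the API's JSON.
sample_message = {
    "role": "assistant",
    "content": None,
    "tool_calls": [{
        "id": "call_123",
        "type": "function",
        "function": {
            "name": "calculator",
            "arguments": '{"operation": "add", "num1": 3, "num2": 4}',
        },
    }],
}
calls = parse_tool_calls(sample_message)
print(calls)
```

In this form the model can return several entries in tool_calls, each with its own id, which is what makes parallel tool calling possible.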

Using the Assistants API

You can also use tool calling with the Assistants API. This provides more freedom and control over the entire workflow, allowing you to build agents that accomplish complex automation tasks.

Here is how to use tool calling with the Assistants API, using the same calculator example.

    from openai import OpenAI

    client = OpenAI(api_key=OPENAI_API_KEY)

    assistant = client.beta.assistants.create(
        instructions="You are a calculator bot. Use the provided function to answer questions.",
        model="gpt-4o",
        tools=[
            {
                "type": "function",
                "function": {
                    "name": "calculator",
                    "description": "Performs basic arithmetic operations",
                    "parameters": {
                        "type": "object",
                        "properties": {
                            "operation": {
                                "type": "string",
                                "enum": ["add", "subtract", "multiply", "divide"],
                                "description": "The operation to perform",
                            },
                            "num1": {"type": "number", "description": "The first number"},
                            "num2": {"type": "number", "description": "The second number"},
                        },
                        "required": ["operation", "num1", "num2"],
                    },
                },
            }
        ],
    )

Create a thread and a message.

    thread = client.beta.threads.create()
    message = client.beta.threads.messages.create(
        thread_id=thread.id,
        role="user",
        content="What is 3 plus 4?",
    )

Initiate a run.

    run = client.beta.threads.runs.create_and_poll(
        thread_id=thread.id,
        assistant_id=assistant.id,
    )

Retrieve the arguments and run the Calculator function.

    import json

    arguments = run.required_action.submit_tool_outputs.tool_calls[0].function.arguments
    args = json.loads(arguments)
    result = calculator(args["operation"], args["num1"], args["num2"])

Loop through the required actions and add each tool output to a list.

    # Define the list to store tool outputs
    tool_outputs = []

    # Loop through each tool in the required action section
    for tool in run.required_action.submit_tool_outputs.tool_calls:
        if tool.function.name == "calculator":
            tool_outputs.append({
                "tool_call_id": tool.id,
                "output": str(result),
            })

Submit the tool outputs to the API and generate a response.

    # Submit the tool outputs to the API
    client.beta.threads.runs.submit_tool_outputs_and_poll(
        thread_id=thread.id,
        run_id=run.id,
        tool_outputs=tool_outputs,
    )

    messages = client.beta.threads.messages.list(thread_id=thread.id)
    print(messages.data[0].content[0].text.value)

This will output a response such as `3 plus 4 equals 7`.

Parallel Function Calling

You can also use multiple tools simultaneously for more complicated use cases. For instance, you might want both the current weather at a location and the chance of precipitation. To achieve this, you can use the parallel function calling feature.

Define two dummy functions and their schemas for tool calling.

    from openai import OpenAI

    client = OpenAI(api_key=OPENAI_API_KEY)

    # Dummy implementations that return fixed values
    def get_current_temperature(location, unit="Fahrenheit"):
        return {"location": location, "temperature": "72", "unit": unit}

    def get_rain_probability(location):
        return {"location": location, "probability": "40"}

    assistant = client.beta.assistants.create(
        instructions="You are a weather bot. Use the provided functions to answer questions.",
        model="gpt-4o",
        tools=[
            {
                "type": "function",
                "function": {
                    "name": "get_current_temperature",
                    "description": "Get the current temperature for a specific location",
                    "parameters": {
                        "type": "object",
                        "properties": {
                            "location": {
                                "type": "string",
                                "description": "The city and state, e.g., San Francisco, CA",
                            },
                            "unit": {
                                "type": "string",
                                "enum": ["Celsius", "Fahrenheit"],
                                "description": "The temperature unit to use. Infer this from the user's location.",
                            },
                        },
                        "required": ["location", "unit"],
                    },
                },
            },
            {
                "type": "function",
                "function": {
                    "name": "get_rain_probability",
                    "description": "Get the probability of rain for a specific location",
                    "parameters": {
                        "type": "object",
                        "properties": {
                            "location": {
                                "type": "string",
                                "description": "The city and state, e.g., San Francisco, CA",
                            }
                        },
                        "required": ["location"],
                    },
                },
            },
        ],
    )

Now, create a thread and initiate a run. Based on the prompt, the run will produce the JSON arguments for the required functions.

    thread = client.beta.threads.create()
    message = client.beta.threads.messages.create(
        thread_id=thread.id,
        role="user",
        content="What's the weather in San Francisco today and the likelihood it'll rain?",
    )

    run = client.beta.threads.runs.create_and_poll(
        thread_id=thread.id,
        assistant_id=assistant.id,
    )

Parse the tool parameters and call the functions.

    import json

    # Assumes the temperature call comes first and the rain call second
    tool_calls = run.required_action.submit_tool_outputs.tool_calls
    location = json.loads(tool_calls[0].function.arguments)
    weather = json.loads(tool_calls[1].function.arguments)

    temp = get_current_temperature(location["location"], location["unit"])
    rain_prob = get_rain_probability(weather["location"])

    # Output the result
    print(f"Result: {temp}")
    print(f"Result: {rain_prob}")

Define a list to store tool outputs.

    # Define the list to store tool outputs
    tool_outputs = []

    # Loop through each tool in the required action section
    for tool in run.required_action.submit_tool_outputs.tool_calls:
        if tool.function.name == "get_current_temperature":
            tool_outputs.append({
                "tool_call_id": tool.id,
                "output": str(temp),
            })
        elif tool.function.name == "get_rain_probability":
            tool_outputs.append({
                "tool_call_id": tool.id,
                "output": str(rain_prob),
            })

Submit the tool outputs and generate an answer.

    # Submit all tool outputs at once after collecting them in tool_outputs
    if tool_outputs:
        try:
            run = client.beta.threads.runs.submit_tool_outputs_and_poll(
                thread_id=thread.id,
                run_id=run.id,
                tool_outputs=tool_outputs,
            )
            print("Tool outputs submitted successfully.")
        except Exception as e:
            print("Failed to submit tool outputs:", e)
    else:
        print("No tool outputs to submit.")

    if run.status == "completed":
        messages = client.beta.threads.messages.list(thread_id=thread.id)
        print(messages.data[0].content[0].text.value)
    else:
        print(run.status)

The model will generate a complete answer based on the tools’ outputs, for example: `The current temperature in San Francisco, CA, is 72°F. There is a 40% chance of rain today.`

Refer to the official documentation for more.
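The snippets above index the tool calls by position and branch on each tool name with if/elif. One way to avoid depending on the order of the calls is a mapping from tool names to functions, sketched below. The fake_calls objects are hand-built stand-ins for run.required_action.submit_tool_outputs.tool_calls, and TOOL_REGISTRY and collect_tool_outputs are illustrative names, not part of the OpenAI SDK.

```python
import json
from types import SimpleNamespace

def get_current_temperature(location, unit="Fahrenheit"):
    return {"location": location, "temperature": "72", "unit": unit}

def get_rain_probability(location):
    return {"location": location, "probability": "40"}

# Map tool names to the Python functions that implement them.
TOOL_REGISTRY = {
    "get_current_temperature": get_current_temperature,
    "get_rain_probability": get_rain_probability,
}

def collect_tool_outputs(tool_calls):
    """Run every requested tool and build the tool_outputs list."""
    outputs = []
    for tool in tool_calls:
        func = TOOL_REGISTRY.get(tool.function.name)
        if func is None:
            continue  # unknown tool name; skipped in this sketch
        args = json.loads(tool.function.arguments)
        outputs.append({"tool_call_id": tool.id, "output": json.dumps(func(**args))})
    return outputs

# Hand-built stand-ins for the tool calls, deliberately in "reverse" order.
fake_calls = [
    SimpleNamespace(id="call_1", function=SimpleNamespace(
        name="get_rain_probability",
        arguments='{"location": "San Francisco, CA"}')),
    SimpleNamespace(id="call_2", function=SimpleNamespace(
        name="get_current_temperature",
        arguments='{"location": "San Francisco, CA", "unit": "Fahrenheit"}')),
]
tool_outputs = collect_tool_outputs(fake_calls)
print(tool_outputs)
```

Each output stays paired with the id of the call that requested it, so the order in which the model lists the calls no longer matters.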

Structured Output

Recently, OpenAI introduced structured output, which ensures that the arguments generated by the model for a function call precisely match the JSON schema you provided. This feature prevents the model from generating incorrect or unexpected enum values, keeping its responses aligned with the specified schema.

To use Structured Output for tool calling, set strict: True. The API will pre-process the supplied schema and constrain the model to adhere strictly to your schema.

    from openai import OpenAI

    client = OpenAI()

    assistant = client.beta.assistants.create(
        instructions="You are a weather bot. Use the provided functions to answer questions.",
        model="gpt-4o-2024-08-06",
        tools=[
            {
                "type": "function",
                "function": {
                    "name": "get_current_temperature",
                    "description": "Get the current temperature for a specific location",
                    "parameters": {
                        "type": "object",
                        "properties": {
                            "location": {
                                "type": "string",
                                "description": "The city and state, e.g., San Francisco, CA",
                            },
                            "unit": {
                                "type": "string",
                                "enum": ["Celsius", "Fahrenheit"],
                                "description": "The temperature unit to use. Infer this from the user's location.",
                            },
                        },
                        "required": ["location", "unit"],
                        "additionalProperties": False,
                    },
                    "strict": True,
                },
            },
            {
                "type": "function",
                "function": {
                    "name": "get_rain_probability",
                    "description": "Get the probability of rain for a specific location",
                    "parameters": {
                        "type": "object",
                        "properties": {
                            "location": {
                                "type": "string",
                                "description": "The city and state, e.g., San Francisco, CA",
                            }
                        },
                        "required": ["location"],
                        "additionalProperties": False,
                    },
                    "strict": True,
                },
            },
        ],
    )

The initial request will take a few seconds longer while the schema is processed. Subsequent tool calls reuse the cached artefacts.
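Strict mode places requirements on the schema itself, two of which are visible in the example above: each object sets additionalProperties to False, and every property is listed in required. A small pre-flight check, sketched below, can catch a schema that misses either one before it is sent. The function name check_strict_schema is hypothetical, and the check covers only these two rules, not everything the API enforces.

```python
def check_strict_schema(schema: dict, path: str = "parameters") -> list:
    """Return a list of strict-mode problems for an object schema."""
    problems = []
    if schema.get("type") != "object":
        return problems  # only object schemas are checked in this sketch
    properties = schema.get("properties", {})
    if schema.get("additionalProperties") is not False:
        problems.append(f"{path}: additionalProperties must be False")
    missing = sorted(set(properties) - set(schema.get("required", [])))
    if missing:
        problems.append(f"{path}: properties not listed in required: {missing}")
    # Recurse into nested object properties.
    for name, spec in properties.items():
        problems.extend(check_strict_schema(spec, f"{path}.{name}"))
    return problems

strict_ok = {
    "type": "object",
    "properties": {"location": {"type": "string"}, "unit": {"type": "string"}},
    "required": ["location", "unit"],
    "additionalProperties": False,
}
loose = {
    "type": "object",
    "properties": {"location": {"type": "string"}, "unit": {"type": "string"}},
    "required": ["location"],
}
print(check_strict_schema(strict_ok))
print(check_strict_schema(loose))
```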

Anthropic’s Claude family of models is efficient at tool calling as well.

The workflow for calling tools with Claude is similar to that of OpenAI. The critical difference is in how tool responses are handled. In OpenAI’s setup, tool responses are passed under a separate role, whereas with Claude’s models, tool responses are passed as content blocks under the user role.

A typical tool definition in Claude includes the function’s name, description, and JSON schema.

    import anthropic

    client = anthropic.Anthropic()

    response = client.messages.create(
        model="claude-3-5-sonnet-20240620",
        max_tokens=1024,
        tools=[
            {
                "name": "get_weather",
                "description": "Get the current weather in a given location",
                "input_schema": {
                    "type": "object",
                    "properties": {
                        "location": {
                            "type": "string",
                            "description": "The city and state, e.g. San Francisco, CA",
                        },
                        "unit": {
                            "type": "string",
                            "enum": ["celsius", "fahrenheit"],
                            "description": "The unit of temperature, either 'celsius' or 'fahrenheit'",
                        },
                    },
                    "required": ["location"],
                },
            },
        ],
        messages=[
            {
                "role": "user",
                "content": "What's the weather like in San Francisco?",
            }
        ],
    )

    print(response)

The function schema definition is similar to the one in OpenAI’s chat completions API, which we discussed earlier.

The response format, however, is where Claude’s models differ from OpenAI’s.

    {
        "id": "msg_01Aq9w938a90dw8q",
        "model": "claude-3-5-sonnet-20240620",
        "stop_reason": "tool_use",
        "role": "assistant",
        "content": [
            {
                "type": "text",
                "text": "I need to call the get_weather function, and the user wants SF, which is likely San Francisco, CA."
            },
            {
                "type": "tool_use",
                "id": "toolu_01A09q90qw90lq917835lq9",
                "name": "get_weather",
                "input": {"location": "San Francisco, CA", "unit": "celsius"}
            }
        ]
    }

You can extract the arguments, execute the original function, and pass the output back to the LLM to get a text response that incorporates the information from the function call.

    response = client.messages.create(
        model="claude-3-5-sonnet-20240620",
        max_tokens=1024,
        tools=[
            {
                "name": "get_weather",
                "description": "Get the current weather in a given location",
                "input_schema": {
                    "type": "object",
                    "properties": {
                        "location": {
                            "type": "string",
                            "description": "The city and state, e.g. San Francisco, CA",
                        },
                        "unit": {
                            "type": "string",
                            "enum": ["celsius", "fahrenheit"],
                            "description": "The unit of temperature, either 'celsius' or 'fahrenheit'",
                        },
                    },
                    "required": ["location"],
                },
            }
        ],
        messages=[
            {
                "role": "user",
                "content": "What's the weather like in San Francisco?",
            },
            {
                "role": "assistant",
                "content": [
                    {
                        "type": "text",
                        "text": "I need to use get_weather, and the user wants SF, which is likely San Francisco, CA.",
                    },
                    {
                        "type": "tool_use",
                        "id": "toolu_01A09q90qw90lq917835lq9",
                        "name": "get_weather",
                        "input": {"location": "San Francisco, CA", "unit": "celsius"},
                    },
                ],
            },
            {
                "role": "user",
                "content": [
                    {
                        "type": "tool_result",
                        "tool_use_id": "toolu_01A09q90qw90lq917835lq9",  # from the API response
                        "content": "65 degrees",  # from running your tool
                    }
                ],
            },
        ],
    )

    print(response)

Here, you can observe that we passed the tool-calling output under the user role.

For more on Claude’s tool calling, refer to the official documentation.
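The step between the two requests, turning tool_use blocks into a tool_result message, can be sketched as follows. The content list is a plain-dictionary version shaped like the example response above, get_weather returns canned data, and build_tool_result_message is an illustrative helper rather than part of the Anthropic SDK.

```python
def get_weather(location: str, unit: str = "celsius") -> str:
    """Hypothetical tool; returns canned data."""
    return f"18 degrees {unit} in {location}"

TOOLS = {"get_weather": get_weather}

def build_tool_result_message(assistant_content: list) -> dict:
    """Run each tool_use block and wrap the outputs in one user message."""
    results = []
    for block in assistant_content:
        if block.get("type") != "tool_use":
            continue  # skip text blocks
        output = TOOLS[block["name"]](**block["input"])
        results.append({
            "type": "tool_result",
            "tool_use_id": block["id"],  # must match the id of the tool_use block
            "content": output,
        })
    return {"role": "user", "content": results}

# Plain-dictionary content, shaped like the example response shown earlier.
assistant_content = [
    {"type": "text", "text": "I need to call the get_weather function."},
    {
        "type": "tool_use",
        "id": "toolu_01A09q90qw90lq917835lq9",
        "name": "get_weather",
        "input": {"location": "San Francisco, CA", "unit": "celsius"},
    },
]
message = build_tool_result_message(assistant_content)
print(message)
```

The returned dictionary can be appended to the messages list after the assistant turn, which mirrors the structure of the second request above.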

Here is a comparative overview of tool-calling features across different LLM providers.

Managing multiple LLM providers can quickly become difficult while building complex AI applications. Hence, frameworks like LangChain have created a unified interface for handling tool calls from multiple LLM providers.

Create a custom tool using the @tool decorator in LangChain.

    from langchain_core.tools import tool

    @tool
    def add(a: int, b: int) -> int:
        """Adds a and b.

        Args:
            a: first int
            b: second int
        """
        return a + b

    @tool
    def multiply(a: int, b: int) -> int:
        """Multiplies a and b.

        Args:
            a: first int
            b: second int
        """
        return a * b

    tools = [add, multiply]

Initialise an LLM.

    from langchain_openai import ChatOpenAI

    llm = ChatOpenAI(model="gpt-3.5-turbo-0125")

Use the bind_tools method to add the defined tools to the LLM.

    llm_with_tools = llm.bind_tools(tools)

Sometimes, you may want to force the LLM to use a certain tool. Many LLM providers allow this behaviour. To achieve this in LangChain, pass the tool_choice argument:

    always_multiply_llm = llm.bind_tools([multiply], tool_choice="multiply")

And if you want to force the LLM to call at least one of the provided tools:

    always_call_tool_llm = llm.bind_tools([add, multiply], tool_choice="any")

Schema Definition Using Pydantic

You can also use Pydantic to define a tool schema. This is useful when the tool has a complex schema.

    from langchain_core.pydantic_v1 import BaseModel, Field

    # Note that the docstrings here are crucial, as they will be passed along
    # to the model together with the class name.
    class add(BaseModel):
        """Add two integers together."""

        a: int = Field(..., description="First integer")
        b: int = Field(..., description="Second integer")

    class multiply(BaseModel):
        """Multiply two integers together."""

        a: int = Field(..., description="First integer")
        b: int = Field(..., description="Second integer")

    tools = [add, multiply]

Write detailed docstrings and clear parameter descriptions for optimal results.
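To see why docstrings and type hints matter, it helps to picture how a tool definition can be derived from a plain function. The snippet below is a simplified, standard-library-only sketch of that idea. It is not LangChain’s implementation, schema_from_function is a hypothetical name, and the type mapping handles only a few basic Python types.

```python
import inspect

# Minimal mapping from Python annotations to JSON schema types.
PYTHON_TO_JSON = {int: "integer", float: "number", str: "string", bool: "boolean"}

def schema_from_function(func) -> dict:
    """Build a tool definition from a function's signature and docstring."""
    signature = inspect.signature(func)
    properties, required = {}, []
    for name, param in signature.parameters.items():
        # Unknown or missing annotations fall back to "string" in this sketch.
        json_type = PYTHON_TO_JSON.get(param.annotation, "string")
        properties[name] = {"type": json_type}
        # Parameters without a default value are treated as required.
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }

def multiply(a: int, b: int) -> int:
    """Multiplies a and b."""
    return a * b

schema = schema_from_function(multiply)
print(schema)
```

The function name becomes the tool name and the docstring becomes the description, so a vague docstring leaves the model with little to go on when choosing a tool.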

Agents are automated programs powered by LLMs that interact with external environments. Instead of executing one action after another in a chain, the agents can decide which actions to take based on some conditions.
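The difference from a fixed chain can be sketched with a small loop in which a policy picks the next action based on what has happened so far. Here scripted_policy is a hard-coded stand-in for an LLM, and the two tools are hypothetical and return canned strings; a real agent would ask a model for the next tool call instead.

```python
def search_docs(query: str) -> str:
    """Hypothetical tool; returns a canned result."""
    return f"3 results for '{query}'"

def create_issue(title: str) -> str:
    """Hypothetical tool; pretends to create an issue."""
    return f"issue created: {title}"

TOOLS = {"search_docs": search_docs, "create_issue": create_issue}

def scripted_policy(history):
    """Stand-in for an LLM: picks the next action from what has been done."""
    done = [step["tool"] for step in history]
    if "search_docs" not in done:
        return {"tool": "search_docs", "args": {"query": "login bug"}}
    if "create_issue" not in done:
        return {"tool": "create_issue", "args": {"title": "Fix login bug"}}
    return None  # nothing left to do

def run_agent(policy, max_steps: int = 5):
    history = []
    for _ in range(max_steps):  # guard against endless loops
        action = policy(history)
        if action is None:
            break
        observation = TOOLS[action["tool"]](**action["args"])
        history.append({"tool": action["tool"], "observation": observation})
    return history

history = run_agent(scripted_policy)
print(history)
```

The max_steps guard is a design choice worth keeping with a real model, since a model that keeps requesting tools would otherwise loop indefinitely.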

Getting structured responses from LLMs to work with AI agents used to be tedious. Tool calling has made getting the desired structured response reasonably simple, and this capability is now driving the AI agent revolution.

So, let’s see how you can build a real-world agent, such as a GitHub PR reviewer, using the OpenAI SDK and an open-source toolset called Composio.

What is Composio?

Composio is an open-source tooling solution for building AI agents. It offers out-of-the-box integrations for applications like GitHub, Notion, and Slack for constructing complex agentic automation, and it lets you integrate tools with agents without worrying about complex app authentication methods like OAuth.

These tools can be used with LLMs. They are optimized for agentic interactions, which makes them more reliable than simple function calls. They also handle user authentication and authorization.

You can use these tools with the OpenAI SDK, LangChain, LlamaIndex, etc.

Let’s see an example where you will build a GitHub PR review agent using the OpenAI SDK.

Install the OpenAI SDK and Composio.

    pip install openai composio

Log in to your Composio user account.

    composio login

Add the GitHub integration by completing the integration flow.

    composio add github
    composio apps update

Enable a trigger to receive PRs when they are created.

    composio triggers enable github_pull_request_event

Create a new file, import libraries, and define the tools.

    import os

    from composio_openai import Action, ComposioToolSet
    from openai import OpenAI
    from composio.client.collections import TriggerEventData

    composio_toolset = ComposioToolSet()

    pr_agent_tools = composio_toolset.get_actions(
        actions=[
            Action.GITHUB_GET_CODE_CHANGES_IN_PR,  # For a given PR, it gets all the changes
            Action.GITHUB_PULLS_CREATE_REVIEW_COMMENT,  # For a given PR, it creates a comment
            Action.GITHUB_ISSUES_CREATE,  # If required, allows you to create issues on GitHub
        ]
    )

Initialise an OpenAI instance and define a prompt.

    openai_client = OpenAI()

    code_review_assistant_prompt = """
    You are an experienced code reviewer.
    Your task is to review the provided file diff and give constructive feedback.

    Follow these steps:
    1. Identify if the file contains significant logic changes.
    2. Summarize the changes in the diff in clear and concise English within 100 words.
    3. Provide actionable suggestions if there are any issues in the code.

    Once you have decided on the changes for any TODOs, create a GitHub issue.
    """

Create an OpenAI assistant with the prompt and the tools.

    # Give OpenAI access to all the tools
    assistant = openai_client.beta.assistants.create(
        name="PR Review Assistant",
        description="An assistant to help you with reviewing PRs",
        instructions=code_review_assistant_prompt,
        model="gpt-4o",
        tools=pr_agent_tools,
    )
    print("Assistant is ready")

Now, set up a webhook to receive the PRs fetched by the triggers and a callback function to process them.

    # Create a trigger listener
    listener = composio_toolset.create_trigger_listener()

    # Triggers when a new PR is opened
    @listener.callback(filters={"trigger_name": "github_pull_request_event"})
    def review_new_pr(event: TriggerEventData) -> None:
        # Using the information from the trigger, execute the agent
        code_to_review = str(event.payload)
        thread = openai_client.beta.threads.create()
        openai_client.beta.threads.messages.create(
            thread_id=thread.id, role="user", content=code_to_review
        )

        # Print a link to the thread
        url = f"https://platform.openai.com/playground/assistants?assistant={assistant.id}&thread={thread.id}"
        print("Visit this URL to view the thread: ", url)

        # Start the run and let Composio handle the assistant's tool calls
        run = openai_client.beta.threads.runs.create(
            thread_id=thread.id, assistant_id=assistant.id
        )
        composio_toolset.wait_and_handle_assistant_tool_calls(
            client=openai_client,
            run=run,
            thread=thread,
        )

    print("Listener started!")
    print("Create a PR to get the review")
    listener.listen()

Here is what is going on in the above code block:

- Initialize Listener and Define Callback: We define an event listener with a filter on the trigger name, together with a callback function. The callback function is called when the event listener receives an event from the specified trigger, i.e., github_pull_request_event.

- Process PR Content: The callback extracts the code diffs from the event payload.

- Run Assistant Agent: It creates a new OpenAI thread and sends the code diffs to the GPT model.

- Manage Tool Calls and Start Listening: Composio handles the tool calls during execution, and the listener is activated for ongoing PR monitoring.

With this, you will have a fully functional AI agent to review new pull requests. Whenever a new pull request is raised, the webhook triggers the callback function, and finally, the agent posts a summary of the code diffs as a comment on the PR.

Conclusion

Tool calling by large language models is at the forefront of the agentic revolution. It has enabled use cases that were previously impractical, such as letting machines interact with external applications as and when needed and generating dynamic UIs. Developers can build complex agentic automation by leveraging tools and frameworks like the OpenAI SDK, LangChain, and Composio.

Key Takeaways

- Tools are objects that let the LLMs interface with external applications.

- Tool calling is the mechanism by which LLMs generate structured arguments for a required function based on the user’s message.

- Major LLM providers such as OpenAI and Anthropic offer function calling, each with a different implementation.

- LangChain offers a unified API for tool calling using LLMs.

- Composio offers tools and integrations like GitHub, Slack, and Gmail for complex agentic automation.

Frequently Asked Questions

A. Tools are objects that let the LLMs interact with external environments, such as Code interpreters, GitHub, Databases, the Internet, etc.

A. LLMs, or Large Language Models, are advanced AI systems designed to understand, generate, and respond to human language by processing vast amounts of text data.

A. Tool calling enables LLMs to generate the structured schema of function arguments as and when needed.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.
