Introduction

Running large language models (LLMs) locally can be a game-changer, whether you’re experimenting with AI or building advanced applications. But let’s be honest: setting up your environment and getting these models to run smoothly on your machine can be a real pain.

Enter Ollama, the platform that makes working with open-source LLMs easy. Imagine having everything you need, from model weights to configuration, neatly packaged and described by a single Modelfile. It’s like Docker for LLMs! Ollama brings the power of advanced AI models directly to your local machine, giving you transparency, control, and customization.

In this guide, we’ll explore Ollama, explain how it works, and provide step-by-step instructions for installing and running models. Let’s dive in and see how Ollama changes the way developers and enthusiasts work with AI!

Overview

- Revolutionize your AI projects: Learn how Ollama simplifies running large language models locally.

- Local AI made simple: Find out how Ollama makes complex LLM configurations a breeze.

- Streamline LLM deployment: Learn how Ollama brings powerful AI models to your local machine.

- Your guide to Ollama: Step-by-step instructions for installing and running open-source LLMs.

- Transform your experience with AI: See how Ollama brings transparency, control, and customization to LLMs.

What is Ollama?

Ollama is a software platform designed to streamline the process of running open-source LLMs on personal computers. It removes the complexities of managing model weights, configurations, and dependencies, allowing users to focus on interacting with and exploring the capabilities of LLMs.

Main features of Ollama

These are the key features of Ollama:

- Local model execution: Ollama allows you to run AI language models directly on your computer instead of relying on cloud services. This approach improves data privacy and enables offline use, giving you greater control over your AI applications.

- Open-source models: Ollama supports open-source AI models, ensuring transparency and flexibility. Users can inspect, modify, and contribute to the development of these models, fostering a collaborative and innovative environment.

- Easy setup: Ollama simplifies the installation and configuration process, making it accessible even to those with limited technical knowledge. The user-friendly interface and comprehensive documentation guide you through every step, from downloading the model to actually running it.

- Variety of models: Ollama offers a variety of language models tailored to different needs. Whether you need models for text generation, summarization, translation, or other natural language processing tasks, Ollama offers multiple options for different applications and industries.

- Customization: With Ollama, you can tailor the behavior of AI models using Modelfiles. This feature allows you to adjust parameters, integrate additional data, and optimize models for specific use cases, so that the model behaves according to your requirements.

- API for developers: Ollama offers an API that developers can use to integrate AI capabilities into their software. The API can be called from multiple programming languages and frameworks, making it easy to incorporate language models into applications and add AI-powered features.

- Cross-platform: Ollama is designed to run across a variety of operating systems, including Windows, macOS, and Linux. This cross-platform compatibility lets users deploy and run AI models on their preferred hardware and operating environment.

- Resource management: Ollama manages the use of your computer's resources so that AI models run efficiently without overloading your system. This includes CPU and GPU resource allocation and memory management to maintain performance and stability.

- Updates: Staying up to date with the latest advances in AI is easy with Ollama. The platform allows you to download and install newer versions of models as they become available, so you benefit from ongoing improvements in the field.

- Offline use: Ollama's AI models can operate without an internet connection once installed and configured. This capability is particularly valuable for environments with limited or unreliable internet access, as it keeps AI functionality available regardless of connectivity issues.
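To make the developer API feature concrete, here is a minimal Python sketch that sends one prompt to a locally running Ollama server using only the standard library. It assumes the server is listening on its default address (`http://localhost:11434`) and that a model tagged `llama3` has already been downloaded; the function names are illustrative and not part of Ollama.

```python
import json
import urllib.request

# Assumption: a local Ollama server is running on its default address.
OLLAMA_GENERATE_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for the generate endpoint."""
    # "stream": False asks for a single JSON reply instead of a token stream.
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_GENERATE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as reply:
        body = json.loads(reply.read().decode("utf-8"))
    # The generated text is returned in the "response" field.
    return body["response"]
```

With the server running, calling `generate("llama3", "Write a poem about a flower.")` should return the model's reply as a string. If no server is running, the call fails with a connection error.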

How does Ollama work?

Ollama works by bundling everything an LLM needs into a single self-contained package, much as a container image does for an application. This package includes all the necessary components:

- Model weights: The data that defines the LLM's capabilities.

- Configuration files: Settings that dictate how the model works.

- Dependencies: Required software libraries and tools.

By packaging these elements together, Ollama provides a consistent, self-contained setup for each model, simplifying deployment and avoiding potential software conflicts.

Workflow Overview

- Choose an open-source LLM: Ollama supports models such as Llama 3, Mistral, Phi-3, Code Llama, and Gemma.

- Define model settings (optional): Advanced users can customize model behavior through a Modelfile, specifying the base model and version, hardware acceleration, and other details.

- Run the LLM: Simple commands download the model weights, set up the model, and launch the LLM.

- Interact with the LLM: Use Ollama libraries or a user interface to send prompts and receive responses.
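The workflow above can be sketched as a small Python script that drives the `ollama` command-line tool. This is a rough illustration, not an official client: the helper names are hypothetical, and the model tag `llama3` is only an example.

```python
import shutil
import subprocess
from typing import List


def pull_command(model: str) -> List[str]:
    """Command that downloads the model weights."""
    return ["ollama", "pull", model]


def run_command(model: str, prompt: str) -> List[str]:
    """Command that runs the model once with a single prompt."""
    return ["ollama", "run", model, prompt]


def run_workflow(model: str, prompt: str) -> str:
    """Download the model if needed, then return its reply to the prompt."""
    if shutil.which("ollama") is None:
        raise RuntimeError("the ollama CLI was not found on PATH")
    # check=True raises CalledProcessError if a command fails.
    subprocess.run(pull_command(model), check=True)
    result = subprocess.run(
        run_command(model, prompt),
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout
```

For example, `run_workflow("llama3", "Write a poem about a flower.")` would download the model on first use and return the generated text. Keeping the command builders separate from the runner makes the steps easy to inspect without starting a download.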

Ollama's source code is available on GitHub in the ollama/ollama repository.

Installation of Ollama

Here are the system requirements:

- Compatible with macOS, Linux and Windows (preview).

- For Windows, version 10 or later is required.

Installation steps

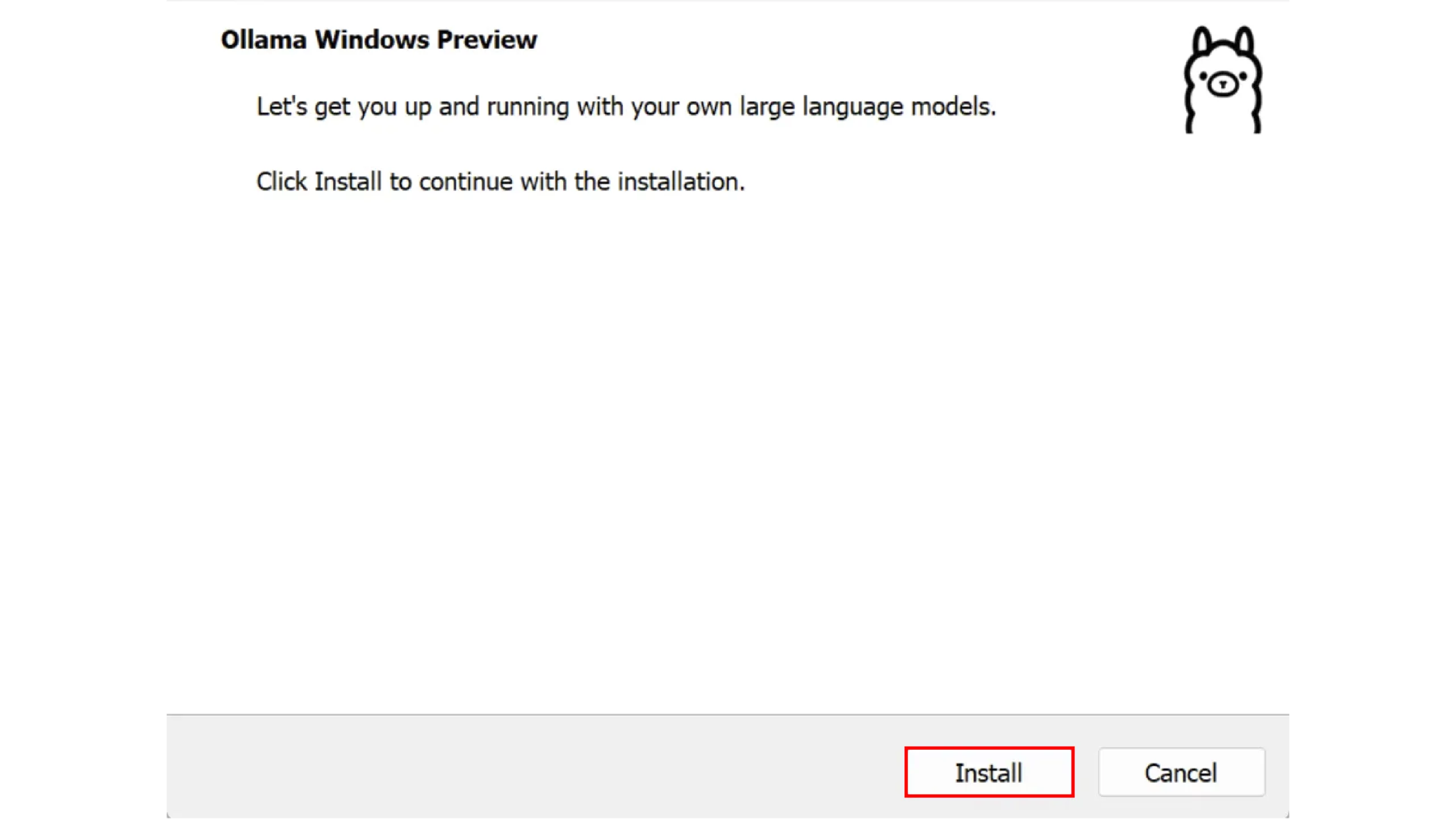

- Download and installation

Visit the Ollama website to download the appropriate version.

Follow the standard installation process.

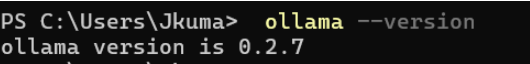

- Verification

Open a terminal or command prompt.

Type ollama --version to verify the installation.
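The same check can be scripted. The sketch below, with a hypothetical helper name, looks for the `ollama` executable on the PATH and returns whatever `ollama --version` prints, or `None` if the tool is not installed.

```python
import shutil
import subprocess
from typing import Optional


def ollama_version(executable: str = "ollama") -> Optional[str]:
    """Return the output of `ollama --version`, or None if it is not installed."""
    path = shutil.which(executable)
    if path is None:
        return None
    result = subprocess.run(
        [path, "--version"],
        capture_output=True,
        text=True,
        check=False,
    )
    # Fall back to stderr in case the version text is printed there.
    return result.stdout.strip() or result.stderr.strip()
```

A `None` result usually means the installer did not add Ollama to the PATH, or that the terminal needs to be restarted.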

Running a model with Ollama

Loading a model

- Load a model: Use the CLI to load the desired model:

ollama run llama2

- Generate text: Generate text by sending prompts, for example, “Write a poem about a flower.”

Running your first model with customization

Ollama offers a simple method for managing LLMs. Here's how:

- Choose a model: Select from the available open-source LLM options based on your needs.

- Create a Modelfile: Customize the model settings as needed, specifying details such as model version and hardware acceleration. Write the Modelfile following the Ollama documentation.

- Create the model: Use ollama create with the name of the model to build it from the Modelfile.

ollama create model_name -f path/to/Modelfile

- Run the model: Start the LLM with ollama run model_name.

ollama run model_name

- Interact with the LLM: Depending on the model, interact through a command-line interface or integrate with Python libraries.
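As a rough illustration of the customization steps, the following Python sketch renders a small Modelfile and builds the matching `ollama create` command. `FROM`, `PARAMETER`, and `SYSTEM` are Modelfile instructions; the helper names, the base model `llama3`, and the chosen parameter values are assumptions made for the example.

```python
from pathlib import Path
from typing import Dict, List


def render_modelfile(
    base_model: str,
    system_prompt: str,
    parameters: Dict[str, object],
) -> str:
    """Return Modelfile text for a customized model."""
    lines = [f"FROM {base_model}"]  # the model to build on
    for name, value in parameters.items():
        lines.append(f"PARAMETER {name} {value}")  # e.g. temperature
    lines.append(f'SYSTEM """{system_prompt}"""')  # default system message
    return "\n".join(lines) + "\n"


def create_command(model_name: str, modelfile: Path) -> List[str]:
    """Command that builds a named model from the Modelfile."""
    return ["ollama", "create", model_name, "-f", str(modelfile)]


def write_modelfile(path: Path, text: str) -> Path:
    """Save the Modelfile text so `ollama create` can read it."""
    path.write_text(text, encoding="utf-8")
    return path
```

For instance, `render_modelfile("llama3", "You are a concise assistant.", {"temperature": 0.7})` produces three lines starting with `FROM llama3`. After saving that text with `write_modelfile`, the list returned by `create_command` can be passed to `subprocess.run` to build the model.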

Example of interaction

- Sending prompts via the command line interface:

ollama run model_name "Write a song about a flower"

Benefits and challenges of Ollama

These are the benefits and challenges of Ollama:

Benefits of Ollama

- Data privacy: Your prompts and outputs remain on your machine, reducing data exposure.

- Performance: Local processing can be faster, especially for frequent queries.

- Cost efficiency: No ongoing cloud fees, just your initial hardware investment.

- Customization: It's easier to fine-tune models or experiment with different versions.

- Offline use: The models work without an Internet connection once downloaded.

- Learning opportunity: Hands-on experience with LLM implementation and operation.
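Because offline use depends on which models are already on disk, it can help to list them before disconnecting. This sketch assumes a local Ollama server on its default address and reads the model names from its `/api/tags` endpoint; the helper names are illustrative.

```python
import json
import urllib.request
from typing import List

# Assumption: a local Ollama server is running on its default address.
OLLAMA_TAGS_URL = "http://localhost:11434/api/tags"


def model_names(tags_reply: dict) -> List[str]:
    """Extract model names from a decoded /api/tags reply."""
    return [entry["name"] for entry in tags_reply.get("models", [])]


def list_local_models(timeout: float = 10.0) -> List[str]:
    """Ask the local server which models are already downloaded."""
    with urllib.request.urlopen(OLLAMA_TAGS_URL, timeout=timeout) as reply:
        return model_names(json.loads(reply.read().decode("utf-8")))
```

Any model returned by `list_local_models()` should be usable without an internet connection.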

The challenges of Ollama

- Hardware Requirements: Powerful GPUs are often needed for good performance.

- Storage space: Large models require a lot of disk space.

- Configuration complexity: Initial setup can be tricky for beginners.

- Update Management: You are responsible for keeping the models and software up to date.

- Limited resources: Your PC's capabilities may restrict the model size or performance.

- Troubleshooting: Local issues may require more technical expertise to resolve.
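To get a feel for the hardware and storage challenges, a back-of-the-envelope estimate is often enough. The sketch below approximates the size of the weights alone from the parameter count and the number of bits stored per weight. It is a simplification: real model files also carry metadata, and running a model needs extra memory beyond the weights.

```python
def estimate_weights_gb(parameter_count: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    bytes_total = parameter_count * bits_per_weight / 8
    return bytes_total / 1e9


# A 7-billion-parameter model at two hypothetical precisions.
four_bit = estimate_weights_gb(7e9, 4)    # 3.5
sixteen_bit = estimate_weights_gb(7e9, 16)  # 14.0
```

By this estimate, a 7-billion-parameter model needs roughly 3.5 GB at 4 bits per weight and roughly 14 GB at 16 bits per weight, which is why lower-precision versions are popular on consumer hardware.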

Conclusion

Ollama is a powerful tool for enthusiasts and professionals alike. It allows for local deployment, customization, and a deeper understanding of large language models. By focusing on open-source models and offering an intuitive user interface, Ollama makes advanced AI technology more accessible and transparent to everyone.

Frequently asked questions

Question: Do I need a powerful computer to use Ollama?

Answer: It depends on the model. Smaller models may work on average computers, but larger, more complex models may require a computer with a good graphics card (GPU).

Question: Is Ollama free to use?

Answer: Yes, it is free. You only pay for the electricity your computer uses and any upgrades needed to run larger models.

Question: Can I use Ollama offline?

Answer: Yes, once you have downloaded a model, you can use it without internet access.

Question: What can I use Ollama for?

Answer: You can use it to help with writing, answer questions, provide coding assistance, translate, and perform other text-based tasks that language models can handle.

Question: Can I customize the models?

Answer: Yes, to a certain extent. You can adjust certain settings and parameters. Some models also allow you to fine-tune them with your own data, but this requires more technical knowledge.