Introduction

Large language models (LLMs) are crucial in various applications such as chatbots, search engines, and coding assistants. Improving the efficiency of LLM inference is vital due to the significant computational and memory demands during the “decode” phase of LLM operations, which handles the processing of tokens one at a time per request. Batching, a key technique, helps manage the costs associated with fetching model weights from memory, increasing performance by optimizing memory bandwidth utilization.

The Bottleneck of Large Language Models (LLM)

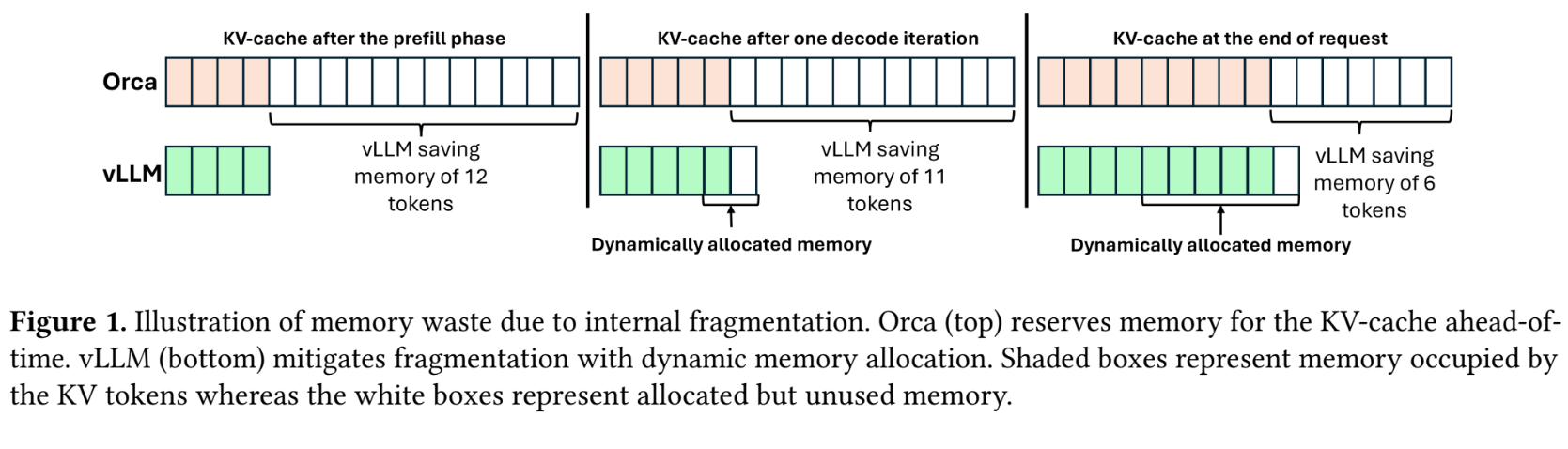

One of the main challenges in implementing LLM efficiently is memory management, particularly during the “decode” phase, which is memory-bound. Traditional methods involve reserving a fixed amount of GPU memory for the KV cache and maintaining the state in memory for each inference request. While simple, this approach results in significant memory waste due to internal fragmentation; Requests typically use less memory than is reserved, and much of it remains unused, hampering performance as systems cannot effectively support large batch sizes.

Traditional approaches and their limitations

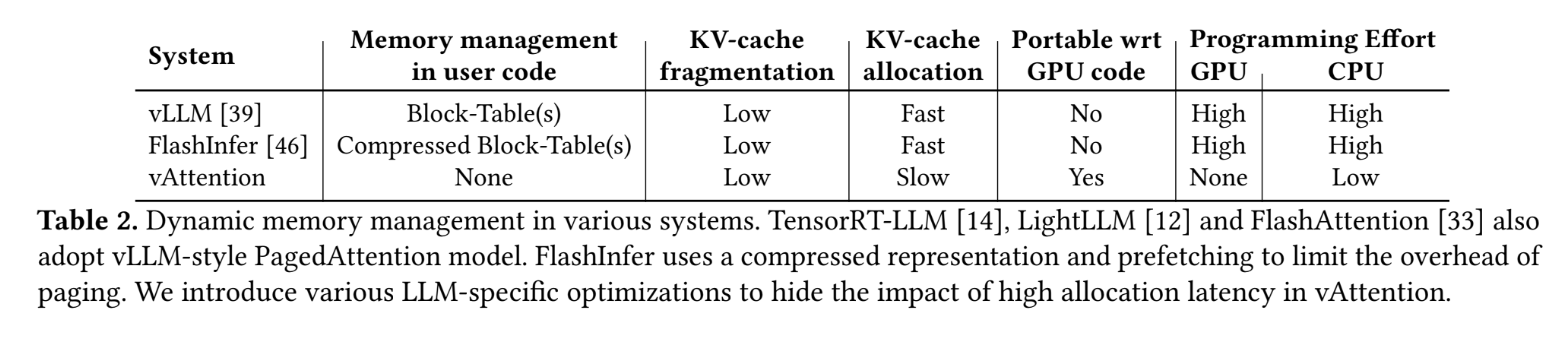

To address the inefficiencies of fixed memory allocation, the PagedAttention method was introduced. Inspired by virtual memory management in operating systems, PagedAttention enables dynamic memory allocation for the KV cache, significantly reducing memory waste by allocating small blocks of memory dynamically as needed instead of reserving large amounts of memory in advance. Despite its advantages in reducing fragmentation, PagedAttention presents its own set of challenges. It requires changes in memory layout from contiguous to non-contiguous virtual memory, which requires modifications to the attention cores to accommodate these changes. Additionally, it complicates software architecture by adding memory management layers that traditionally belong to operating systems, resulting in increased software complexity and potential performance overhead because additional memory management tasks are handled in the user space.

A game changer for LLM memory management

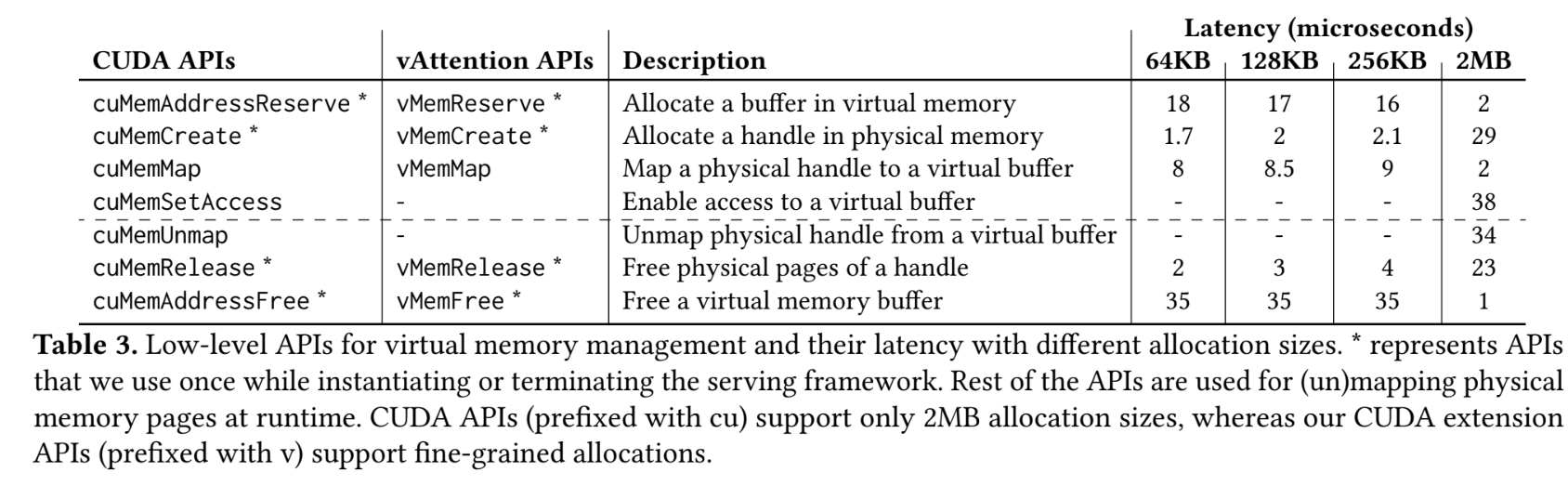

vAttention It marks a significant advance in memory management for large language models (LLM), improving the speed and efficiency of model operations without the need for a comprehensive system overhaul. By maintaining virtual memory contiguity, vAttention ensures a more streamlined approach, leveraging existing system support for dynamic memory allocation, which is less complex and more manageable than previous methods.

What is vAttention?

vAttention introduces a refined strategy for memory management in LLMs by utilizing a system that keeps virtual memory contiguous while allowing dynamic allocation of physical memory on demand. This approach simplifies the management of KV caches without committing physical memory upfront, mitigating common fragmentation problems and allowing for greater flexibility and efficiency. The system integrates seamlessly with existing server frameworks, requiring minimal changes to the focus core or memory management practices.

Key Advantages of vAttention: Speed, Efficiency and Simplicity

Key benefits of vAttention include improved processing speed, operational efficiency, and simplified integration. By avoiding non-contiguous memory allocation, vAttention improves the runtime performance of LLMs, which are capable of generating tokens up to almost twice as fast as previous methods. This speed improvement does not sacrifice efficiency, as the system effectively manages GPU memory usage to accommodate different batch sizes without excessive waste. Additionally, the simplicity of vAttention integration helps preserve the original structure of LLMs, facilitating easier upgrades and maintenance without the need for major code rewrites or specialized memory management. This simplification extends to the system's ability to work with unchanged attention cores, reducing the learning curve and implementation time for developers.

How does vAttention work?

The vAttention mechanism is designed to optimize performance during various phases of computational tasks, particularly focusing on memory management and maintaining consistent output quality. This deep dive into how vAttention works will explore its different phases and strategies to improve system efficiency.

Preload phase: Optimizing memory allocation for faster startup

The prefetch phase of vAttention addresses the problem of internal fragmentation in memory allocation. By adopting an adaptive memory allocation strategy, vAttention ensures that smaller memory blocks are used efficiently, minimizing space waste. This approach is critical for applications that require high-density memory, allowing them to run more effectively on constrained systems.

Another key feature of the prefetch phase is the ability to overlap memory allocation with processing tasks. This overlay technique speeds up system startup and maintains a smooth operation flow. By initiating memory allocation during idle processing cycles, vAttention can take advantage of processor time that would otherwise be wasted, improving overall system performance.

Intelligent recovery is an integral part of the prefetch phase, where vAttention actively monitors memory usage and recovers unused memory segments. This dynamic reallocation helps prevent system overload and memory leaks, ensuring that resources are available for critical tasks when needed. The mechanism is designed to be proactive, keeping the system agile and efficient.

Decoding phase: Maintain maximum performance throughout inference

During the decoding phase, vAttention focuses on maintaining peak performance to ensure consistent performance. This is achieved through a finely tuned orchestration of computational resources, ensuring that each component functions optimally and without bottlenecks. The decoding phase is crucial for applications that require real-time processing and high data throughput, as it balances speed and accuracy.

Through these phases, vAttention demonstrates its effectiveness in improving system performance, making it a valuable tool for various applications that require sophisticated processing and memory management.

Read also: What are the different types of attention mechanisms?

vAttention vs. Paginated attention

Significant differences in performance and usability reveal a clear preference in most scenarios when comparing vAttention and PagedAttention. vAttention, with its simplified approach to managing attention mechanisms in neural networks, has demonstrated greater efficiency and effectiveness than PagedAttention. This is particularly evident in tasks involving large data sets where attention span must be dynamically adjusted to optimize computational resources.

Speed gains in different scenarios

Performance benchmarks show that vAttention provides notable speed gains across various tasks. In natural language processing tasks, vAttention reduced training time by up to 30% compared to PagedAttention. Similarly, in image recognition tasks, the speed improvement was approximately 25%. These gains are attributed to vAttention's ability to allocate computational resources more efficiently by dynamically adjusting its approach based on data complexity and relevance.

The ease of use factor: vAttention's simplicity wins

One of the standout features of vAttention is its user-friendly design. Unlike PagedAttention, which often requires extensive configuration and adjustments, vAttention is designed with simplicity in mind. It requires fewer parameters and less manual intervention, making it more accessible to users with varying levels of machine learning experience. This simplicity doesn't come at the cost of performance, making vAttention the preferred choice for developers looking for an efficient yet manageable solution.

Conclusion

As we continue to explore the capabilities of large language models (LLMs), their integration into various sectors promises substantial benefits. The future lies in improving its understanding of complex data, perfecting its ability to generate human-like responses, and expanding its application in healthcare, finance, and education.

To fully realize the potential of ai, we must focus on ethical practices. This includes ensuring that models do not perpetuate biases and that their implementation considers social impacts. Collaboration between academia, industry and regulators will be vital to develop guidelines that encourage innovation while protecting individual rights.

Furthermore, improving the efficiency of LLMs will be crucial for their scalability. Research into more energy-efficient models and methods that reduce computational burden can make these tools accessible to more users around the world, thus democratizing the benefits of ai.

For more articles like this, explore our blog section today!