In a significant development, Alibaba has successfully addressed the long-standing challenge of integrating coherent and readable text into images with the introduction of AnyText. This state-of-the-art framework for multilingual visual text generation and editing marks a notable advance in the field of text-to-image synthesis. Let's delve into the intricacies of AnyText, exploring its methodology, core components, and practical applications.

Alibaba AnyText Core Components

- Diffusion-based architecture: AnyText is built on a diffusion-based architecture that consists of two main modules: the auxiliary latent module and the text embedding module.

- Auxiliary latent module: The auxiliary latent module takes text glyphs, text positions, and the masked image as inputs and fuses them into the latent features used for text generation or editing. Combining these inputs in latent space gives the model a solid foundation for rendering the text visually.

- Text embedding module: The text embedding module uses an optical character recognition (OCR) model to encode stroke data into embeddings. These embeddings are combined with the image caption embeddings from the tokenizer, so that the generated text blends seamlessly with the background. This approach helps keep the integrated text accurate and consistent.

- Text-control diffusion pipeline: At the core of AnyText is the text-control diffusion pipeline, which is what integrates high-fidelity text into images. During training, this pipeline combines a diffusion loss with a text perceptual loss to improve the accuracy of the generated text. The result is text that looks natural and fits the context of the image.
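
The training objective described in the last bullet can be pictured as a weighted sum of two terms. The snippet below is only a minimal sketch of that idea in plain Python, not AnyText's actual implementation: the function names, the use of mean squared error for both terms, and the `perceptual_weight` value are all illustrative assumptions, and the real model operates on image tensors and OCR feature maps rather than short lists of numbers.

```python
def mean_squared_error(predicted, target):
    """Mean squared error between two equal-length sequences of floats."""
    if len(predicted) != len(target):
        raise ValueError("inputs must have the same length")
    if not predicted:
        raise ValueError("inputs must not be empty")
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(predicted)


def combined_training_loss(noise_pred, noise_true,
                           text_feat_pred, text_feat_true,
                           perceptual_weight=0.01):
    """Weighted sum of a diffusion term and a text perceptual term.

    Assumptions (not taken from the article):
    - the diffusion term compares predicted noise with the true noise;
    - the text perceptual term compares OCR-style features of the
      generated text region with those of the reference text region;
    - perceptual_weight is a placeholder balancing factor.
    """
    diffusion_loss = mean_squared_error(noise_pred, noise_true)
    text_perceptual_loss = mean_squared_error(text_feat_pred, text_feat_true)
    return diffusion_loss + perceptual_weight * text_perceptual_loss


if __name__ == "__main__":
    # Toy values, chosen only to show how the two terms are combined.
    loss = combined_training_loss(
        noise_pred=[0.0, 0.0], noise_true=[1.0, 1.0],
        text_feat_pred=[0.0, 0.0], text_feat_true=[2.0, 2.0],
        perceptual_weight=0.5,
    )
    print(loss)
```

The point of the second term is that a purely pixel-level or noise-level loss can be small even when individual strokes are wrong, so a term computed on text-recognition features pushes the model toward legible characters.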

AnyText Multilingual Capabilities

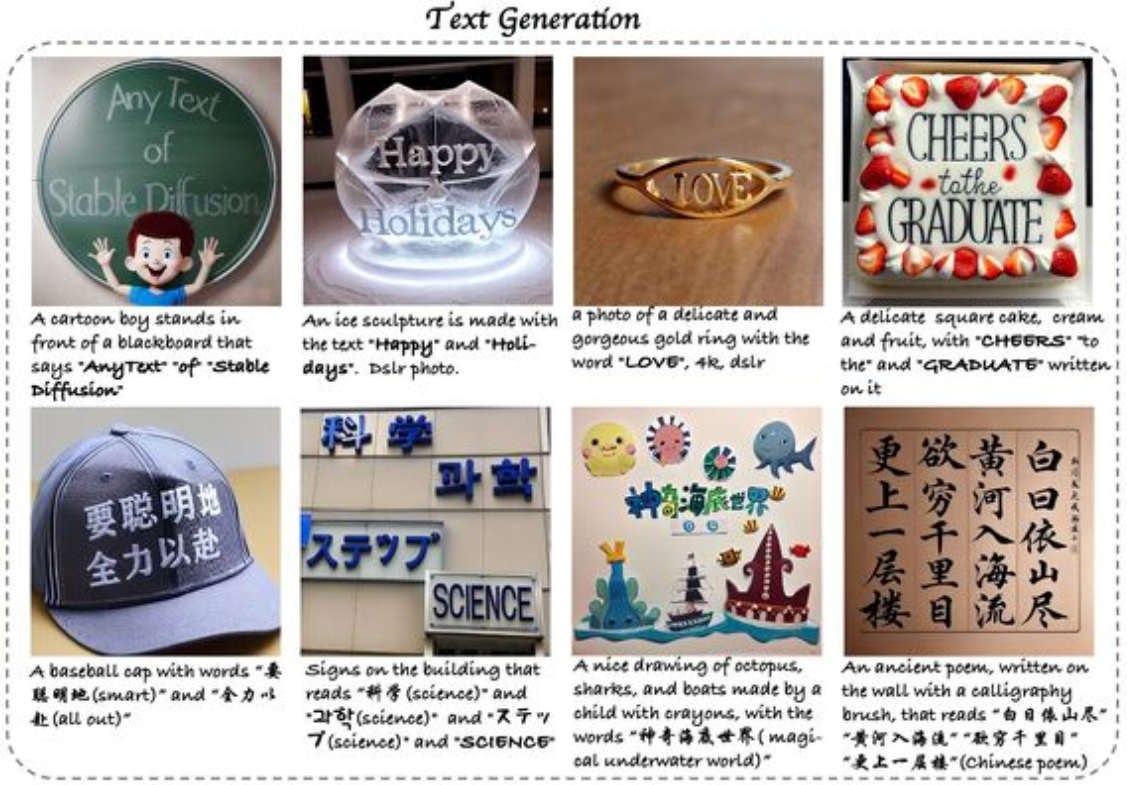

A notable feature of AnyText is its ability to render characters in multiple languages; its authors describe it as the first framework to address multilingual visual text generation. The model supports Chinese, English, Japanese, Korean, Arabic, Bengali, and Hindi, offering users a wide range of language options.

Practical applications and results

AnyText's versatility extends beyond basic text addition. It can imitate various text materials, including chalk characters on a blackboard and traditional calligraphy. In the reported evaluation, the model rendered both Chinese and English text more accurately than ControlNet and achieved a significantly lower FID (Fréchet Inception Distance) score, where a lower score means the generated images are statistically closer to real ones.
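
For readers unfamiliar with the metric, FID is conventionally defined as the distance between two Gaussians fitted to image features, one for real images and one for generated images. The formula below is the standard definition, given here only for orientation; the article does not say which feature extractor or implementation was used in the AnyText evaluation.

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\left(\Sigma_r + \Sigma_g - 2\left(\Sigma_r \Sigma_g\right)^{1/2}\right)
$$

Here $\mu_r, \Sigma_r$ are the mean and covariance of the features of real images, and $\mu_g, \Sigma_g$ are those of generated images. Identical distributions give a score of 0.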

Our opinion

Alibaba's AnyText emerges as a game-changer in the field of text-to-image synthesis. Its ability to integrate text seamlessly into images in multiple languages, along with its versatile applications, positions it as a powerful tool for visual storytelling. The framework is open source and available on GitHub, which further encourages collaboration and development in the fast-moving field of visual text generation. AnyText points toward a new era in multilingual visual text editing, with room for richer visual storytelling and creative expression.