In the world of automatic learning, we become obsessed with model architectures, training pipes and hyperparaméter adjustment, but we often overlook a fundamental aspect: how our characteristics live and breathe throughout their life cycle. From calculations in memory that disappear after each prediction to the challenge of reproducing the values of exact characteristics months later, the way we handle the characteristics can make or break the reliability and scalability of our ML systems.

Who should read this

- ML engineers who evaluate their feature management approach

- Data scientists who experience bias problems serve for training

- Small technicians who plan to climb their ML operations

- Teams that consider the implementation of the functions store

Starting point: the invisible approach

Many ML teams, especially those in their early stages or without dedicated ML engineers, begin with what I call “the invisible approach” to present engineering. It is misleading: obtaining without processing, transforming them into memory and creating features on the fly. The resulting data set, although functional, is essentially a black box of short -term calculations, characteristics that exist only for a moment before disappearing after each prediction or training execution.

While this approach may seem to do the job, it is based on unstable terrain. As the teams climb their ML operations, the models that served brilliantly in the tests suddenly behave unpredictably in production. The characteristics that worked perfectly during training mysteriously produce different values in live inference. When interested parties ask why a specific prediction was made last month, the teams cannot reconstruct the values of exact characteristics that led to that decision.

Central Challenges in Characteristics Engineering

These weak points are not exclusive to any team; They represent fundamental challenges that each growing ML team finally faces.

- Observability

Without materialized characteristics, purification becomes a detective mission. Imagine trying to understand why a model made a specific prediction months ago, just to discover that the characteristics behind that decision have disappeared a long time ago. The observability of the characteristics also allows continuous monitoring, which allows the equipment to detect the deterioration or worrying trends in their distributions of characteristics over time. - Point correction in time

When the characteristics used in training do not match those generated during inference, which leads to notorious bias that serves training. It is not just about data precision, it is about ensuring that your model finds the same calculations of production characteristics as during training. - Reuse

Repeatedly calculate the same characteristics in different models becomes increasingly wasteful. When the calculations of characteristics involve heavy computational resources, this inefficiency is not just an inconvenience, it is a significant drainage for resources.

Evolution of solutions

Focus 1: Generation of characteristics at request

The simplest solution begins where many ML equipment begins: create features at the request for immediate use in the prediction. The unprocessed data flow through transformations to generate characteristics, which are used for inference, and only then, after the predictions are already performed, these are typically stored characteristics in parquet files. While this method is simple, with the equipment that often chooses parquet files because they are easy to create from memory data, it comes with limitations. The approach partially solves observability since the characteristics are saved, but the analysis of these characteristics later becomes challenging: the consultation of data in multiple parquet files requires specific tools and a careful organization of its saved files.

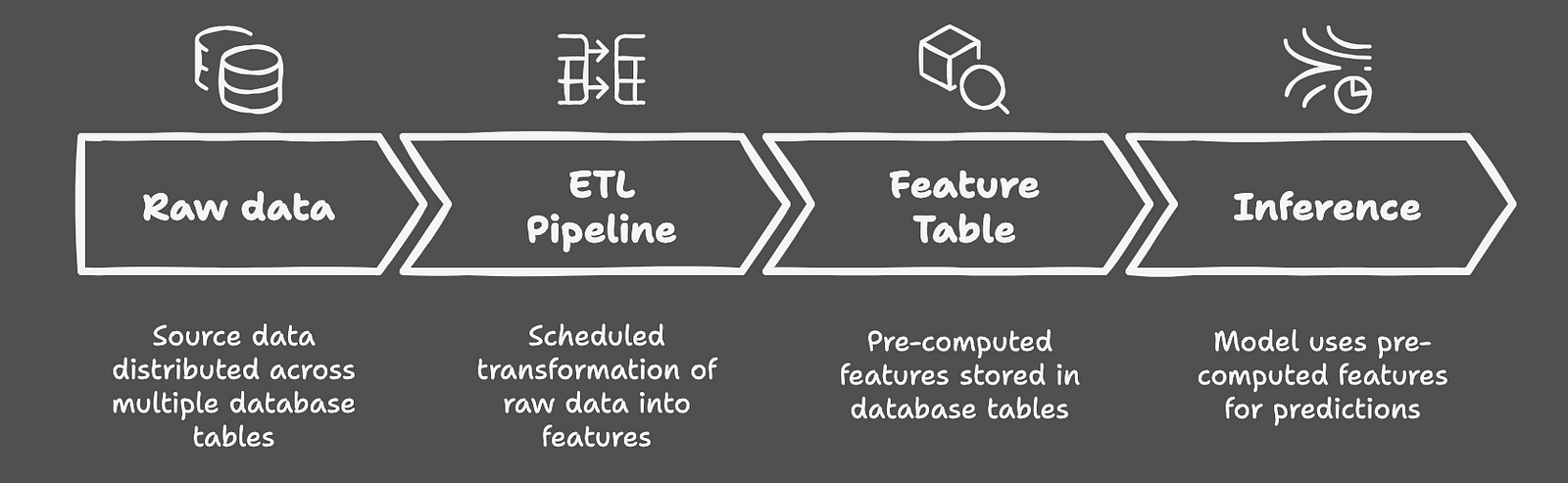

Focus 2: Materialization of the characteristics table

As the equipment evolves, many go to what is commonly discussed online as an alternative to complete features stores: materialization of the characteristics table. This approach takes advantage of the existing data warehouse infrastructure to transform and store the characteristics before they are necessary. Think about it as a central repository where the characteristics are consistently calculated through established ETL pipes, then they are used for both training and inference. This solution elegantly addresses the correction and observability of the point in time: its characteristics are always available for inspection and are constantly generated. However, it shows its limitations when dealing with the evolution of functions. As its model ecosystem grows, adding new features, modifying existing ones or administering different versions becomes increasingly complex, especially due to the restrictions imposed by the evolution of the database scheme.

APPROACH 3: Feature store

At the end of the spectrum is the functions store, usually part of an integral ML platform. These solutions offer the complete package: characteristics versions, efficient online/offline and perfect integration with broader ML flows. They are the equivalent of a well -greased machine, solving our central challenges in an integral way. The characteristics are controlled by version, easily observable and inherently reusable in all models. However, this power has a significant cost: technological complexity, resource requirements and the need for dedicated engineering ML.

Make the right decision

Contrary to what ML's blog posts could suggest, not all teams need a functions store. In my experience, the materialization of the characteristics table often provides the optimal point, especially when your organization already has a robust ETL infrastructure. The key is to understand your specific needs: if you are managing multiple models that frequently share and modify the characteristics, a functions store could use the investment. But for equipment with limited model interdependence or those that still establish their ML practices, the simplest solutions often provide a better return on investment. Of course, you could Stay with the generation of characteristics at request: If the debugging career conditions at 2 am is your idea of a good moment.

The decision is finally reduced to maturity, the availability of resources and the specific use cases of your equipment. Function stores are powerful tools, but like any sophisticated solution, they require significant investment in both human capital and infrastructure. Sometimes, the pragmatic route of the materialization of the table of features, despite its limitations, offers the best balance of capacity and complexity.

Remember: the success in the management of ML characteristics is not about choosing the most sophisticated solution, but to find the adequate adjustment for the needs and abilities of your team. The key is to honestly evaluate your needs, understand your limitations and choose a route that allows your equipment to build reliable, observable and maintainable ML systems.

NEWSLETTER

NEWSLETTER