Knowledge graphs (KGs) are structured representations of facts consisting of entities and relationships between them. These graphs have become fundamental in artificial intelligence, natural language processing, and recommendation systems. By organizing data in this structured way, knowledge graphs enable machines to understand and reason about the world more efficiently. This reasoning ability is crucial for predicting missing facts or making inferences based on existing knowledge. KGs are employed in applications ranging from search engines to virtual assistants, where the ability to draw logical conclusions from interconnected data is vital.

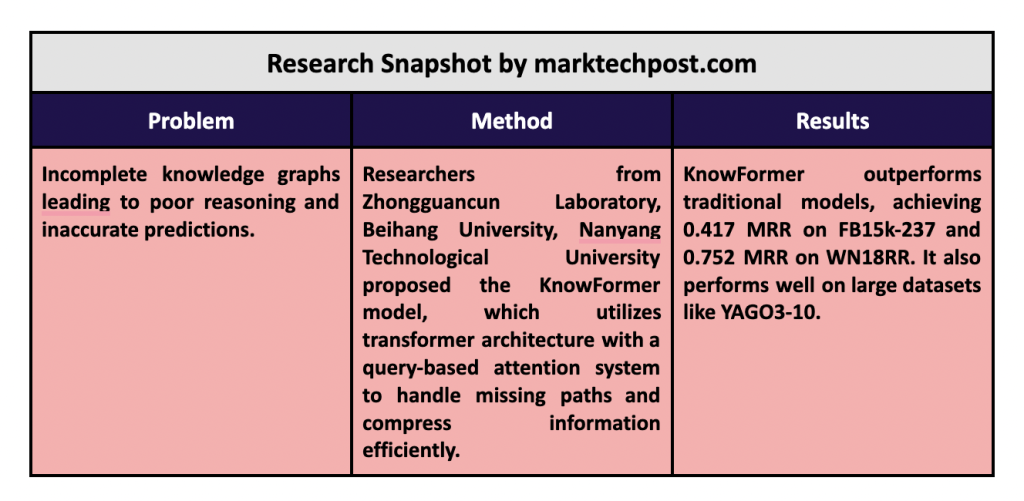

One of the main challenges of knowledge graphs is that they are often incomplete. Many real-world knowledge graphs lack important relationships, making it difficult for systems to infer new facts or generate accurate predictions. These information gaps hinder the overall reasoning process, and traditional methods often struggle to address this problem. Path-based methods, which attempt to infer missing facts by examining the shortest paths between entities, are especially vulnerable to incomplete or oversimplified paths. Furthermore, these methods often face the problem of “information over-compression,” where too much information is compressed into too few connections, leading to inaccurate results.
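To make the weakness of path-based reasoning concrete, here is a minimal sketch of a shortest-path search over a toy set of triples. The entity names, the relation names, and the `shortest_path` helper are all made up for illustration; they do not come from any particular path-based system.

```python
from collections import deque

def shortest_path(edges, start, goal):
    """Breadth-first search over (head, relation, tail) triples.

    Returns the list of triples on a shortest path, or None if no path exists.
    """
    graph = {}
    for head, relation, tail in edges:
        graph.setdefault(head, []).append((relation, tail))
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relation, nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [(node, relation, nxt)]))
    return None

# Toy graph (hypothetical facts used only for this example).
complete = [
    ("Alice", "born_in", "Lyon"),
    ("Lyon", "located_in", "France"),
]
# The same graph with one fact missing, as often happens in real KGs.
incomplete = [("Alice", "born_in", "Lyon")]

path_complete = shortest_path(complete, "Alice", "France")
path_incomplete = shortest_path(incomplete, "Alice", "France")
print(path_complete)    # a two-hop path linking Alice to France
print(path_incomplete)  # None: one missing edge removes all evidence
```

With a single triple missing, the search returns nothing at all, so a method that relies only on such paths has no signal left to reason from.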

Current approaches to these problems include embedding-based methods, which map the entities and relations of a knowledge graph into a low-dimensional vector space. These techniques, such as TransE, DistMult, and RotatE, have successfully preserved the structure of knowledge graphs and enabled reasoning. However, embedding-based models have limitations. They often fail in inductive scenarios where new, unseen entities or relations must be reasoned about, as they cannot effectively leverage local structures within the graph. Path-based methods, such as those proposed in DRUM and CompGCN, focus on extracting relevant paths between entities. However, they also struggle with missing or incomplete paths and the aforementioned problem of information over-compression.
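As a rough illustration of how embedding-based models score a candidate fact, the sketch below implements the commonly cited scoring functions of TransE, DistMult, and RotatE in plain Python. The toy embeddings are invented for this example, and real implementations learn them by training and typically add details such as margins and normalization that are omitted here.

```python
import cmath
import math

def transe_score(h, r, t):
    """TransE: a fact is plausible when h + r lands close to t."""
    return -math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))

def distmult_score(h, r, t):
    """DistMult: trilinear product, the sum over i of h_i * r_i * t_i."""
    return sum(hi * ri * ti for hi, ri, ti in zip(h, r, t))

def rotate_score(h, phases, t):
    """RotatE: the relation rotates each complex coordinate of h by a phase."""
    rotated = [hi * cmath.exp(1j * p) for hi, p in zip(h, phases)]
    return -sum(abs(ri - ti) for ri, ti in zip(rotated, t))

# Toy 2-dimensional embeddings (made-up values, not trained).
paris = [1.0, 0.0]
capital_of = [0.0, 1.0]
france = [1.0, 1.0]
germany = [3.0, -2.0]

# Higher score means "more plausible" for all three functions.
print(transe_score(paris, capital_of, france))
print(transe_score(paris, capital_of, germany))
```

Every score depends on a stored vector for each entity, which is why an entity that never appeared during training cannot be scored without retraining.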

Researchers from Zhongguancun Laboratory, Beihang University, and Nanyang Technological University presented a new model, KnowFormer, which uses the Transformer architecture to improve knowledge graph reasoning. This model shifts the focus from traditional path- and embedding-based methods to a structure-aware approach. KnowFormer leverages the Transformer’s self-attention mechanism, which allows it to analyze relationships between any pair of entities within a knowledge graph. This architecture helps address the limitations of path-based models, allowing the model to perform reasoning even when paths are missing or incomplete. By using a query-based attention system, KnowFormer calculates attention scores between entity pairs based on their connection plausibility, offering a more flexible and efficient way to infer missing facts.
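The sketch below shows generic scaled dot-product self-attention over a handful of entity vectors, to illustrate why attention can relate any pair of entities regardless of whether a path connects them. It is not KnowFormer's actual formulation: the entity vectors are made up, and the same vectors are reused as queries, keys, and values, whereas a real Transformer applies learned projections.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, attention weights)."""
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        all_weights.append(w)
        outputs.append(
            [sum(wj * v[c] for wj, v in zip(w, values)) for c in range(len(values[0]))]
        )
    return outputs, all_weights

# Hypothetical entity representations; no edges are needed to compare them.
entities = {"Paris": [1.0, 0.0], "France": [0.9, 0.1], "Tokyo": [0.0, 1.0]}
names = list(entities)
vectors = [entities[n] for n in names]

outputs, weights = self_attention(vectors, vectors, vectors)
for name, row in zip(names, weights):
    print(name, [round(w, 3) for w in row])
```

Each row of `weights` is a distribution over all entities, so every entity receives information from every other one in a single step rather than through a chain of edges.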

The KnowFormer model incorporates a query function and a value function to generate informative representations of entities. The query function helps the model identify relevant entity pairs by analyzing the structure of the knowledge graph, while the value function encodes the structural information required for accurate reasoning. This dual-function mechanism enables KnowFormer to handle the complexity of large-scale knowledge graphs. To improve scalability, the researchers also introduced an approximation method, so that KnowFormer can process knowledge graphs with millions of facts while maintaining low time complexity, including large datasets such as FB15k-237 and YAGO3-10.
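The article does not describe the approximation method itself. One widely used general technique for avoiding the quadratic cost of attention is kernelized (linear) attention, which replaces the softmax with a positive feature map so that the sum over keys is computed once and reused for every query. The sketch below illustrates that general idea only; the `elu(x) + 1` feature map and all function names are assumptions taken from the linear-attention literature, not from KnowFormer.

```python
import math

def phi(x):
    """Positive feature map elu(x) + 1 (an assumed choice for this sketch)."""
    return [xi + 1.0 if xi > 0 else math.exp(xi) for xi in x]

def quadratic_kernel_attention(Q, K, V):
    """Reference version: compares every query with every key, O(n^2)."""
    out = []
    for q in Q:
        fq = phi(q)
        w = [sum(a * b for a, b in zip(fq, phi(k))) for k in K]
        z = sum(w)
        out.append([sum(wj * v[c] for wj, v in zip(w, V)) / z for c in range(len(V[0]))])
    return out

def linear_kernel_attention(Q, K, V):
    """Same result, but keys and values are summarized once, O(n)."""
    d, dv = len(K[0]), len(V[0])
    kv = [[0.0] * dv for _ in range(d)]  # sum over j of phi(k_j) v_j^T
    ksum = [0.0] * d                     # sum over j of phi(k_j)
    for k, v in zip(K, V):
        fk = phi(k)
        for a in range(d):
            ksum[a] += fk[a]
            for c in range(dv):
                kv[a][c] += fk[a] * v[c]
    out = []
    for q in Q:
        fq = phi(q)
        z = sum(fq[a] * ksum[a] for a in range(d))
        out.append([sum(fq[a] * kv[a][c] for a in range(d)) / z for c in range(dv)])
    return out

# Made-up inputs: three entities with 2-dimensional queries, keys, and values.
Q = [[0.5, -1.0], [1.5, 0.2], [-0.3, 0.8]]
K = [[1.0, 0.0], [-0.5, 0.5], [0.2, -0.7]]
V = [[1.0, 2.0], [0.0, 1.0], [3.0, -1.0]]

slow = quadratic_kernel_attention(Q, K, V)
fast = linear_kernel_attention(Q, K, V)
print(slow)
print(fast)
```

Both functions compute the same quantity; the second simply reorders the sums so that the per-query work no longer grows with the number of entities.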

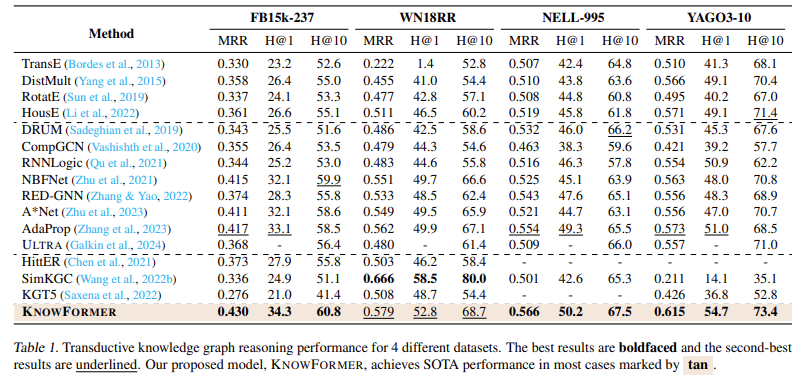

In terms of performance, KnowFormer delivered strong results across a variety of benchmarks. On the FB15k-237 dataset, for example, the model achieved a mean reciprocal rank (MRR) of 0.417, significantly outperforming other models such as TransE (MRR: 0.333) and DistMult (MRR: 0.330). Similarly, on the WN18RR dataset, KnowFormer achieved an MRR of 0.752, outperforming baseline methods such as DRUM and SimKGC. The model also performed well on the YAGO3-10 dataset, where it recorded a Hits@10 score of 73.4%, ahead of leading models in the field. KnowFormer performed strongly on inductive reasoning tasks as well, achieving an MRR of 0.827 on the NELL-995 dataset, well above the scores of existing methods.
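For readers unfamiliar with the metrics quoted above, the following minimal sketch computes MRR and Hits@k from a list of ranks. Each rank is the position of the correct entity among all candidates for one test query; the example ranks are invented and are unrelated to the reported results.

```python
def mean_reciprocal_rank(ranks):
    """Average of 1/rank over all test queries (1.0 is a perfect score)."""
    return sum(1.0 / r for r in ranks) / len(ranks)

def hits_at_k(ranks, k):
    """Fraction of test queries whose correct answer is ranked in the top k."""
    return sum(1 for r in ranks if r <= k) / len(ranks)

# Hypothetical ranks of the correct answer for five test queries.
ranks = [1, 2, 4, 10, 50]

print(round(mean_reciprocal_rank(ranks), 3))  # 0.374
print(hits_at_k(ranks, 10))                   # 0.8
```

MRR rewards placing the correct answer near the very top, while Hits@10 only checks whether it appears anywhere in the first ten candidates.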

In conclusion, by moving away from purely path-based and embedding-based methods, the researchers developed KnowFormer, a model that leverages the Transformer architecture to improve reasoning capabilities. KnowFormer’s attention mechanism, combined with its scalable design, makes it highly effective in addressing the problems of missing paths and information over-compression. With superior performance on multiple datasets, including an MRR of 0.417 on FB15k-237 and an MRR of 0.752 on WN18RR, KnowFormer has established itself as a state-of-the-art model in knowledge graph reasoning. Its ability to handle both transductive and inductive reasoning tasks positions it as a strong tool for future AI and machine learning applications.

All credit for this research goes to the researchers of this project.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary engineer and entrepreneur, Asif is committed to harnessing the potential of AI for social good. His most recent initiative is the launch of an AI media platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is technically sound and easily understandable to a wide audience. The platform has over 2 million monthly views, illustrating its popularity among the public.
