Large language models (LLMs) have changed the way people work. With models such as the GPT family in wide use, many people have grown accustomed to them. With an LLM, we can quickly get answers to our questions, debug code, and more, which makes these models useful in many applications.

One challenge is that LLMs are poorly suited to streaming applications: they cannot handle long conversations that exceed the sequence length they were trained on. Memory consumption also increases as the conversation grows.

These problems have prompted research into solving them. What is this research? Let’s get into it.

StreamingLLM is a framework introduced by Xiao et al. (2023) to address the problems of streaming applications. Existing methods struggle because the attention window constrains LLMs during pre-training.

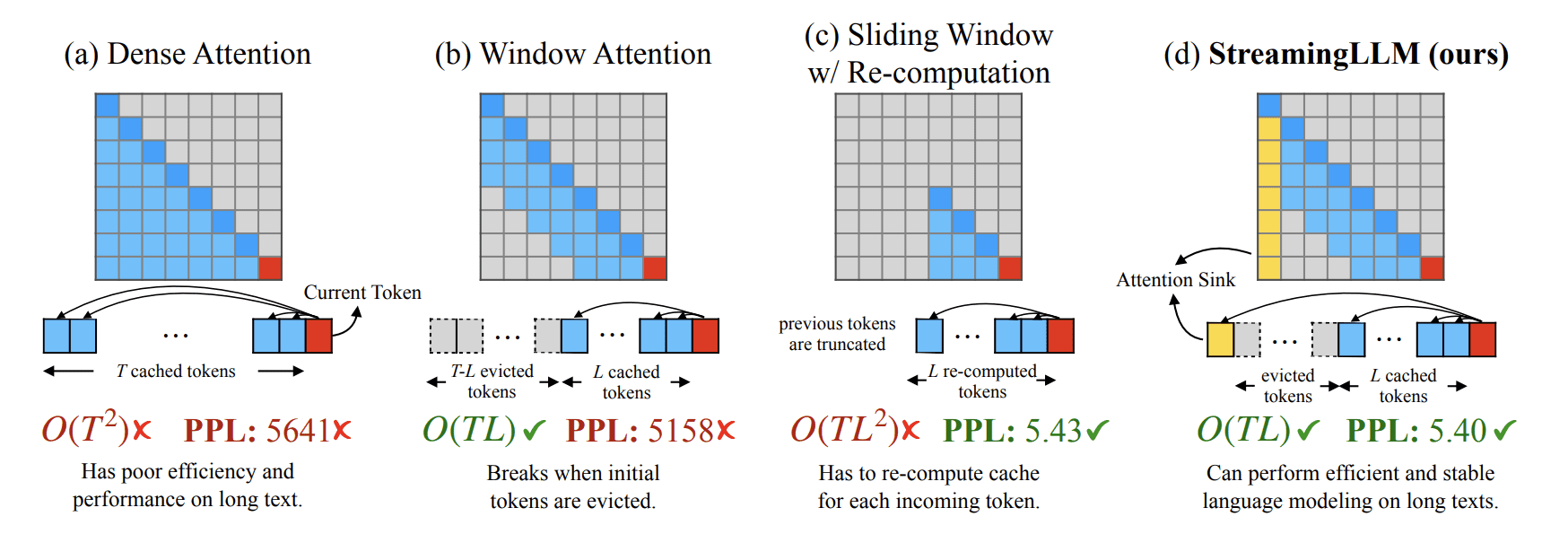

The window attention technique can be efficient, but it breaks down on texts longer than its cache size. That is why the researchers keep the Key and Value states of a few initial tokens (the attention sink) together with those of the recent tokens. The comparison of StreamingLLM with the other techniques can be seen in the image below.

StreamingLLM vs. existing methods (Xiao et al. (2023))

We can see how StreamingLLM addresses the challenge with the attention sink method. The attention sink (the initial tokens) keeps the attention computation stable, and combining it with the recent tokens lets the model stay efficient and maintain stable performance on longer texts.
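To make the idea concrete, here is a minimal sketch of which token positions would stay in the cache under an attention-sink policy. The function name `kept_positions` and the sizes `n_sink=4` and `n_recent=8` are illustrative assumptions, not values or code taken from the StreamingLLM repository.

```python
def kept_positions(seq_len, n_sink=4, n_recent=8):
    """Return the token positions whose key/value states stay cached.

    n_sink and n_recent are illustrative values chosen for this sketch.
    """
    # Short inputs fit entirely in the cache, so nothing is dropped.
    if seq_len <= n_sink + n_recent:
        return list(range(seq_len))
    sinks = list(range(n_sink))                         # initial tokens (attention sink)
    recent = list(range(seq_len - n_recent, seq_len))   # most recent tokens
    return sinks + recent


print(kept_positions(20))
# [0, 1, 2, 3, 12, 13, 14, 15, 16, 17, 18, 19]
```

For a 20-token text, positions 4 to 11 are dropped: the model still sees the first few tokens and the latest ones, but nothing in between.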

Furthermore, existing methods suffer from poor memory efficiency. StreamingLLM avoids this problem by maintaining a fixed-size window over the key and value states of the most recent tokens. The authors also report that StreamingLLM outperforms the sliding window recomputation baseline with up to 22.2x speedup.
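The fixed-size behavior can be sketched as a small rolling cache that evicts the oldest non-sink entries as new tokens arrive. This is a toy illustration under stated assumptions: the class `SinkCache` and its sizes are hypothetical, and integers stand in for the real key/value tensors.

```python
class SinkCache:
    """Toy cache: keeps the first n_sink entries plus the last n_recent entries."""

    def __init__(self, n_sink=4, n_recent=8):
        self.n_sink = n_sink        # illustrative size, not a repository default
        self.n_recent = n_recent    # illustrative size, not a repository default
        self.entries = []

    def append(self, kv):
        self.entries.append(kv)
        overflow = len(self.entries) - (self.n_sink + self.n_recent)
        if overflow > 0:
            # Evict the oldest entries that sit right after the sink tokens.
            del self.entries[self.n_sink:self.n_sink + overflow]


cache = SinkCache()
sizes = []
for token_id in range(1000):        # integers stand in for key/value states
    cache.append(token_id)
    sizes.append(len(cache.entries))

print(max(sizes))      # 12
print(cache.entries)   # [0, 1, 2, 3, 992, 993, 994, 995, 996, 997, 998, 999]
```

However long the stream runs, the cache never holds more than `n_sink + n_recent` entries, which is why memory use stays flat. The sketch does not model how the real method handles positional information inside the cache.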

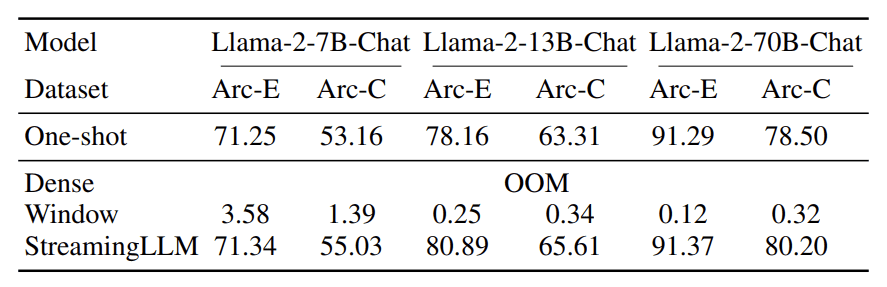

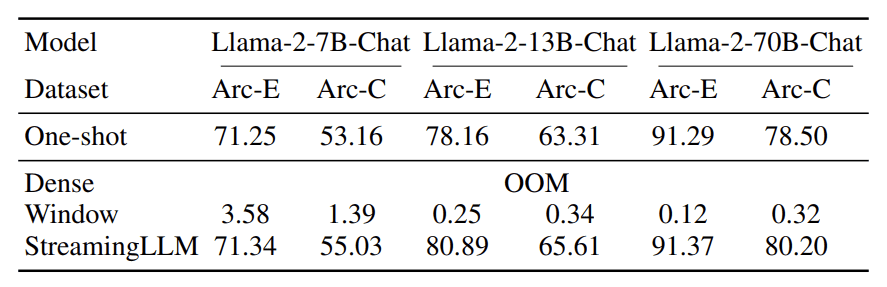

In terms of performance, StreamingLLM provides excellent accuracy compared to the existing methods, as seen in the table below.

StreamingLLM accuracy (Xiao et al. (2023))

The above table shows that StreamingLLM can outperform the other methods in accuracy on the benchmark datasets. That’s why StreamingLLM has potential for many streaming applications.

To try StreamingLLM, you can visit their GitHub page. Clone the repository to the desired directory and run the following commands in your CLI to set up the environment.

conda create -yn streaming python=3.8

conda activate streaming

pip install torch torchvision torchaudio

pip install transformers==4.33.0 accelerate datasets evaluate wandb scikit-learn scipy sentencepiece

python setup.py develop

Then, you can use the following command to run the Llama chatbot with StreamingLLM.

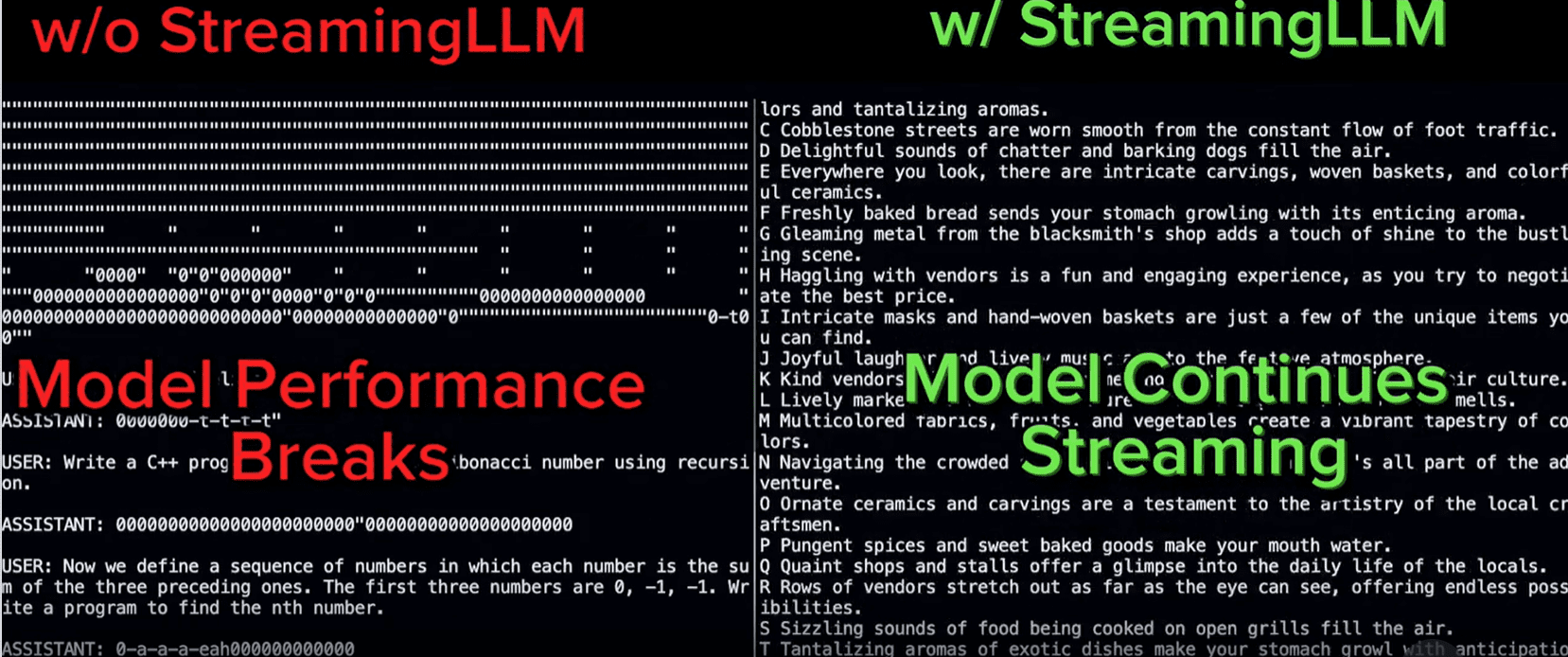

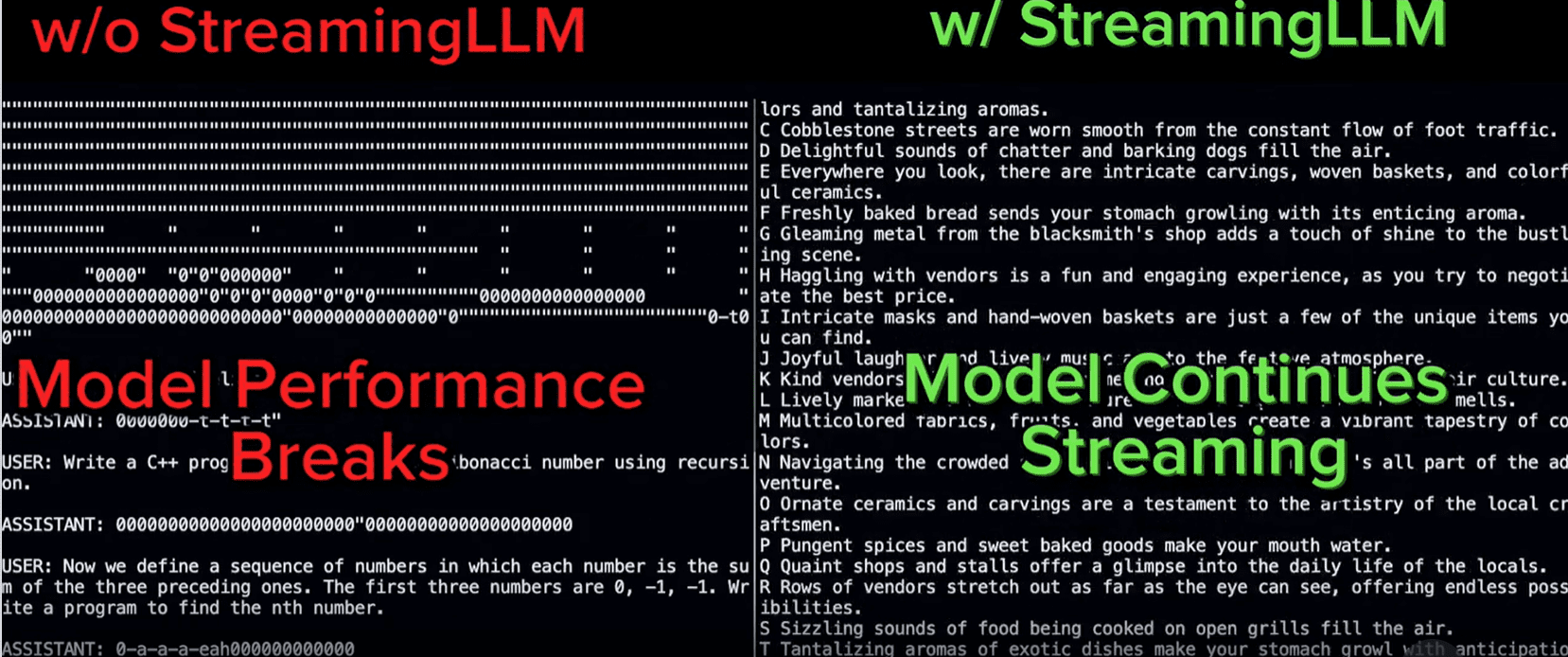

CUDA_VISIBLE_DEVICES=0 python examples/run_streaming_llama.py --enable_streaming

The overall sample comparison with StreamingLLM is shown in the image below.

StreamingLLM showed outstanding performance in longer conversations (Streaming-llm)

That’s all for the introduction of StreamingLLM. Overall, I think StreamingLLM can have a place in streaming applications and help change how they work in the future.

Having an LLM in streaming applications would help businesses in the long run; however, it is challenging to implement. Most LLMs cannot exceed the sequence length they were trained on, and they consume more memory as the text grows. Xiao et al. (2023) developed a new framework called StreamingLLM to handle these problems. With StreamingLLM, it is now possible to have an LLM up and running in a streaming application.

Cornellius Yudha Wijaya is a data science assistant manager and data writer. While working full-time at Allianz Indonesia, he loves sharing Python and data tips through social media and writing.