We live in an era where machine learning is at its peak. Decades ago, most people would never have heard of ChatGPT or artificial intelligence. Today, these are topics people keep talking about. Why? Because the value they deliver is significant compared to the effort required.

The advancement of AI in recent years can be attributed to many things, and one of them is the large language model (LLM). Many text generation AI products are powered by an LLM; for example, ChatGPT uses OpenAI's GPT model. Because LLMs are such an important subject, we should learn about them.

This article discusses large language models at three levels of difficulty, touching on only some aspects of LLMs. The levels differ only enough to let every reader understand what an LLM is. With that in mind, let's get into it.

At the first level, we assume the reader does not know about LLMs and may know a little about data science/machine learning. Therefore, I will briefly introduce AI and machine learning before moving on to LLMs.

Artificial intelligence is the science of developing intelligent computer programs. The aim is for the program to perform intelligent tasks that humans can perform, without the limitations of human biological needs. Machine learning is a field of artificial intelligence that studies how statistical algorithms can generalize from data. In a way, machine learning tries to achieve artificial intelligence by learning from data, so that the program can perform intelligent tasks without explicit instruction.

Historically, the field at the intersection of computer science and linguistics is called natural language processing (NLP). It covers any machine processing of human text, such as text documents. Previously, the field was limited to rule-based systems, but it broadened with the introduction of advanced semi-supervised and unsupervised algorithms that allow a model to learn without explicit direction. One of the advanced models that does this is the language model.

A language model is a probabilistic NLP model used for many human language tasks such as translation, grammar correction, and text generation. Older language models use purely statistical approaches such as the n-gram method, where the probability of the next word is assumed to depend only on a fixed number of previous words.
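To make the n-gram idea concrete, here is a minimal sketch of a bigram model (n = 2), where the next word depends only on the single previous word. The toy corpus and the function names `train_bigram` and `next_word_probs` are made up for illustration; a real model would be trained on far more text and would usually add smoothing for unseen word pairs.

```python
from collections import Counter, defaultdict


def train_bigram(tokens):
    """Count how often each word follows each previous word."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, prev):
    """Turn the raw follow-up counts for `prev` into probabilities."""
    total = sum(counts[prev].values())
    return {word: c / total for word, c in counts[prev].items()}


# Toy corpus (an assumption for this example only).
corpus = "the cat sat on the mat the cat ate".split()
model = train_bigram(corpus)

# "the" is followed by "cat" twice and "mat" once in the corpus.
probs = next_word_probs(model, "the")
print(probs)
```

In this toy corpus, "cat" gets two thirds of the probability mass after "the" and "mat" gets one third, which is exactly the kind of fixed-window statistic the n-gram method relies on.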

However, the introduction of neural networks has dethroned the previous approach. An artificial neural network (NN) is a computer program that imitates the neural structure of the human brain. The neural network approach works well because it can recognize complex patterns in text and handle sequential data such as text. This is why current language models are generally based on NNs.

Large language models, or LLMs, are machine learning models that learn from a large number of text documents to perform general-purpose language generation. They are still language models, but the large number of parameters learned by the NN is what makes them large. In simple terms, the model can represent how humans write by accurately predicting the next words from the given input words.

Examples of LLM tasks include language translation, automated chatbots, question answering, and many more. From any sequence of input data, the model can identify relationships between words and generate output appropriate to the instruction.

Almost all generative AI products that offer text generation are powered by LLMs. Big products like ChatGPT, Google's Bard, and many more use LLMs as the foundation of their product.

At this level, the reader has data science knowledge but needs to learn more about LLMs. At a minimum, the reader understands the terms used in the data field. Here we delve into the base architecture.

As explained above, an LLM is a neural network model trained on massive amounts of text data. To better understand this concept, it helps to understand how neural networks and deep learning work.

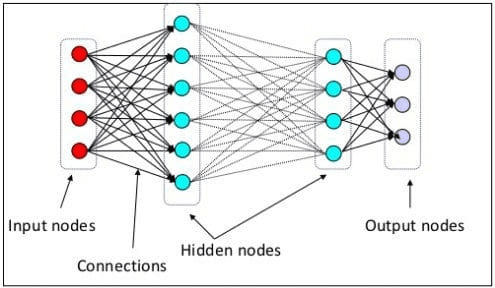

In the previous level we explained that a neural network is a model that imitates the neural structure of the human brain. The main elements of a neural network are neurons, often called nodes. To better explain the concept, check out the typical neural network architecture in the image below.

Neural network architecture (image source: KDnuggets)

As we can see in the image above, the neural network consists of three kinds of layers:

- Input layer, which receives the information and passes it to the nodes of the next layer.

- Hidden layers, where all the calculations are done.

- Output layer, which holds the computational outputs.

It is called deep learning when we train our neural network model with two or more hidden layers. It is called deep because it uses many intermediate layers. The advantage of deep learning models is that they automatically learn and extract features from data, which traditional machine learning models cannot do.
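The layer structure described above can be sketched as a small forward pass. This is only an illustration under several assumptions: the layer sizes, the `tanh` activation, and the random untrained weights are arbitrary choices for the example, and the helper names `dense`, `random_layer`, and `forward` are hypothetical.

```python
import math
import random


def dense(inputs, weights, biases):
    """One fully connected layer followed by a tanh activation."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def random_layer(n_in, n_out, rng):
    """Create random weights (n_out rows of n_in values) and zero biases."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


rng = random.Random(0)
# 3 input nodes, two hidden layers of 4 nodes each, 2 output nodes.
layer_sizes = [3, 4, 4, 2]
layers = [random_layer(a, b, rng) for a, b in zip(layer_sizes, layer_sizes[1:])]


def forward(x):
    """Pass the input through every layer in order."""
    for weights, biases in layers:
        x = dense(x, weights, biases)
    return x


output = forward([0.5, -0.2, 0.1])
print(output)
```

With two hidden layers, this toy network already counts as "deep" by the definition above. Training, which would adjust the weights to reduce prediction error, is left out of the sketch.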

In large language models, deep learning is important because the model is based on deep neural network architectures. So why is it called large? Because billions of parameters are trained on massive amounts of text data. These parameters help the model capture complex patterns in the language, including grammar, writing style, and many more.

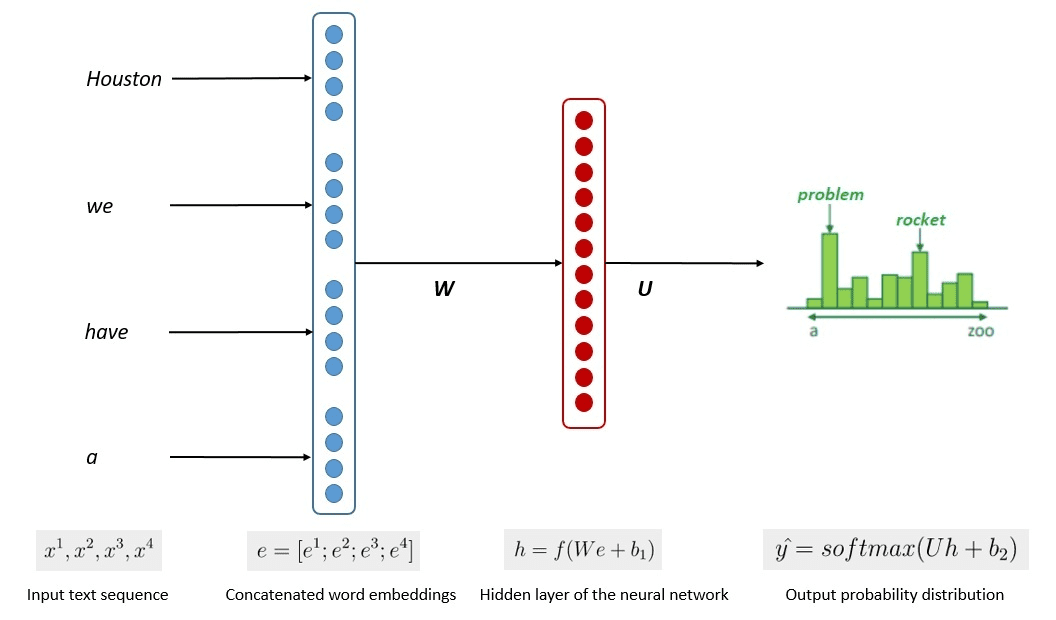

The simplified model training process is shown in the following image.

Image by Kumar Chandrakant (Source: Baeldung.com)

The process shows that the model can generate relevant text based on the probability of each word or sentence given the input data. In LLMs, the advanced approach uses self-supervised learning and semi-supervised learning to achieve general-purpose capability.

Self-supervised learning is a technique where we do not have labels and the training data itself provides the training signal. It is used in the LLM training process because the data typically lacks labels. In an LLM, the surrounding context is used as the cue to predict the next words. In contrast, semi-supervised learning combines supervised and unsupervised learning, using a small amount of labeled data to generate labels for a large amount of unlabeled data. Semi-supervised learning is generally used for LLMs with specific context or domain needs.
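As a rough illustration of how unlabeled text can supply its own training signal, the sketch below turns a raw sentence into (context, next word) pairs. The function name `make_training_pairs`, the whitespace tokenization, and the context size of three are assumptions made for the example, not how any particular LLM prepares its data.

```python
def make_training_pairs(text, context_size=3):
    """Build (context, target) pairs where the target is simply the next word."""
    tokens = text.split()
    pairs = []
    for i in range(context_size, len(tokens)):
        context = tokens[i - context_size:i]
        target = tokens[i]
        pairs.append((context, target))
    return pairs


pairs = make_training_pairs("the model learns to predict the next word")
for context, target in pairs:
    print(context, "->", target)
```

No human annotation is involved: every "label" is a word that was already present in the text, which is why this setup scales to very large corpora.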

At the third level, we discuss LLMs more deeply, especially the structure of an LLM and how it achieves human-like generation capability.

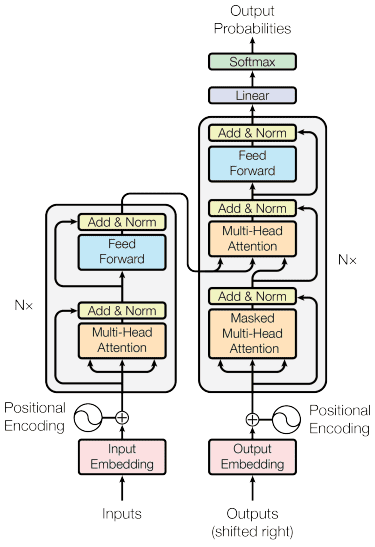

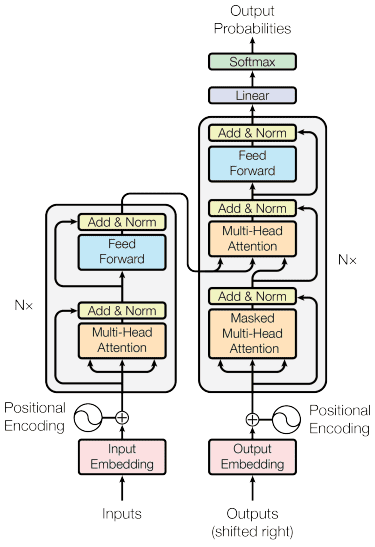

We have mentioned that LLMs are based on neural network models with deep learning techniques. In recent years, LLMs have generally been built on the transformer architecture. The transformer is based on the multi-head attention mechanism introduced by Vaswani et al. (2017) and has been used in many LLMs.

The transformer is a model architecture that attempts to solve the problems with sequential tasks previously encountered in RNNs and LSTMs. The old approach to language modeling was to use an RNN or LSTM to process data sequentially, feeding each output back into the model so that earlier words were not forgotten. However, these models have problems with long sequence data, which transformers were introduced to address.
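The sequential behavior of an RNN can be sketched with a single scalar hidden state. This is a deliberately tiny toy: the weights `w_h` and `w_x` are hypothetical fixed values rather than learned parameters, and real RNNs use vectors and weight matrices instead of single numbers.

```python
import math


def rnn_step(hidden, x, w_h=0.5, w_x=1.0):
    """Combine the previous hidden state with the current input."""
    return math.tanh(w_h * hidden + w_x * x)


def run_rnn(sequence):
    """Process the inputs strictly one after another."""
    hidden = 0.0
    states = []
    for x in sequence:
        hidden = rnn_step(hidden, x)
        states.append(hidden)
    return states


# Only the first input carries a signal; the rest are zeros.
states = run_rnn([1.0, 0.0, 0.0, 0.0])
print(states)
```

Each step has to wait for the previous one, and the trace of the first input shrinks with every later step. That fading memory is one way to picture why long sequences are hard for this family of models.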

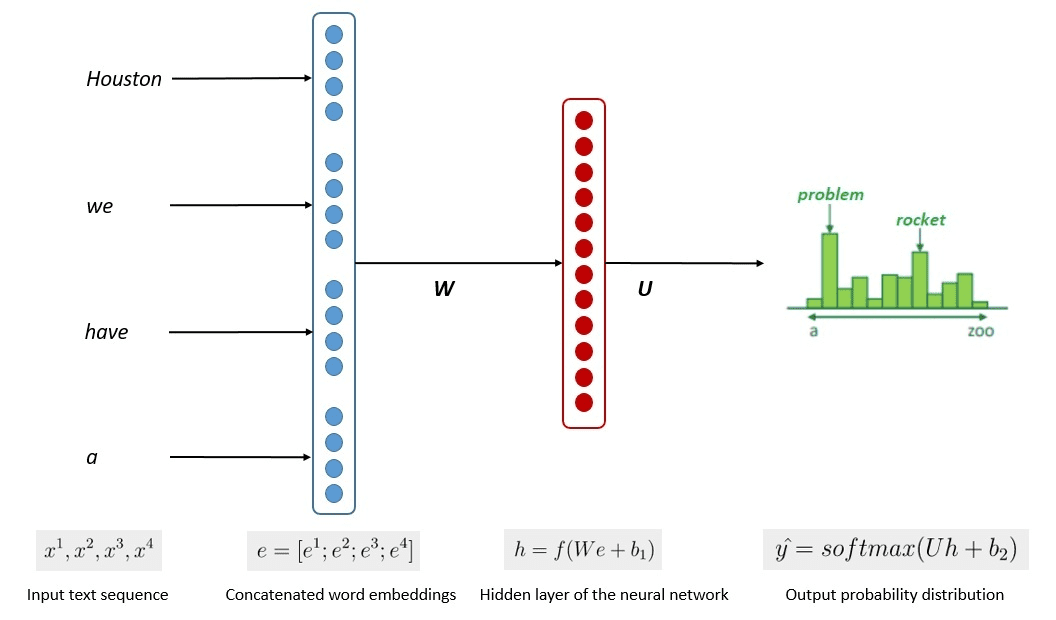

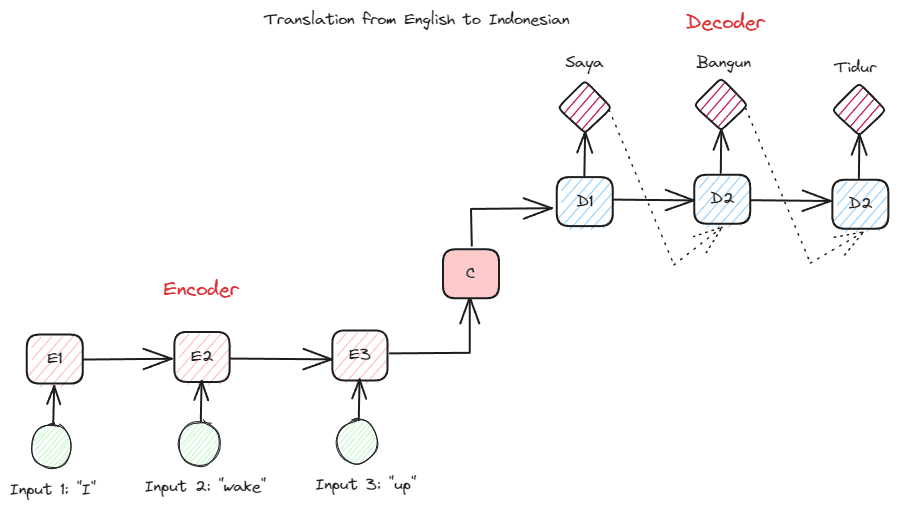

Before delving into transformers, I want to introduce the encoder-decoder concept that was previously used with RNNs. The encoder-decoder structure allows the input and output text to have different lengths. An example use case is language translation, where the source and target sentences often differ in length.

The structure can be divided into two parts. The first part is called the encoder, which receives a sequence of data and creates a new representation based on it. The representation is then used in the second part of the model, the decoder.

Image by author

The problem with RNNs is that the model can struggle to remember longer sequences, even with the above encoder-decoder structure. This is where the attention mechanism helps: it is a layer designed to address the long-input problem. The attention mechanism was introduced in the paper by Bahdanau et al. (2014) to improve encoder-decoder RNNs by letting the model focus on the important parts of the input while producing each output prediction.

The transformer structure is inspired by the encoder-decoder type and built with the attention mechanism, so it does not need to process data in sequential order. The general transformer model is structured like the image below.

Transformer architecture (Vaswani et al. (2017))

In the above structure, the transformer's encoder turns the input sequence into data vectors (word embeddings), while the decoder transforms the data back into the original format. With the attention mechanism, the encoder can assign different importance to different parts of the input.
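To show how attention assigns importance to the input, here is a minimal sketch of scaled dot-product attention, the building block described by Vaswani et al. (2017). It is simplified on purpose: a real transformer first projects the input into queries, keys, and values with learned weight matrices and runs several attention heads in parallel, whereas this example reuses the raw toy vectors for all three roles.

```python
import math


def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors (made up for this example).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(x, x, x)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` says how much one position attends to every other position. Because every position is compared with all others at once, nothing in this computation requires stepping through the sequence in order.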

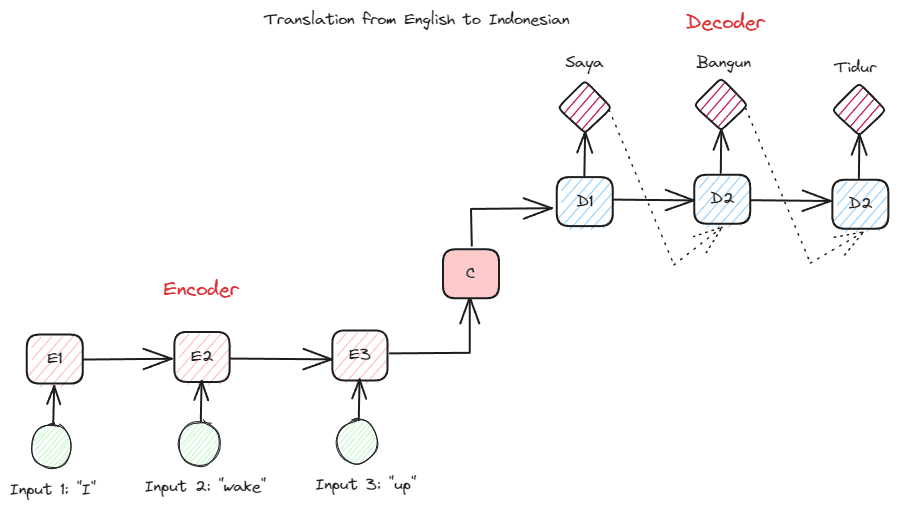

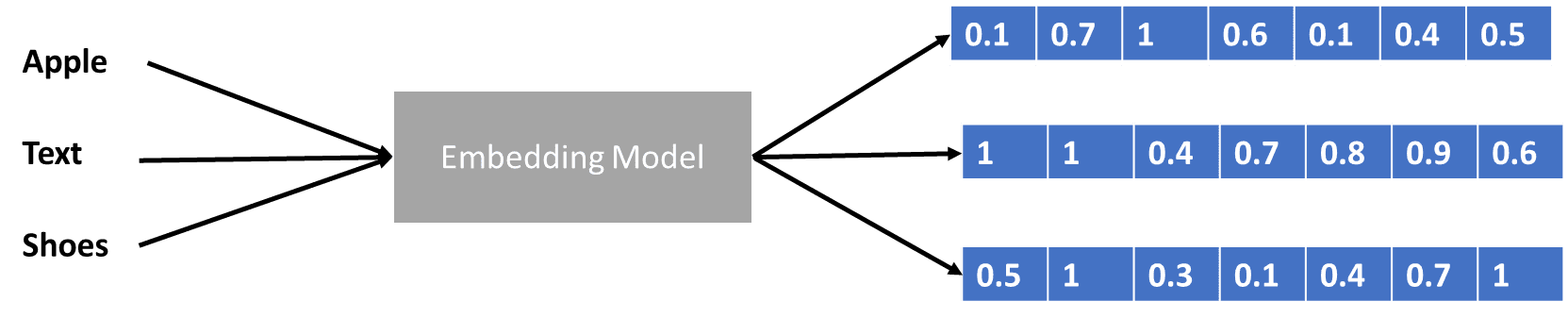

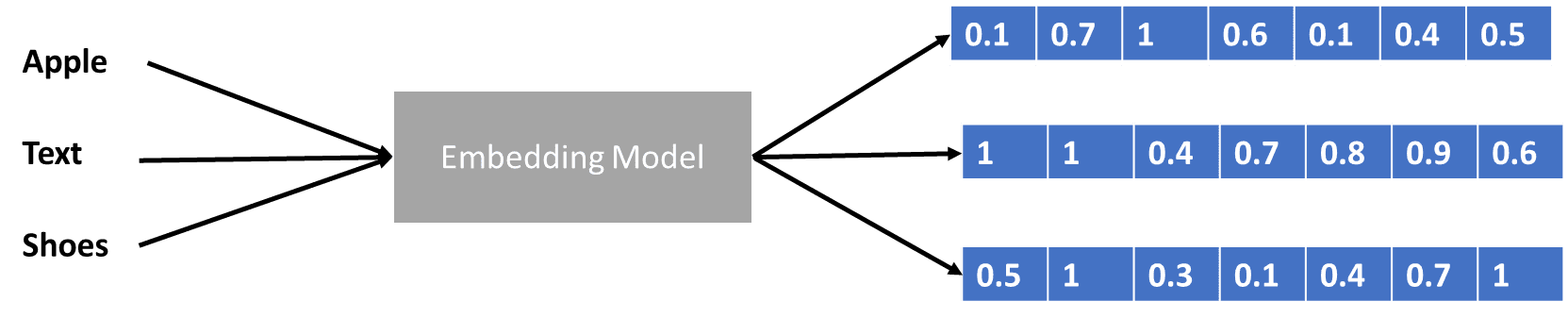

We've talked a bit about transformers encoding the data vector, but what is a data vector? Let's discuss it. In a machine learning model, we cannot feed raw natural language data into the model, so we need to transform it into numerical form. The transformation process is called word embedding, where each input word is processed through a word embedding model to obtain a data vector. We can start from many existing word embeddings, such as Word2Vec or GloVe, but many advanced users try to refine them using their own vocabulary. In basic terms, the word embedding process can be shown in the following image.

Image by author

By representing the words in numerical form like the data vector above, transformers can accept the input and provide more relevant context. In LLMs, word embeddings are typically context-dependent and are refined based on the use case and intended output.
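The idea of mapping words to data vectors can be sketched with a tiny lookup table. The three-dimensional vectors below are invented for illustration and do not come from Word2Vec, GloVe, or any trained model; real embeddings have hundreds of dimensions and are learned from data. The helper names `embed` and `cosine_similarity` are likewise hypothetical.

```python
import math

# Hand-written toy vectors (assumed values, not from a trained model).
embeddings = {
    "king": [0.8, 0.6, 0.1],
    "queen": [0.7, 0.7, 0.1],
    "apple": [0.1, 0.2, 0.9],
}


def embed(sentence):
    """Replace each known word with its vector; unknown words are skipped."""
    return [embeddings[w] for w in sentence.lower().split() if w in embeddings]


def cosine_similarity(a, b):
    """Measure how closely two vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


vectors = embed("King apple")
print(vectors)

sim_royal = cosine_similarity(embeddings["king"], embeddings["queen"])
sim_fruit = cosine_similarity(embeddings["king"], embeddings["apple"])
print(sim_royal > sim_fruit)
```

In this toy table, "king" and "queen" point in nearly the same direction while "apple" does not, which mirrors how trained embeddings place related words close together. A static table like this gives each word one fixed vector, unlike the context-dependent embeddings used inside LLMs.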

We discussed large language models at three levels of difficulty, from beginner to advanced. From the general use of LLMs to how they are structured, you can find an explanation that covers the concept in more detail.

Cornellius Yudha Wijaya is a data science assistant manager and data writer. While working full-time at Allianz Indonesia, he loves sharing Python and data tips through social media and print media.