This post is co-authored by Tristan Miller of Best Egg.

Best Egg is a leading financial confidence platform offering lending products and resources focused on helping people feel more confident as they manage their everyday finances. Since March 2014, Best Egg has delivered $22 billion in consumer personal loans with strong credit performance, welcomed almost 637,000 members to the recently launched Best Egg Financial Health platform, and empowered more than 180,000 cardholders who carry the new Best Egg credit card in their wallets.

Amazon SageMaker is a fully managed machine learning (ML) service that provides various tools for building, training, optimizing, and deploying ML models. SageMaker provides automated model tuning, which handles the undifferentiated heavy lifting of provisioning and managing the IT infrastructure to run multiple iterations and select the optimized model candidate from training.

To help you efficiently tune your required hyperparameters and determine the best-performing model, this post discusses how Best Egg used SageMaker hyperparameter tuning with warm pools and achieved a threefold improvement in model training time.

Use Case Overview

Credit risk analysts use credit scoring models when lending or offering a credit card to customers, taking a variety of user attributes into account. This statistical model generates a final score, or Good Bad Indicator (GBI), which determines whether a credit application is approved or rejected. ML insights facilitate this decision-making: to assess the risk of a credit application, ML models draw on various data sources to predict the risk that a customer becomes delinquent.

The challenge

A significant problem in the financial industry is that there is no universally accepted method or framework for handling the overwhelming variety of possibilities that must be considered at any given time. It’s hard to standardize the tools teams use to promote transparency and monitoring across the board. The application of ML can help those in the financial industry make better judgments regarding pricing, risk management, and consumer behavior. Data scientists train multiple ML algorithms to sift through millions of consumer data records, identify anomalies, and assess whether a person is eligible for credit.

SageMaker can run automated hyperparameter tuning based on multiple optimization techniques, such as grid, Bayesian, random, and Hyperband search. Automatic model tuning makes it easy to zero in on the optimal model settings, freeing up time and money for better use elsewhere in the financial industry. As part of hyperparameter tuning, SageMaker runs multiple iterations of the training code on the training dataset with various combinations of hyperparameters. SageMaker then determines the best candidate model with the optimal hyperparameters based on the configured objective metric.
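The loop described above (try many hyperparameter combinations, evaluate each against an objective metric, keep the best) can be sketched in plain Python. This is a minimal illustration of random search, one of the strategies listed, and not SageMaker's implementation; the search space, parameter names, and the toy objective standing in for a real training job are all hypothetical.

```python
import random
from typing import Callable, Dict, List, Tuple

# Hypothetical search space: each hyperparameter maps to a (low, high) range.
SearchSpace = Dict[str, Tuple[float, float]]


def random_search(
    objective: Callable[[Dict[str, float]], float],
    space: SearchSpace,
    n_trials: int,
    seed: int = 0,
) -> Tuple[Dict[str, float], float, List[Tuple[Dict[str, float], float]]]:
    """Sample n_trials configurations uniformly and keep the one that
    maximizes the objective metric (for example, a validation AUC)."""
    rng = random.Random(seed)
    history = []
    best_params, best_score = None, float("-inf")
    for _ in range(n_trials):
        # One "training job": draw a hyperparameter combination and evaluate it.
        params = {name: rng.uniform(lo, hi) for name, (lo, hi) in space.items()}
        score = objective(params)
        history.append((params, score))
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score, history


def toy_objective(p: Dict[str, float]) -> float:
    # Stand-in for a real training run; peaks at learning_rate=0.1, max_depth=6.
    # max_depth is treated as continuous here for simplicity.
    return -((p["learning_rate"] - 0.1) ** 2) - 0.01 * (p["max_depth"] - 6) ** 2


best, score, trials = random_search(
    toy_objective, {"learning_rate": (0.01, 0.3), "max_depth": (2, 10)}, n_trials=40
)
print(best, score)
```

A Bayesian strategy, which Best Egg uses, replaces the uniform sampling step with a model that proposes promising configurations based on earlier results; the evaluate-and-keep-best structure stays the same.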

Best Egg was able to automate hyperparameter tuning with SageMaker's Automated Hyperparameter Optimization (HPO) feature and parallelize it. However, each hyperparameter tuning job could take hours, and selecting the best candidate model required many hyperparameter tuning jobs over the course of several days. Hyperparameter tuning jobs could be slow because of the iterative tasks that HPO performs under the hood. Every time a training job starts, new resources are provisioned, which consumes a significant amount of time before training actually begins. This is a common problem data scientists face when training their models. Time efficiency was a major pain point because these long-running training jobs impeded productivity, leaving data scientists waiting on them for hours.

Solution Overview

The following diagram represents the different components used in this solution.

Best Egg's data science team uses Amazon SageMaker Studio to build and run Jupyter notebooks. SageMaker Processing jobs run feature engineering pipelines on the input dataset to generate features. Best Egg trains multiple credit models using classification and regression algorithms. Given the nature of their use cases, the data science team sometimes has to work with limited training data, on the order of tens of thousands of records. Best Egg runs SageMaker training jobs with automatic hyperparameter tuning using the Bayesian optimization strategy. To reduce variance, Best Egg uses k-fold cross-validation as part of its custom wrapper to evaluate the trained model.
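The idea behind k-fold cross-validation can be shown with a short, dependency-free sketch. It is not Best Egg's wrapper: the `fit_and_score` callback, the toy labels, and the mean-label "model" are hypothetical, and the folds are contiguous and unshuffled, whereas credit data would typically be shuffled and often stratified by class.

```python
from statistics import mean
from typing import Callable, List, Sequence, Tuple


def k_fold_indices(n: int, k: int) -> List[Tuple[List[int], List[int]]]:
    """Split range(n) into k contiguous folds; return (train, validation) index pairs."""
    if not 2 <= k <= n:
        raise ValueError("k must be between 2 and n")
    # Spread the remainder so fold sizes differ by at most one.
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    splits, start = [], 0
    for size in sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        splits.append((train, val))
        start += size
    return splits


def cross_validate(
    fit_and_score: Callable[[Sequence[int], Sequence[int]], float], n: int, k: int = 5
) -> Tuple[float, List[float]]:
    """Train on k-1 folds, score on the held-out fold, and average the k scores."""
    scores = [fit_and_score(train, val) for train, val in k_fold_indices(n, k)]
    return mean(scores), scores


labels = [0, 1, 0, 0, 1, 1, 0, 1, 0, 0]  # toy binary outcomes


def mean_baseline(train: Sequence[int], val: Sequence[int]) -> float:
    # "Model" = mean label of the training folds; score = negative MSE on the held-out fold.
    pred = mean(labels[i] for i in train)
    return -mean((labels[i] - pred) ** 2 for i in val)


avg, per_fold = cross_validate(mean_baseline, n=len(labels), k=5)
print(avg, per_fold)
```

Averaging over folds means every record is used for validation exactly once, which is what makes the estimate less sensitive to a single lucky or unlucky split when only tens of thousands of records are available.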

The trained model artifact is registered and versioned in the SageMaker Model Registry. Inference runs in two ways, real time and batch, depending on the user's requirements. The trained model artifact is hosted on a real-time SageMaker endpoint that uses built-in autoscaling and load balancing features. The model is also scored through daily scheduled batch transform jobs. The entire pipeline is orchestrated through Amazon SageMaker Pipelines and consists of a sequence of steps: a processing step for feature engineering, a tuning step for training and automatic model tuning, and a model step to register the artifact.

To address the core issue of long-running hyperparameter tuning jobs, Best Egg explored the recently released SageMaker managed warm pools feature. SageMaker managed warm pools let you retain and reuse provisioned infrastructure after a training job completes, reducing latency for repetitive workloads such as iterative experimentation or consecutively run jobs whose job-specific configuration parameters, such as instance type or count, match previous runs. This allowed Best Egg to reuse existing infrastructure for its repetitive training jobs without wasting time provisioning new infrastructure.
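The reuse rule ("configuration matches a previous run") can be pictured as an exact-match lookup over idle pools. The sketch below is only a mental model: the real service compares more attributes (the workflow below also mentions volume and network criteria), and the `ResourceConfig` fields, `acquire`, and `release` helpers here are hypothetical names, not SageMaker APIs.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ResourceConfig:
    """Hypothetical subset of the settings a warm pool must match."""
    instance_type: str
    instance_count: int
    volume_size_gb: int


@dataclass
class WarmPool:
    config: ResourceConfig
    in_use: bool = False


def acquire(pools: List[WarmPool], requested: ResourceConfig) -> Optional[WarmPool]:
    """Return an idle pool whose configuration matches exactly, else None (cold start)."""
    for pool in pools:
        if not pool.in_use and pool.config == requested:
            pool.in_use = True
            return pool
    return None


def release(pool: WarmPool) -> None:
    # The job finished: its instances go back to waiting for the next matching job.
    pool.in_use = False


pools = [WarmPool(ResourceConfig("ml.m5.xlarge", 1, 30))]
first = acquire(pools, ResourceConfig("ml.m5.xlarge", 1, 30))   # match: reuse warm instances
second = acquire(pools, ResourceConfig("ml.m5.xlarge", 1, 30))  # pool busy: cold start (None)
other = acquire(pools, ResourceConfig("ml.c5.xlarge", 1, 30))   # no match: cold start (None)
```

Using a frozen dataclass makes the "does this job match?" question a single equality check over every field, which mirrors the all-or-nothing matching described in the text.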

Deep Dive into Automatic Model Tuning and the Benefits of Warm Pools

SageMaker automatic model tuning has used warm pools by default for every tuning job since August 2022 (announcement). This makes it easy to benefit from warm pools: you only need to launch one tuning job, and SageMaker automatic model tuning automatically uses warm pools between the consecutive training jobs launched as part of the tuning. When each training job completes, the provisioned resources are kept alive in a warm pool, so the next training job launched as part of the tuning starts on the same pool with minimal startup overhead.

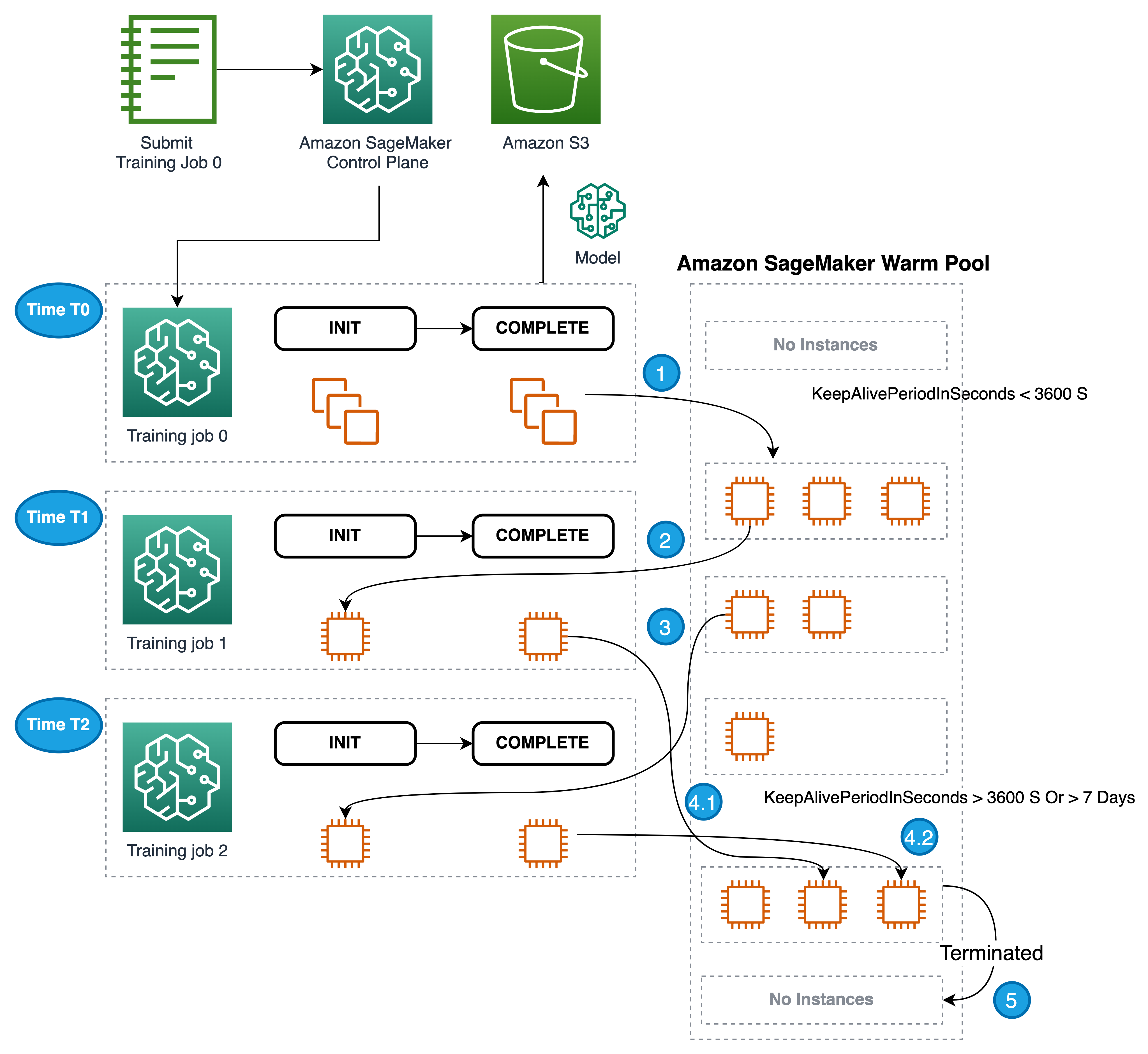

The following workflow shows a series of training job runs using a warm pool.

- After the first training job completes, the instances used for training are retained in the warm pool cluster.

- The next training job that is launched uses the instances in the warm pool, eliminating the cold start time needed to prepare the instances.

- Similarly, if further training jobs arrive whose instance type, instance count, volume, and network criteria match the resources of the warm pool cluster, the matching instances are used to run those jobs.

- After the training job completes, the instances will be held in the warm pool awaiting new jobs.

- The maximum amount of time that a warm pool cluster can continue to run consecutive training jobs is 7 days.

- As long as the cluster is healthy and the warm pool is within the specified time duration, the warm pool's status is Available.

- The warm pool stays Available until a matching training job is identified for reuse. If the warm pool's status is Terminated, this is the end of the warm pool's life cycle.

The following diagram illustrates this workflow.
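The life cycle in the list above can be modeled as a small state machine. This is a hedged sketch, not the service's logic: `keep_alive_s` stands in for the "specified time duration", the 7-day cap comes from the list, times are plain seconds since the pool was created, and the `InUse` label is a hypothetical name for a pool that is currently running a job (only Available and Terminated come from the text).

```python
from dataclasses import dataclass

MAX_LIFETIME_S = 7 * 24 * 3600  # 7-day cap on running consecutive jobs, per the list above


@dataclass
class PoolLifecycle:
    keep_alive_s: float      # hypothetical idle window ("specified time duration")
    created_at: float = 0.0  # when the first job's instances entered the pool
    idle_since: float = 0.0
    status: str = "Available"

    def on_job_request(self, now: float, matches: bool) -> bool:
        """Try to reuse the pool for a job arriving at `now`; return True on reuse."""
        self._expire(now)
        if self.status == "Available" and matches:
            self.status = "InUse"
            return True
        return False

    def on_job_complete(self, now: float) -> None:
        if self.status != "InUse":
            raise RuntimeError("no job is running on this pool")
        # Finished instances are held in the pool, waiting for the next job.
        self.status = "Available"
        self.idle_since = now
        self._expire(now)

    def _expire(self, now: float) -> None:
        if self.status != "Available":
            return
        idle_too_long = now - self.idle_since > self.keep_alive_s
        too_old = now - self.created_at > MAX_LIFETIME_S
        if idle_too_long or too_old:
            self.status = "Terminated"  # end of the warm pool's life cycle


pool = PoolLifecycle(keep_alive_s=600)
reused = pool.on_job_request(now=300, matches=True)   # arrives within the window: reused
pool.on_job_complete(now=900)
late = pool.on_job_request(now=2000, matches=True)    # idle for 1,100 s > 600 s: too late
```

Once a pool reaches Terminated, no transition leads back out of it, which matches the text: a terminated warm pool is the end of its life cycle, and the next job pays the cold start again.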

How Best Egg benefited: improvements and data points

Best Egg found that with warm pools, their SageMaker training jobs ran three times faster. In one credit modeling project, the best model was selected from eight different HPO jobs, each of which had 40 iterations with five parallel jobs at a time. Each iteration took about 1 minute to compute, whereas without warm pools they typically took 5 minutes each. In total, the process took 2 hours of compute time, with additional input from the data scientist bringing it to approximately half a business day. Without warm pools, we estimate that the computation alone would have taken 6 hours, likely spread over 2–3 business days.
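A back-of-the-envelope model helps show how per-iteration savings compound across the project. It is an idealized sketch under stated assumptions: every iteration takes the same time, the five parallel slots run in full waves, the eight tuning jobs run one after another, and nothing outside the training jobs is counted. It therefore does not attempt to reproduce the 2-hour and 6-hour figures above, which come from Best Egg's own measurements and estimates.

```python
import math


def tuning_wall_clock_minutes(
    n_tuning_jobs: int, iterations: int, parallel: int, minutes_per_iteration: float
) -> float:
    """Idealized wall-clock time: each tuning job runs ceil(iterations / parallel)
    sequential waves of training jobs, and tuning jobs run one after another."""
    waves = math.ceil(iterations / parallel)
    return n_tuning_jobs * waves * minutes_per_iteration


warm = tuning_wall_clock_minutes(8, 40, 5, 1.0)  # about 1 minute per iteration with warm pools
cold = tuning_wall_clock_minutes(8, 40, 5, 5.0)  # about 5 minutes per iteration without
print(warm, cold, cold / warm)  # prints 64.0 320.0 5.0
```

In this simplified model the per-iteration ratio passes straight through to the total, so the speedup is 5x; the threefold end-to-end improvement Best Egg reported reflects their real runs, which this model does not try to capture.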

Conclusion

In conclusion, this post discussed elements of Best Egg's business and the company's ML landscape. We reviewed how Best Egg was able to speed up its model training and tuning by enabling warm pools for its hyperparameter tuning jobs in SageMaker. We also explained how easy it is to implement warm pools for your training jobs through a simple configuration. At AWS, we encourage our readers to start exploring warm pools for iterative and repetitive training jobs.

About the authors

Tristan Miller is a Lead Data Scientist at Best Egg. He builds and deploys ML models to make important underwriting and marketing decisions. He develops bespoke solutions to address specific problems, as well as automation to increase efficiency and scale. He is also a skilled origamist.

Valerio Perrone is a Manager of Applied Sciences at AWS. He leads the science and engineering team that owns the automatic model tuning service in Amazon SageMaker. Valerio's background lies in developing algorithms for large-scale machine learning and statistical models, with a focus on data-driven decision-making and the democratization of artificial intelligence.

Ganapathi Krishnamoorthi is a Senior Machine Learning Solutions Architect at AWS. Ganapathi provides prescriptive guidance to enterprise and startup customers, helping them design and deploy cloud applications at scale. He specializes in machine learning and focuses on helping customers use AI/ML for their business outcomes. When not at work, he enjoys exploring the outdoors and listening to music.

Ajay Govindaram is a Sr. Solutions Architect at AWS. He works with strategic customers who are using AI/ML to solve complex business problems. His experience lies in providing technical direction and design assistance for modest to large-scale AI/ML application deployments. His knowledge ranges from application architecture to big data, analytics, and machine learning. He enjoys listening to music while resting, experiencing the outdoors, and spending time with his loved ones.

Hariharan Suresh is a Senior Solutions Architect at AWS. He is passionate about databases, machine learning, and designing innovative solutions. Prior to joining AWS, Hariharan was a product architect, core banking implementation specialist, and developer, and worked with BFSI organizations for more than 11 years. Outside of technology, he enjoys paragliding and cycling.