Introduction

A specific category of artificial intelligence models known as large language models (LLMs) is designed to understand and generate human-like text. The term “large” is often quantified by the number of parameters they possess. For example, OpenAI’s GPT-3 model has 175 billion parameters. Use it for a variety of tasks, like translating text, answering questions, writing essays, summarizing text. Despite the abundance of resources demonstrating the capabilities of LLMs and providing guidance on setting up chat applications with them, there are few endeavors that thoroughly examine their suitability for real-life business scenarios. In this article, you will learn how to create document querying system using LangChain & Flan-T5 XXL leveraging in building large-language based applications.

Learning Objectives

Prior to delving into the technical intricacies, let us establish the learning goals of this article:

- Understanding how LangChain can be leveraged in building large-language based applications

- A concise overview of the text-to-text framework and the Flan-T5 model

- How to create a document query system using LangChain & any LLM model

Let us now dive into these sections to understand each of these concepts.

This article was published as a part of the Data Science Blogathon.

Role of LangChain in Building LLM Applications

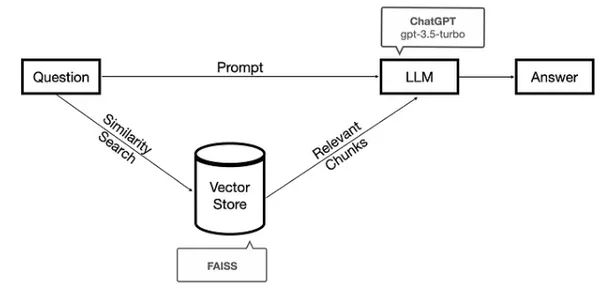

The framework LangChain has been designed for developing various applications such as chatbots, Generative Question-Answering (GQA), and summarization that harness the capabilities of large language models (LLMs). LangChain provides a comprehensive solution for constructing document querying systems. This involves preprocessing a corpus through chunking, converting these chunks into vector space, identifying similar chunks when a query is posed, and leveraging a language model to refine the retrieved documents into a suitable answer.

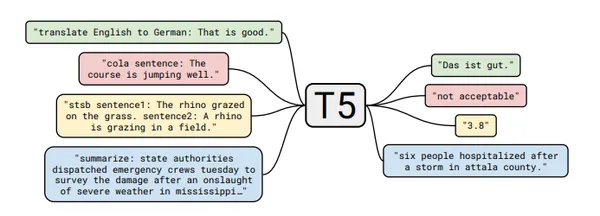

Overview of the Flan-T5 Model

Flan-T5 is a commercially available open-source LLM by Google researchers. It is a variant of the T5 (Text-To-Text Transfer Transformer) model. T5 is a state-of-the-art language model that is trained in a “text-to-text” framework. It is trained to perform a variety of NLP tasks by converting the tasks into a text-based format. FLAN is an abbreviation for Finetuned Language Net.

Let’s Dive into Building the Document Query System

We can build this document query system by leveraging the LangChain and Flan-T5 XXL model in Google Colab’s Free Tier itself. To execute the following code in Google Colab, we must choose the “T4 GPU” as our runtime. Follow the below steps to build the document query system:

1: Importing the Necessary Libraries

We would need to import the following libraries:

from langchain.document_loaders import TextLoader #for textfiles

from langchain.text_splitter import CharacterTextSplitter #text splitter

from langchain.embeddings import HuggingFaceEmbeddings #for using HugginFace models

from langchain.vectorstores import FAISS

from langchain.chains.question_answering import load_qa_chain

from langchain.chains.question_answering import load_qa_chain

from langchain import HuggingFaceHub

from langchain.document_loaders import UnstructuredPDFLoader #load pdf

from langchain.indexes import VectorstoreIndexCreator #vectorize db index with chromadb

from langchain.chains import RetrievalQA

from langchain.document_loaders import UnstructuredURLLoader #load urls into docoument-loader

from langchain.chains.question_answering import load_qa_chain

from langchain import HuggingFaceHub

import os

os.environ["HUGGINGFACEHUB_API_TOKEN"] = "xxxxx"

2: Loading the PDF Using PyPDFLoader

We use the PyPDFLoader from the LangChain library here to load our PDF file – “Data-Analysis.pdf”. The “loader” object has an attribute called “load_and_split()” that splits the PDF based on the pages.

#import csvfrom langchain.document_loaders import PyPDFLoader

# Load the PDF file from current working directory

loader = PyPDFLoader("Data-Analysis.pdf")

# Split the PDF into Pages

pages = loader.load_and_split()3: Chunking the Text Based on a Chunk Size

Use the models to generate embedding vectors have maximum limits on the text fragments provided as input. If we are using these models to generate embeddings for our text data, it becomes important to chunk the data to a specific size before passing the data to these models. that We use the RecursiveCharacterTextSplitter here to split the data which works by taking a large text and splitting it based on a specified chunk size. It does this by using a set of characters.

#import from langchain.text_splitter import RecursiveCharacterTextSplitter

# Define chunk size, overlap and separators

text_splitter = RecursiveCharacterTextSplitter(

chunk_size=1024,

chunk_overlap=64,

separators=['\n\n', '\n', '(?=>\. )', ' ', '']

)

docs = text_splitter.split_documents(pages)

4: Fetching Numerical Embeddings for the Text

In order to numerically represent unstructured data like text, documents, images, audio, etc., we need embeddings. The numerical form captures the contextual meaning of what we are embedding. Here, we use the HuggingFaceHubEmbeddings object to create embeddings for each document. This object uses the “all-mpnet-base-v2” sentence transformer model for mapping sentences & paragraphs to a 768-dimensional dense vector space.

# Embeddings

from langchain.embeddings import HuggingFaceEmbeddings

embeddings = HuggingFaceEmbeddings()5: Storing the Embeddings in a Vector Store

Now we need a Vector Store for our embeddings. Here we are using FAISS. FAISS, short for Facebook AI Similarity Search, is a powerful library designed for efficient searching and clustering of dense vectors that offers a range of algorithms that can search through sets of vectors of any size, even those that may exceed the available RAM capacity.

#Create the vectorized db

# Vectorstore: https://python.langchain.com/en/latest/modules/indexes/vectorstores.html

from langchain.vectorstores import FAISS

db = FAISS.from_documents(docs, embeddings)6: Similarity Search with Flan-T5 XXL

We connect here to the hugging face hub to fetch the Flan-T5 XXL model.

We can define a host of model settings for the model, such as temperature and max_length.

The load_qa_chain function provides a simple method for feeding documents to an LLM. By utilizing the chain type as “stuff”, the function takes a list of documents, combines them into a single prompt, and then passes that prompt to the LLM.

llm=HuggingFaceHub(repo_id="google/flan-t5-xxl", model_kwargs={"temperature":1, "max_length":1000000})

chain = load_qa_chain(llm, chain_type="stuff")

#QUERYING

query = "Explain in detail what is quantitative data analysis?"

docs = db.similarity_search(query)

chain.run(input_documents=docs, question=query)

7: Creating QA Chain with Flan-T5 XXL Model

Use the RetrievalQAChain to retrieve documents using a Retriever and then uses a QA chain to answer a question based on the retrieved documents. It combines the language model with the VectorDB’s retrieval capabilities

from langchain.chains import RetrievalQA

qa = RetrievalQA.from_chain_type(llm=llm, chain_type="stuff",

retriever=db.as_retriever(search_kwargs={"k": 3}))

8: Querying Our PDF

query = "What are the different types of data analysis?"

qa.run(query)#Output

"Descriptive data analysis Theory Driven Data Analysis Data or narrative driven analysis"query = "What is the meaning of Descriptive Data Analysis?"

qa.run(query)#import csv#Output

"Descriptive data analysis is only concerned with processing and summarizing the data."Real World Applications

In the present age of data inundation, there is a constant challenge of obtaining relevant information from an overwhelming amount of textual data. Traditional search engines often fail to give accurate and context-sensitive responses to specific queries from users. Consequently, an increasing demand for sophisticated natural language processing (NLP) methodologies has emerged, with the aim of facilitating precise document question answering (DQA) systems. A document querying system, just like the one we built, could be extremely useful to automate interaction with any kind of document like PDF, excel sheets, html files amongst others. Using this approach, a lot of context-aware extract valuable insights from extensive document collections.

Conclusion

In this article, we began by discussing how we could leverage LangChain to load data from a PDF document. Extend this capability to other document types such as CSV, HTML, JSON, Markdown, and more. We further learned ways to carry out the splitting of the data based on a specific chunk size which is a necessary step before generating the embeddings for the text. Then, fetched the embeddings for the documents using HuggingFaceHubEmbeddings. Post storing the embeddings in a vector store, we combined Retrieval with our LLM model ‘Flan-T5 XXL’ in question answering. The retrieved documents and an input question from the user were passed to the LLM to generate an answer to the asked question.

Key Takeaways

- LangChain offers a comprehensive framework for seamless interaction with LLMs, external data sources, prompts, and user interfaces. It allows for the creation of unique applications built around an LLM by “chaining” components from multiple modules.

- Flan-T5 is a commercially available open-source LLM. It is a variant of the T5 (Text-To-Text Transfer Transformer) model developed by Google Research.

- A vector store stores data in the form of high-dimensional vectors. These vectors are mathematical representations of various features or attributes. Design the vector stores to efficiently manage dense vectors and provide advanced similarity search capabilities.

- The process of building a document-based question-answering system using LLM model and Langchain entails fetching and loading a text file, dividing the document into manageable sections, converting these sections into embeddings, storing them in a vector database and creating a QA chain to enable question answering on the document.

Frequently Asked Questions

A. Flan-T5 is a commercially available open-source LLM. It is a variant of the T5 (Text-To-Text Transfer Transformer) model developed by Google Research.

A. Flan-T5 is released with different sizes: Small, Base, Large, XL and XXL. XXL is the biggest version of Flan-T5, containing 11B parameters.

google/flan-t5-small: 80M parameters

google/flan-t5-base: 250M parameters

google/flan-t5-large: 780M parameters

google/flan-t5-xl: 3B parameters

google/flan-t5-xxl: 11B parameters

A. One of the most common ways to store and search over unstructured data is to embed it and store the resulting embedding vectors, and then at query time to embed the unstructured query and retrieve the embedding vectors that are ‘most similar’ to the embedded query. A vector store takes care of storing embedded data and performing vector search for you.

A. LangChain streamlines the development of diverse applications, such as chatbots, Generative Question-Answering (GQA), and summarization. By “chaining” components from multiple modules, it allows for the creation of unique applications built around an LLM.

A. load_qa_chain is one of the ways for answering questions in a document. It works by loading a chain that can do question answering on the input documents. load_qa_chain uses all of the text in the document. One of the other ways for question answering is RetrievalQA chain that uses load_qa_chain under the hood. However, it retrieves the most relevant chunk of text and inputs only those to the large language model.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.

NEWSLETTER

NEWSLETTER