Introduction

Imagine walking through an art gallery, surrounded by vivid paintings and sculptures. Now, what if you could ask each piece a question and get a meaningful answer? You might ask, “What story are you telling?” or “Why did the artist choose this color?” That’s where Vision Language Models (VLMs) come into play. These models, like expert guides in a museum, can interpret images, understand the context, and communicate that information using human language. Whether it’s identifying objects in a photo, answering questions about visual content, or even generating new images from descriptions, VLMs merge the power of vision and language in ways that were once thought impossible.

In this guide, we’ll explore the fascinating world of VLMs, how they work, their capabilities, and the breakthrough models like CLIP, PaLiGemma, and Florence that are transforming how machines understand and interact with the world around them.

This article is based on a recent talk given by Aritra Roy Gosthipaty and Ritwik Raha, A Comprehensive Guide to Vision Language Models, at the DataHack Summit 2024.

Learning Objectives

- Understand the core concepts and capabilities of Vision Language Models (VLMs).

- Explore how VLMs merge visual and linguistic data for tasks like object detection and image segmentation.

- Learn about key VLM architectures such as CLIP, PaLiGemma, and Florence, and their applications.

- Gain insights into various VLM families, including pre-trained, masked, and generative models.

- Discover how contrastive learning enhances VLM performance and how fine-tuning improves model accuracy.

What are Vision Language Models?

Vision Language Models (VLMs) are a category of artificial intelligence systems designed to handle images or videos together with text as inputs. By combining these modalities, VLMs can perform tasks that require mapping meaning between images and text, for example describing images, answering questions about an image, or retrieving images from a text description.

The core strength of VLMs lies in their ability to bridge the gap between computer vision and NLP. Traditional models typically excelled in only one of these domains—either recognizing objects in images or understanding human language. However, VLMs are specifically designed to combine both modalities, providing a more holistic understanding of data by learning to interpret images through the lens of language and vice versa.

The architecture of VLMs typically involves learning a joint representation of both visual and textual data, allowing the model to perform cross-modal tasks. These models are pre-trained on large datasets containing pairs of images and corresponding textual descriptions. During training, VLMs learn the relationships between the objects in the images and the words used to describe them, which enables the model to generate text from images or understand textual prompts in the context of visual data.

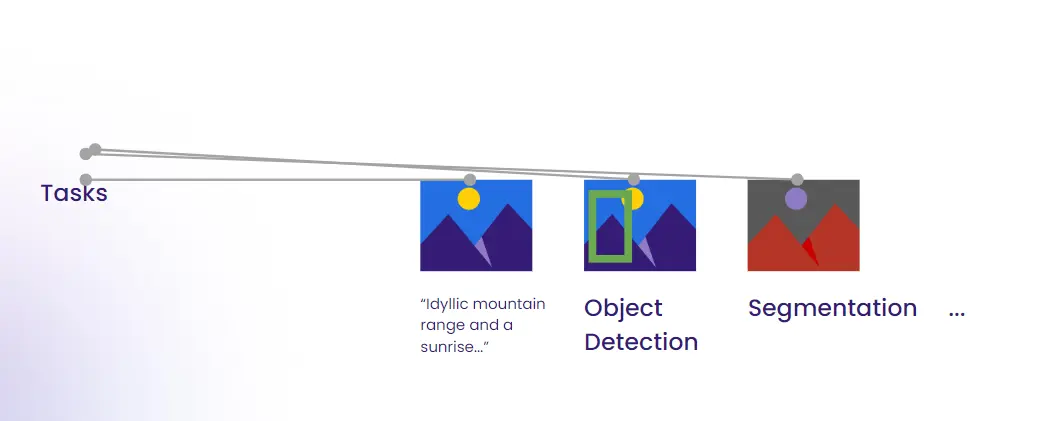

Examples of key tasks that VLMs can handle include:

- Visual Question Answering (VQA): Answering questions about the content of an image.

- Image Captioning: Generating a textual description of what is seen in an image.

- Object Detection and Segmentation: Identifying and labeling different objects or parts of an image, often with textual context.

Capabilities of Vision Language Models

Vision Language Models (VLMs) have evolved to address a wide array of complex tasks by integrating both visual and textual information. They function by leveraging the inherent relationship between images and language, enabling groundbreaking capabilities across several domains.

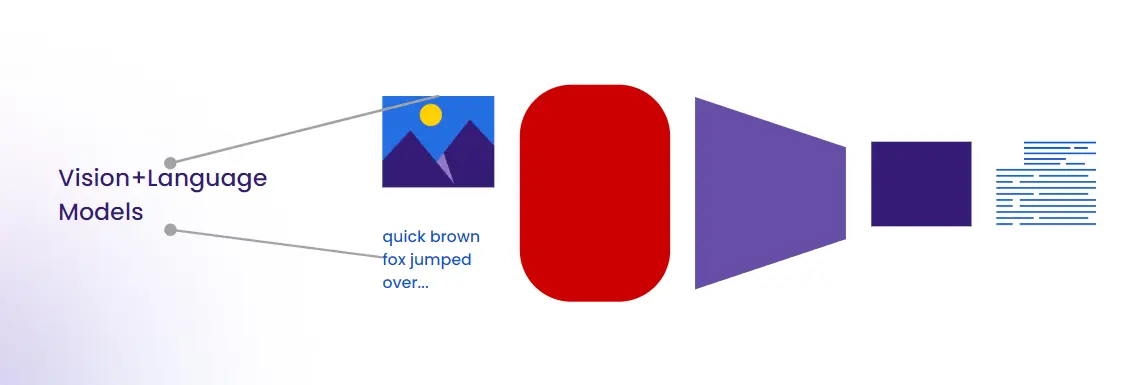

Vision Plus Language

The cornerstone of VLMs is their ability to understand and operate with both visual and textual data. By processing these two streams simultaneously, VLMs can perform tasks such as generating captions for images, recognizing objects with their descriptions, or associating visual information with textual context. This cross-modal understanding enables richer and more coherent outputs, making them highly versatile across real-world applications.

Object Detection

Object detection is a vital capability of VLMs. It allows the model to recognize and classify objects within an image, grounding its visual understanding with language labels. By combining language understanding, VLMs don’t just detect objects but can also comprehend and describe their context. This could include identifying not only the “dog” in an image but also associating it with other scene elements, making object detection more dynamic and informative.

Image Segmentation

VLMs enhance traditional vision models by performing image segmentation, which divides an image into meaningful segments or regions based on its content. In VLMs, this task is augmented by textual understanding, meaning the model can segment specific objects and provide contextual descriptions for each section. This goes beyond merely recognizing objects, as the model can break down and describe the fine-grained structure of an image.

Embeddings

Embeddings are another key building block of VLMs because they provide a shared space in which visual and textual data can interact. By mapping images and words into this common space, the model can perform operations such as retrieving an image from a text query and vice versa. Because VLMs learn highly effective image representations, these embeddings help close the gap between vision and language in cross-modal tasks.

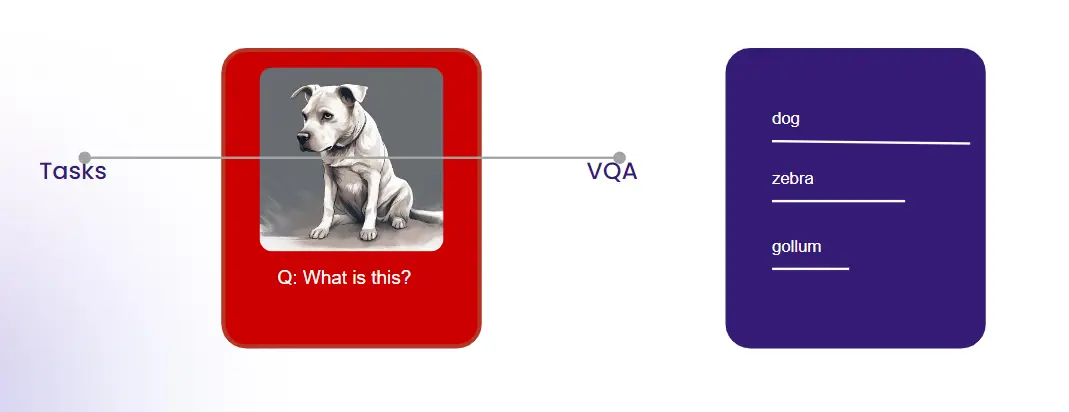
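To make the shared embedding space concrete, here is a minimal sketch of cross-modal retrieval using cosine similarity. The three-dimensional vectors, file names, and captions are hypothetical stand-ins; a real VLM would produce much larger embeddings with its image and text encoders.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings; in practice these come from the model's encoders
image_embeddings = {
    "dog.jpg": [0.9, 0.1, 0.0],
    "cat.jpg": [0.1, 0.9, 0.0],
    "car.jpg": [0.0, 0.1, 0.9],
}
text_embeddings = {
    "a photo of a dog": [0.8, 0.2, 0.1],
    "a photo of a car": [0.1, 0.0, 0.9],
}

def retrieve(query_vec, candidates):
    """Return candidate names sorted from most to least similar to the query."""
    return sorted(
        candidates,
        key=lambda name: cosine_similarity(query_vec, candidates[name]),
        reverse=True,
    )

# Text -> image retrieval
best_image = retrieve(text_embeddings["a photo of a dog"], image_embeddings)[0]
# Image -> text retrieval
best_caption = retrieve(image_embeddings["car.jpg"], text_embeddings)[0]
print(best_image, "|", best_caption)
```

Because both modalities live in the same space, the same `retrieve` function works in either direction.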

Visual Question Answering (VQA)

Visual question answering is one of the more complex ways of working with VLMs: the model is given an image and a question about that image. The VLM combines its interpretation of the image with its natural language understanding to answer the query. For example, given an image of a park and the question “How many benches can you see in the picture?”, the model must solve a counting problem to give the answer, which demonstrates reasoning as well as vision.

Notable VLM Models

Several Vision Language Models (VLMs) have emerged, pushing the boundaries of what is possible in cross-modal learning. Each model offers unique capabilities that contribute to the broader vision-language research landscape. Below are some of the most significant VLMs:

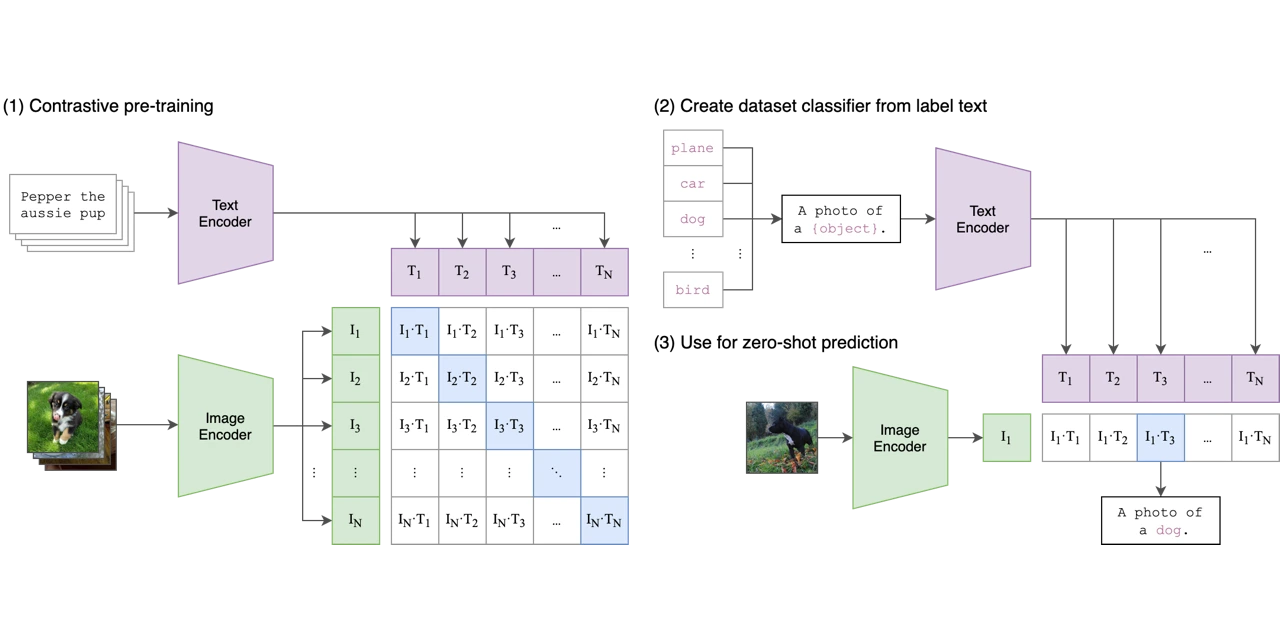

CLIP (Contrastive Language-Image Pre-training)

CLIP is one of the pioneering models in the VLM space. It utilizes a contrastive learning approach to connect visual and textual data by learning to match images with their corresponding descriptions. The model processes large-scale datasets consisting of images paired with text and learns by optimizing the similarity between the image and its text counterpart, while distinguishing between non-matching pairs. This contrastive approach allows CLIP to handle tasks such as zero-shot classification and image-text retrieval without explicit task-specific training, and its encoders are widely reused as components in image captioning and visual question answering systems.

Read more about CLIP from here.

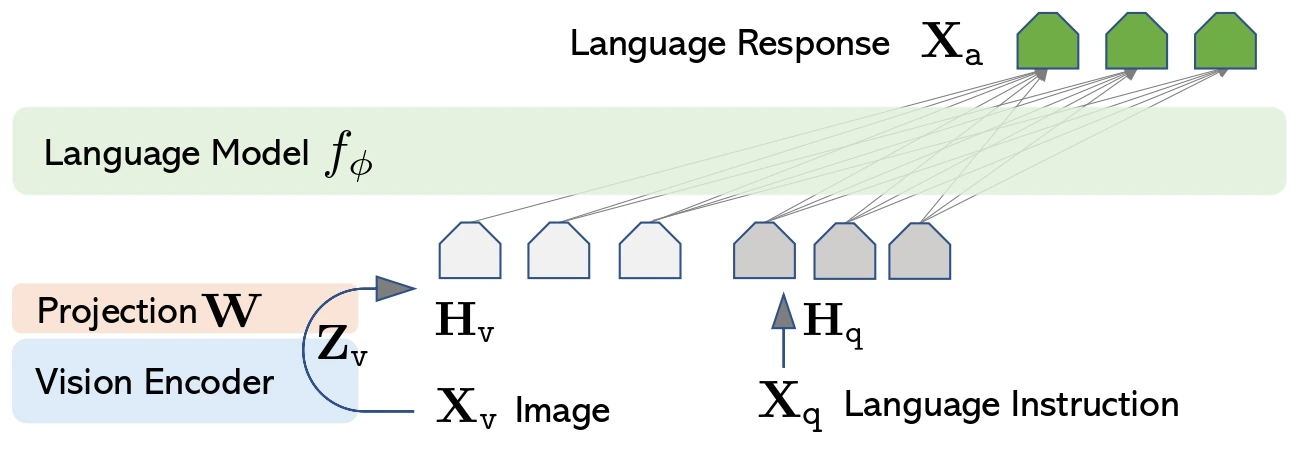

LLaVA (Large Language and Vision Assistant)

LLaVA is a sophisticated model designed to align both visual and language data for complex multimodal tasks. It connects a vision encoder to a large language model to enhance its ability to interpret and respond to image-related queries. By leveraging both textual and visual representations, LLaVA excels in visual question answering, detailed image description, and dialogue-based tasks involving images. Its integration with a powerful language model enables it to generate detailed descriptions and assist in real-time vision-language interaction.

Read more about LLaVA from here.

LaMDA (Language Model for Dialogue Applications)

Although LaMDA is mostly discussed as a language model, it can also be used in vision-language tasks. LaMDA is well suited to dialogue systems, and when combined with vision models it can perform visual question answering, image-grounded dialogue, and other multimodal tasks. Its strength is producing human-like, contextually relevant answers, which benefits any application that needs to discuss visual data, such as virtual assistants that analyze images or video.

Read more about LaMDA from here.

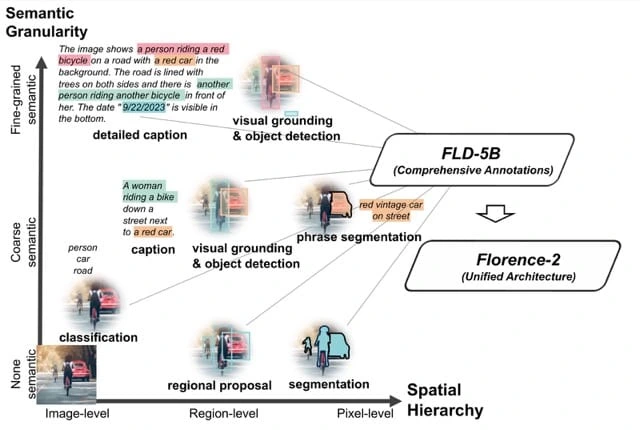

Florence

Florence is another robust VLM that incorporates both vision and language data to perform a wide range of cross-modal tasks. It is particularly known for its efficiency and scalability when dealing with large datasets. The model’s design is optimized for fast training and deployment, allowing it to excel in image recognition, object detection, and multimodal understanding. Florence can integrate vast amounts of visual and textual data. This makes it versatile in tasks like image retrieval, caption generation, and image-based question answering.

Read more about Florence from here.

Families of Vision Language Models

Vision Language Models (VLMs) are categorized into several families based on how they handle multimodal data. These include Pre-trained Models, Masked Models, Generative Models, and Contrastive Learning Models. Each family utilizes different techniques to align vision and language modalities, making them suitable for various tasks.

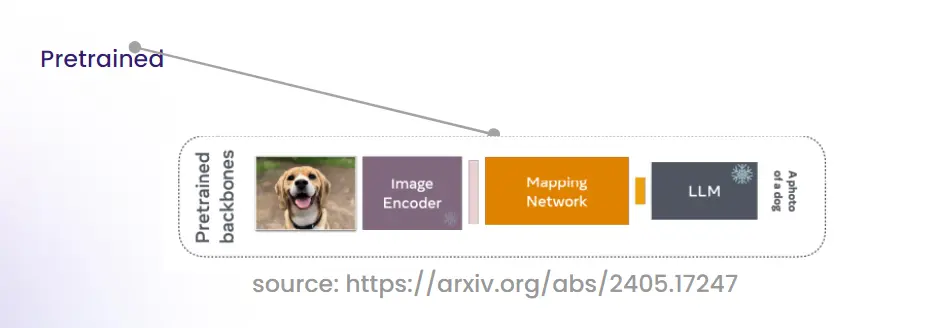

Pre-trained Model Family

Pre-trained models are built on large datasets of paired vision and language data. These models are trained on general tasks, allowing them to be fine-tuned for specific applications without needing massive datasets each time.

How it Works

The pre-trained model family uses large datasets of images and text. The model is trained to recognize images and match them with textual labels or descriptions. After this extensive pre-training, the model can be fine-tuned for specific tasks like image captioning or visual question answering. Pre-trained models are effective because they are initially trained on rich data and then fine-tuned on smaller, specific domains. This approach has led to significant performance improvements in various tasks.

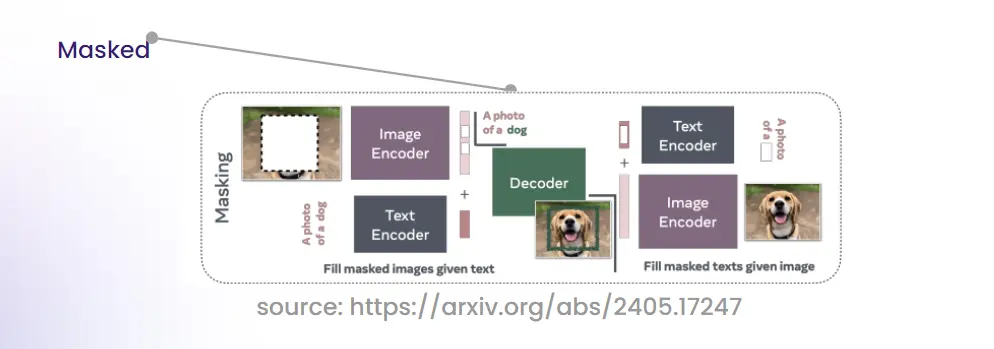
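The pre-train-then-adapt idea can be illustrated with a linear probe: a small classifier trained on top of frozen embeddings. This is a lighter-weight stand-in for full fine-tuning, not the same thing, and the two-dimensional features and labels below are hypothetical placeholders for what a pre-trained encoder would output.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def train_linear_probe(features, labels, epochs=200, lr=0.5):
    """Fit logistic regression on frozen features with plain gradient descent."""
    weights = [0.0] * len(features[0])
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(features, labels):
            error = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            grad_w = [g + error * xi for g, xi in zip(grad_w, x)]
            grad_b += error
        # Average the gradients over the dataset and take one step
        weights = [w - lr * g / len(features) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(features)
    return weights, bias

# Hypothetical frozen embeddings from a pre-trained encoder (1 = "dog", 0 = "cat")
features = [[0.9, 0.1], [0.8, 0.3], [0.2, 0.9], [0.1, 0.7]]
labels = [1, 1, 0, 0]

weights, bias = train_linear_probe(features, labels)
predictions = [
    int(sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) > 0.5)
    for x in features
]
print(predictions)
```

Only the probe's weights are updated here; the encoder that produced the features stays untouched, which is why a small labeled dataset can be enough.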

Masked Model Family

Masked models use masking techniques to train VLMs. These models randomly mask portions of the input image or text and require the model to predict the masked content, forcing it to learn deeper contextual relationships.

How it Works (Image Masking)

Masked image models operate by concealing random regions of the input image. The model is then tasked with predicting the missing pixels. This approach forces the VLM to focus on the surrounding visual context to reconstruct the image. As a result, the model gains a stronger understanding of both local and global visual features. Image masking helps the model develop a robust understanding of spatial relationships within images. This improved understanding enhances performance on tasks such as object detection and segmentation.
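As a rough sketch of the masking step only (not of the reconstruction model), the snippet below hides a random subset of square patches in a toy grayscale grid. The patch size, the 50% mask ratio, and the use of zero as the fill value are illustrative assumptions.

```python
import random

def mask_patches(image, patch_size, mask_ratio, rng):
    """Zero out a random subset of non-overlapping square patches.

    `image` is a list of rows (a 2-D grayscale grid). Returns the masked copy
    and the list of (patch_row, patch_col) indices that were hidden.
    """
    height, width = len(image), len(image[0])
    patch_ids = [
        (r, c)
        for r in range(height // patch_size)
        for c in range(width // patch_size)
    ]
    num_masked = int(len(patch_ids) * mask_ratio)
    hidden = rng.sample(patch_ids, num_masked)

    masked = [row[:] for row in image]  # copy so the original stays intact
    for pr, pc in hidden:
        for y in range(pr * patch_size, (pr + 1) * patch_size):
            for x in range(pc * patch_size, (pc + 1) * patch_size):
                masked[y][x] = 0
    return masked, hidden

rng = random.Random(0)
image = [[1 + x + 8 * y for x in range(8)] for y in range(8)]  # toy 8x8 image, no zero pixels
masked_image, hidden_patches = mask_patches(image, patch_size=2, mask_ratio=0.5, rng=rng)
print(f"hid {len(hidden_patches)} of 16 patches")
```

During training, the model would receive `masked_image` and be scored on how well it reconstructs the original pixels at the positions listed in `hidden_patches`.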

How it Works (Text Masking)

In masked language modeling, parts of the input text are hidden. The model is tasked with predicting the missing tokens. This encourages the VLM to understand complex linguistic structures and relationships. Masked text models are crucial for grasping nuanced linguistic features. They enhance the model’s performance on tasks like image captioning and visual question answering, where understanding both visual and textual data is essential.

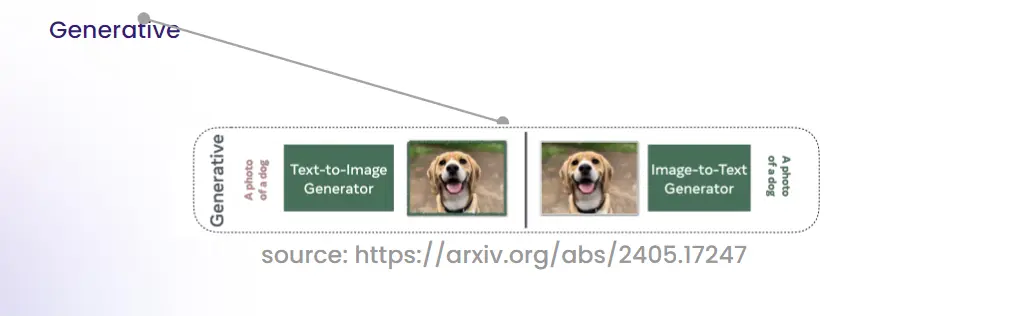
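The text side of masking can be sketched in a few lines. The `[MASK]` placeholder and the 15% ratio follow the convention popularized by BERT; both are assumptions here rather than settings taken from a specific VLM.

```python
import random

MASK_TOKEN = "[MASK]"

def mask_tokens(tokens, mask_ratio, rng):
    """Replace a random subset of tokens with MASK_TOKEN.

    Returns the masked sequence and a {position: original_token} dict,
    which is what the model would be trained to predict.
    """
    num_masked = max(1, round(len(tokens) * mask_ratio))
    positions = sorted(rng.sample(range(len(tokens)), num_masked))
    targets = {i: tokens[i] for i in positions}
    masked = [MASK_TOKEN if i in targets else tok for i, tok in enumerate(tokens)]
    return masked, targets

rng = random.Random(0)
caption = "a brown dog catches a frisbee in the park".split()
masked_caption, targets = mask_tokens(caption, mask_ratio=0.15, rng=rng)
print(" ".join(masked_caption))
print(targets)
```

In a VLM, the model would see the masked caption alongside the image, so it can use visual evidence as well as the surrounding words to recover the hidden tokens.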

Generative Families

Generative models produce new data, such as text from images or images from text. They are applied in text-to-image and image-to-text generation, where the output is synthesized in one modality from an input in the other.

Text-to-Image Generation

In text-to-image generation, the input to the model is text and the output is an image. This task depends critically on the semantic encoding of the words and on the visual features of images. The model analyzes the semantic meaning of the text to produce a high-fidelity image that corresponds to the input description.

Image-to-Text Generation

In image-to-text generation, the model takes an image as input and produces text output, such as captions. First, it analyzes the visual content of the image. Next, it identifies objects, scenes, and actions. The model then transcribes these elements into text. These generative models are useful for automatic caption generation, scene description, and creating stories from video scenes.

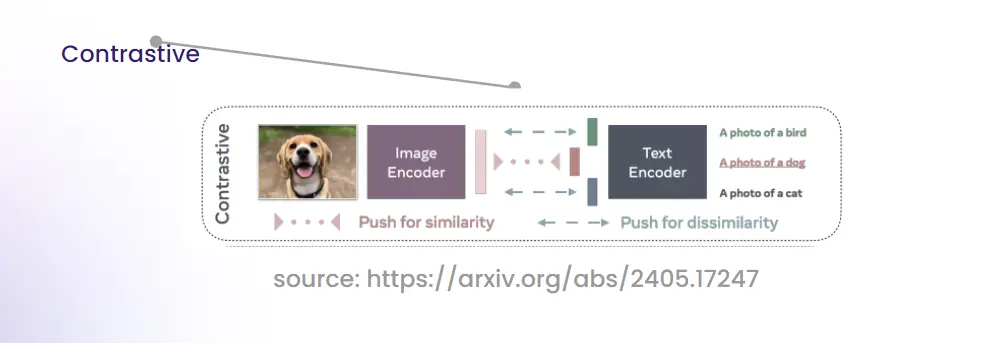

Contrastive Learning

Contrastive models, including CLIP, learn by training on matching and non-matching image-text pairs. This forces the model to map images to their correct descriptions while pushing away incorrect pairings, which yields a strong correspondence between vision and language.

How it Works?

Contrastive learning maps an image and its correct description close together in a shared vision-language semantic space. It also increases the distance between semantically mismatched image-text samples. This process helps the model understand both the image and its associated text. It is useful for cross-modal tasks such as image retrieval, zero-shot classification, and visual question answering.

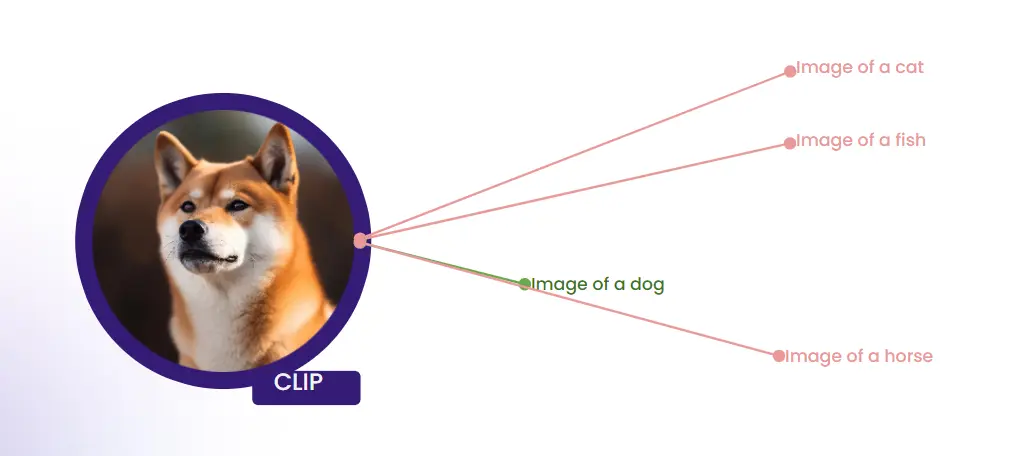
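One common way to write this objective is a symmetric softmax cross-entropy over the batch similarity matrix, in the style used by CLIP. The sketch below assumes that pair i in the image list matches pair i in the text list; the toy embeddings are hypothetical, and the temperature of 0.07 is a commonly used starting value rather than a required setting.

```python
import math

def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cross_entropy(logits, target_index):
    """Softmax cross-entropy for one row of logits (numerically stable)."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum_exp - logits[target_index]

def contrastive_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric contrastive loss over a batch where image i matches text i."""
    images = [normalize(v) for v in image_embs]
    texts = [normalize(v) for v in text_embs]
    n = len(images)
    # logits[i][j] = scaled cosine similarity between image i and text j
    logits = [
        [sum(a * b for a, b in zip(img, txt)) / temperature for txt in texts]
        for img in images
    ]
    image_to_text = sum(cross_entropy(logits[i], i) for i in range(n)) / n
    text_to_image = sum(
        cross_entropy([logits[i][j] for i in range(n)], j) for j in range(n)
    ) / n
    return (image_to_text + text_to_image) / 2

# Toy batch: aligned embeddings should score a lower loss than shuffled ones
image_embs = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.1], [0.1, 0.0, 1.0]]
aligned_text = [[0.9, 0.0, 0.1], [0.1, 0.9, 0.0], [0.0, 0.1, 0.9]]
shuffled_text = aligned_text[1:] + aligned_text[:1]

loss_aligned = contrastive_loss(image_embs, aligned_text)
loss_shuffled = contrastive_loss(image_embs, shuffled_text)
print(f"aligned: {loss_aligned:.3f}  shuffled: {loss_shuffled:.3f}")
```

Minimizing this loss raises the diagonal of the similarity matrix (correct pairs) relative to every off-diagonal entry (incorrect pairs).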

CLIP (Contrastive Language-Image Pretraining)

CLIP, or Contrastive Language-Image Pretraining, is a model developed by OpenAI. It is one of the leading models in the Vision Language Models (VLM) field. CLIP handles both images and text as inputs. The model is trained on image-text datasets. It uses contrastive learning to match images with their text descriptions. At the same time, it distinguishes between unrelated image-text pairs.

How CLIP Works

CLIP operates using a dual-encoder architecture: one for images and another for text. The core idea is to embed both the image and its corresponding textual description into the same high-dimensional vector space, enabling the model to compare and contrast different image-text pairs.

Key Steps in CLIP’s Functioning

- Image Encoding: CLIP encodes each image with a vision encoder, such as a Vision Transformer (ViT).

- Text Encoding: In parallel, the model encodes the corresponding text with a transformer-based text encoder.

- Contrastive Learning: The model then computes the similarity between the encoded image and text. Training maximizes this similarity for pairs in which the text actually describes the image and minimizes it for all other pairs.

- Cross-Modal Alignment: The result is a model that excels at tasks that require matching vision with language, such as zero-shot learning and image retrieval, and that can even help guide image synthesis from text.

Applications of CLIP

- Image Retrieval: Given a description, CLIP can find images that match it.

- Zero-Shot Classification: CLIP can classify images without any additional training data for the specific categories.

- Visual Question Answering: CLIP can understand questions about visual content and provide answers.

Code Example: Image-to-Text with CLIP

Below is an example code snippet that uses CLIP to match an image against candidate text descriptions. This example demonstrates how CLIP encodes an image and a set of text descriptions and calculates the probability that each text matches the image.

import torch

import clip

from PIL import Image

# Check if GPU is available, otherwise use CPU

device = "cuda" if torch.cuda.is_available() else "cpu"

# Load the pre-trained CLIP model and preprocessing function

model, preprocess = clip.load("ViT-B/32", device=device)

# Load and preprocess the image

image = preprocess(Image.open("CLIP.png")).unsqueeze(0).to(device)

# Define the set of text descriptions to compare with the image

text = clip.tokenize(("a diagram", "a dog", "a cat")).to(device)

# Perform inference to encode both the image and the text

with torch.no_grad():

image_features = model.encode_image(image)

text_features = model.encode_text(text)

# Compute similarity between image and text features

logits_per_image, logits_per_text = model(image, text)

# Apply softmax to get the probabilities of each label matching the image

probs = logits_per_image.softmax(dim=-1).cpu().numpy()

# Output the probabilities

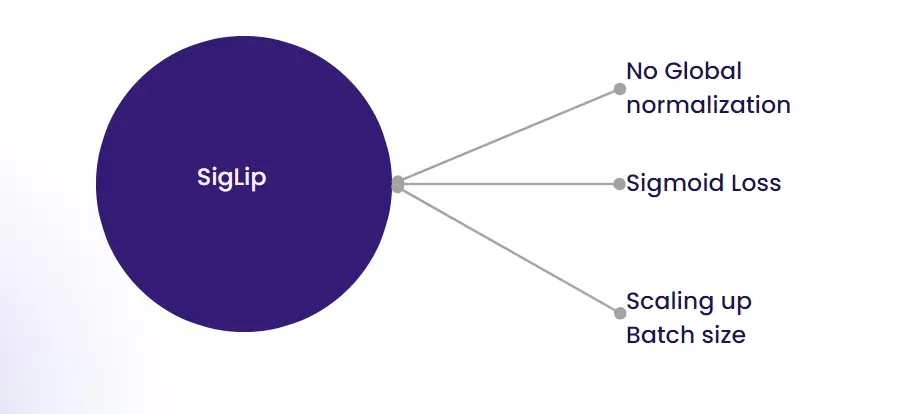

print("Label probabilities:", probs)SigLip (Siamese Generalized Language Image Pretraining)

SigLip, short for Sigmoid Loss for Language Image Pre-training, is an advanced model developed by Google that builds on the capabilities of models like CLIP. SigLip enhances image classification tasks by combining the strengths of contrastive learning with an improved training objective and pretraining techniques. It aims to improve the efficiency and accuracy of zero-shot image classification.

How SigLip Works

SigLip uses a two-tower architecture similar to CLIP's: an image encoder and a text encoder map their inputs into a shared embedding space. Its main change is the training objective. Instead of CLIP's softmax-based contrastive loss, which normalizes similarities across the whole batch, SigLip applies a sigmoid loss to each image-text pair independently, treating every pair as a binary match or non-match decision. This allows SigLip to efficiently learn high-quality representations for both images and text. The model is pre-trained on a diverse dataset of images and corresponding textual descriptions, enabling it to generalize well to various unseen tasks.

Key Steps in SigLip’s Functioning

- Two-Tower Encoders: Separate image and text encoders process their inputs independently and project them into a shared embedding space. This setup allows for effective comparison and alignment of image and text representations.

- Sigmoid Contrastive Loss: Like CLIP, SigLip pulls matching image-text pairs together and pushes non-matching pairs apart, but it scores each pair with an independent sigmoid rather than a batch-wide softmax.

- Pretraining on Diverse Data: SigLip is pre-trained on a large and varied dataset, enhancing its ability to perform well in zero-shot scenarios, where it is tested on tasks without any additional fine-tuning.
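The pairwise sigmoid loss that gives SigLip its name can be sketched as follows. Each image-text pair is treated as an independent binary decision (match or no match), so no softmax over the batch is needed. The toy embeddings are hypothetical, and the scale of 10 and bias of -10 are assumed starting values for parameters that would normally be learned.

```python
import math

def normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))

def sigmoid_pairwise_loss(image_embs, text_embs, scale=10.0, bias=-10.0):
    """Sum of per-pair binary losses, averaged over the batch size."""
    images = [normalize(v) for v in image_embs]
    texts = [normalize(v) for v in text_embs]
    total = 0.0
    for i, img in enumerate(images):
        for j, txt in enumerate(texts):
            label = 1.0 if i == j else -1.0  # +1 for the true pair, -1 otherwise
            logit = scale * sum(a * b for a, b in zip(img, txt)) + bias
            total += -log_sigmoid(label * logit)
    return total / len(images)

image_embs = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.1], [0.1, 0.0, 1.0]]
aligned_text = [[0.9, 0.0, 0.1], [0.1, 0.9, 0.0], [0.0, 0.1, 0.9]]
shuffled_text = aligned_text[1:] + aligned_text[:1]

loss_aligned = sigmoid_pairwise_loss(image_embs, aligned_text)
loss_shuffled = sigmoid_pairwise_loss(image_embs, shuffled_text)
print(f"aligned: {loss_aligned:.3f}  shuffled: {loss_shuffled:.3f}")
```

Because every pair contributes its own term, the loss does not depend on normalizing across the whole batch, which is the practical difference from a softmax-based contrastive objective.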

Applications of SigLip

- Zero-Shot Image Classification: SigLip excels in classifying images into categories it has not been explicitly trained on by leveraging its extensive pretraining.

- Visual Search and Retrieval: It can be used to retrieve images based on textual queries or classify images based on descriptive text.

- Content-Based Image Tagging: SigLip can automatically generate descriptive tags for images, making it useful for content management and organization.

Code Example: Zero-Shot Image Classification with SigLip

Below is an example code snippet demonstrating how to use SigLip for zero-shot image classification. The example shows how to classify an image into candidate labels using the transformers library.

```python
from transformers import pipeline
from PIL import Image
import requests

# Load the pre-trained SigLip model
image_classifier = pipeline(task="zero-shot-image-classification", model="google/siglip-base-patch16-224")

# Load the image from a URL
url = "http://images.cocodataset.org/val2017/000000039769.jpg"
image = Image.open(requests.get(url, stream=True).raw)

# Define the candidate labels for classification
candidate_labels = ["2 cats", "a plane", "a remote"]

# Perform zero-shot image classification
outputs = image_classifier(image, candidate_labels=candidate_labels)

# Format and print the results
formatted_outputs = [{"score": round(output["score"], 4), "label": output["label"]} for output in outputs]
print(formatted_outputs)
```

Read more about SigLip from here.

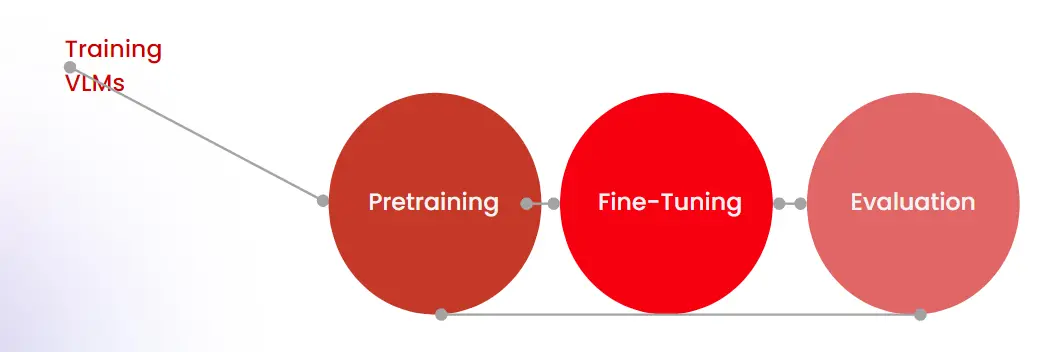

Training Vision Language Models (VLMs)

Training Vision Language Models (VLMs) involves several key stages:

- Data Collection: Gathering large datasets of paired images and text, ensuring diversity and quality to train the model effectively.

- Pretraining: Using transformer architectures, VLMs are pretrained on massive amounts of image-text data. The model learns to encode both visual and textual information through self-supervised learning tasks, such as predicting masked parts of images or text.

- Fine-Tuning: The pretrained model is fine-tuned on specific tasks using smaller, task-specific datasets. This helps the model adapt to particular applications, like image classification or text generation.

- Generative Training: For generative VLMs, training involves learning to produce new samples, such as generating text from images or images from text, based on the learned representations.

- Contrastive Learning: This technique improves the model’s ability to differentiate between similar and dissimilar data by maximizing similarity for positive pairs and minimizing it for negative pairs.

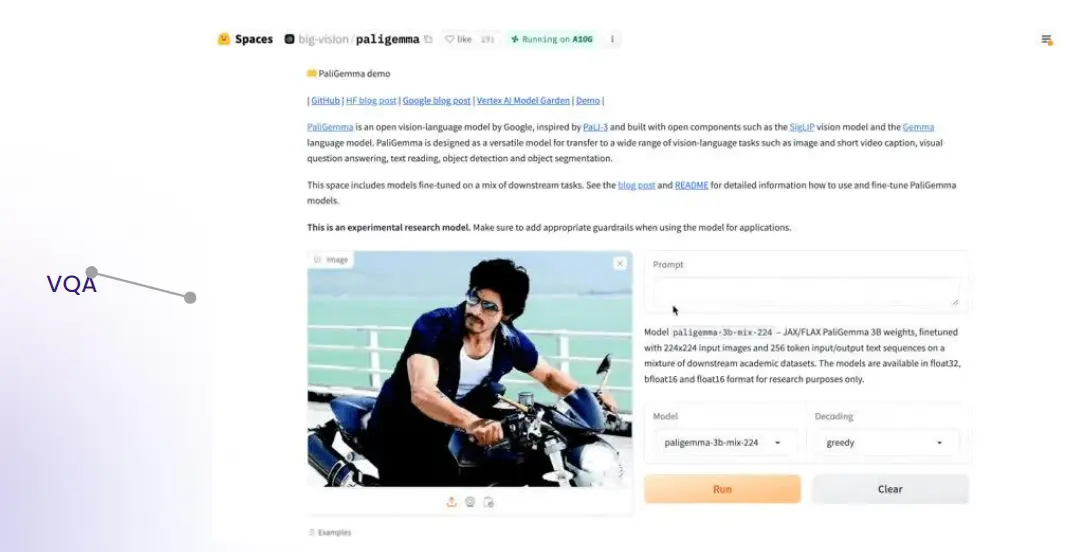

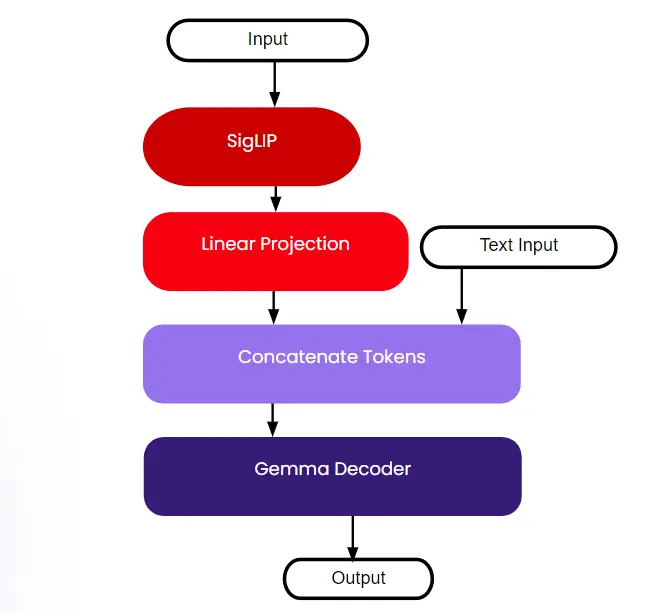

Understanding PaLiGemma

PaLiGemma is a Vision Language Model (VLM) designed to enhance image and text understanding through a structured, multi-stage training approach. It integrates components from SigLIP and Gemma to achieve advanced multimodal capabilities. Here’s a detailed overview:

How It Works

- Input: The model takes both image and text inputs. Images are encoded by the vision component, and the resulting image tokens are passed through a linear projection and concatenated with the text tokens before reaching the decoder.

- SigLIP: This component uses a Vision Transformer (ViT-So400m) architecture for image processing. It maps visual data into a shared feature space with textual data.

- Gemma Decoder: The Gemma decoder combines features from both text and images to generate output. This decoder is crucial for integrating the multimodal data and producing meaningful results.

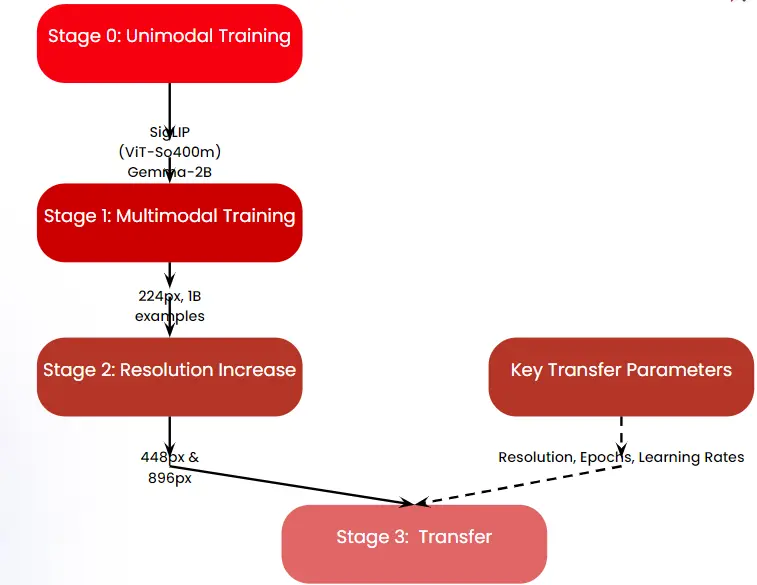
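The way image and text inputs meet before the decoder can be sketched with plain lists. This sketch assumes, following the publicly described PaLiGemma design, that the linear projection is applied to the vision encoder's patch features so they match the decoder's width, and that the projected image tokens are placed before the text tokens. All sizes and values are toy placeholders, not the real model dimensions.

```python
import random

def linear_projection(vectors, weight):
    """Multiply each input vector (length d_in) by a d_in x d_out weight matrix."""
    d_out = len(weight[0])
    return [
        [sum(v[k] * weight[k][o] for k in range(len(v))) for o in range(d_out)]
        for v in vectors
    ]

rng = random.Random(0)
VISION_DIM, DECODER_DIM = 6, 4        # toy sizes, not the real model dimensions
NUM_PATCHES, NUM_TEXT_TOKENS = 9, 5

# Stand-ins for the vision encoder output and the decoder's text embeddings
patch_features = [[rng.gauss(0, 1) for _ in range(VISION_DIM)] for _ in range(NUM_PATCHES)]
text_embeddings = [[rng.gauss(0, 1) for _ in range(DECODER_DIM)] for _ in range(NUM_TEXT_TOKENS)]
projection = [[rng.gauss(0, 0.1) for _ in range(DECODER_DIM)] for _ in range(VISION_DIM)]

image_tokens = linear_projection(patch_features, projection)  # now in decoder space
decoder_input = image_tokens + text_embeddings                # image tokens first, then the prompt
print(len(decoder_input), "tokens of width", len(decoder_input[0]))
```

After this step the decoder sees a single sequence and can attend across image and text tokens when generating its output.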

Training Phases of PaLiGemma

Let us now look into the training phases of PaLiGemma below:

- Unimodal Training:

  - SigLIP (ViT-So400m): The vision encoder is pre-trained on its own to build a strong visual representation.

  - Gemma-2B: The language model is pre-trained on text alone, focusing on generating robust textual embeddings.

- Multimodal Training:

  - 224px, 1B examples: During this phase, the model learns to handle image-text pairs at a resolution of 224px, using on the order of one billion examples to refine its multimodal understanding.

- Resolution Increase:

  - 448px and 896px: Increases the image resolution to improve the model’s capability to handle higher detail and more complex multimodal tasks.

- Transfer:

  - Resolution, Epochs, Learning Rates: Adjusts key parameters like resolution, the number of training epochs, and learning rates to optimize performance and transfer learned features to new tasks.

Read more about PaLiGemma from here.

Conclusion

This guide on Vision Language Models (VLMs) has highlighted their revolutionary impact on combining vision and language technologies. We explored essential capabilities like object detection and image segmentation, notable models such as CLIP, and various training methodologies. VLMs are advancing AI by seamlessly integrating visual and textual data, setting the stage for more intuitive and advanced applications in the future.

Frequently Asked Questions

Q1. What is a Vision Language Model (VLM)?

A. A Vision Language Model (VLM) integrates visual and textual data to understand and generate information from images and text. It also enables tasks like image captioning and visual question answering.

Q2. How does CLIP work?

A. CLIP uses a contrastive learning approach to align image and text representations, allowing it to match images with text descriptions effectively.

Q3. What are the main capabilities of VLMs?

A. VLMs excel in object detection, image segmentation, embeddings, and visual question answering, combining vision and language processing to perform complex tasks.

Q4. What is the purpose of fine-tuning in VLMs?

A. Fine-tuning adapts a pre-trained VLM to specific tasks or datasets, improving its performance and accuracy for particular applications.
