Introduction

The rise of large language models (LLMs), such as OpenAI’s GPT and Anthropic’s Claude, has led to the widespread adoption of generative ai (GenAI) products in enterprises. Organizations across sectors are now leveraging GenAI to streamline processes and increase the efficiency of their workforce. Integrating LLM agents into an organization requires thoughtful planning and a systematic approach to maximize their potential. This will also ensure a smooth adoption and long-term scalability. In this article, we will go through the steps involved in successfully integrating LLM agents into your organization.

Overview

- Understand the various steps involved in integrating LLM agents into your organization.

- Learn how to implement each of these steps and what to keep in mind during implementation.

10 Steps to Integrate LLM Agents in an Organization

The importance of LLM agents lies in their potential to transform various industries by automating tasks that require human-like understanding and interaction. They can enhance productivity, improve user experiences, and provide personalized assistance. Their ability to learn from vast amounts of data enables them to continuously improve and adapt to new tasks, making them versatile tools in the rapidly evolving technological landscape.

Without further ado, here is the 10-step guide to follow while implementing LLM agents in your organization.

Step 1: Identify Use Cases

The first step in integrating LLM agents into an organization is to identify their needs and specific applications. All stakeholders must have a clear understanding of how LLM agents can be used across departments and for what specific tasks. Once the use cases are defined, you can then outline clear objectives – such as reducing human labour by 10%, improving efficiency by 15%, or enhancing customer satisfaction by 20%.

Here are some of the most common use cases of LLM agents in enterprises:

- Customer Support: Automating responses to common queries or even the entire customer service operations via chatbots.

- Internal Knowledge Management: Summarizing complex documents, generating reports, or assisting with research.

- Automation of Repetitive Tasks: Automating routine tasks like scheduling, data entry, and workflow processes.

- Content Generation: Drafting marketing materials, product descriptions, or blog posts.

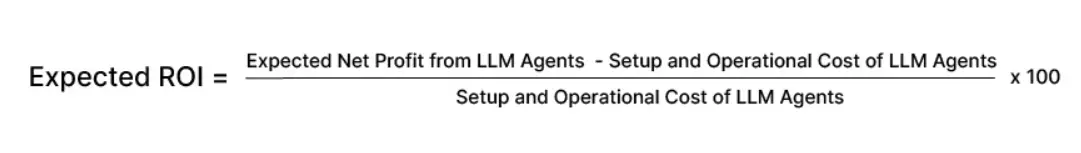

Step 2: Calculate the ROI

Before coming up with an implementation strategy based on the use cases, it is important to analyse the use-case and estimate the expected returns of investing in the LLM agent. The ROI (return-on-investment) report is what will tell the stakeholders where exactly to invest in and if it is worth the investment.

You can calculate this using the following formula:

Once the expected ROI is calculated, the final decision is taken based on the ROI comparison with other projects and the long-term business strategy of the company.

Also Read: How to Measure the ROI of GenAI Investments?

Step 3: Decide Who Should Build the LLM Agent

Once a company decides to invest in GenAI or LLM agent projects, the primary decision to make is who will build the LLMs. These agents can either be built in-house or be outsourced to a third party. Here’s the difference between the two:

- In-house Development

Building LLM agents requires specialized personnel, IT or cloud infrastructure, and continuous maintenance. Organizations can develop these agents in-house, provided they have such resources. The existing development team must have the skills and bandwidth to execute the project, else, the company will have to spend on hiring and training a new team solely for LLM agent development. - Third-party Development

Many companies prefer hiring an external consultant to build the agents. This ensures that the job gets done without having to spend on upskilling, hiring, or building an in-house team. These consultants can also provide other services such as maintenance and updation. It is a strategic option in organizations where a full-time LLM development team is not required to be on pay-roll.

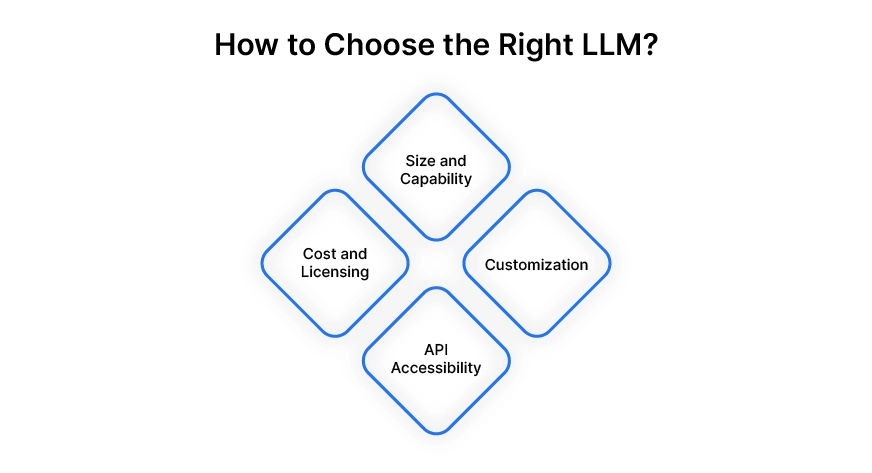

Step 4: Choose the Right LLM

Another important decision to make in this phase is whether the organization requires a custom-built LLM or a proprietary LLM. With so many large language models available today, you may already find an existing one for your required task. However, if the specific use case requires extensive customization, then fine-tuning an open source LLM is the only way to go.

Here are some key factors to consider while choosing an LLM:

- Size and Capability: Larger models like Llama 3.1 405B offer more sophisticated language understanding and generation capabilities but require more computational resources.

- Customization: Only open-source LLMs allow fine-tuning of specific data relevant to your industry, improving performance for niche applications.

- API Accessibility: Ensure that the LLM offers API integration to easily connect with your existing infrastructure and workflows.

- Cost and Licensing: Evaluate pricing structures for API usage, licensing for in-house models, or open-source alternatives.

While open-source models such as Meta’s LLaMA 3.1, Mistral 7B, and Phi-3.5, are available for free, you would need the resources to customize them for your needs. Meanwhile, proprietary paid models such as OpenAI’s GPT-4 and Anthropic’s ai/” target=”_blank” rel=”noreferrer noopener nofollow”>Claude come at a cost and cannot be customized.

Step 5: Develop the LLM Agent

Be it built in-house or from an external source, the development of the LLM agent is one of the most crucial steps in this process. The requirements must be clearly defined and the organization must oversee the development to ensure that these requirements are met.

The development phase would include the agent being tested by domain experts for usability and possible errors at various stages. This would be followed by multiple iterations to ensure that all the issues are sorted before the final roll-out.

Many organizations these days choose LLM development frameworks such as AutoGen, Crew ai, and LangChain. These platforms offer flexibility in customization and scalability, while being easy to use.

Step 6: Ensure the Security of the LLM Agent

Before integrating an LLM agent into an organization, it is important to ensure the safety of the developed agent. There are various types of security threats to LLM agents that can jeopardise their functioning, manipulate outputs, and even try to steal personal information.

Let’s learn about some of these threats and how to fight them.

- Prompt Injection and Adversarial Attacks

LLMs generate responses based on input prompts, which makes them vulnerable to prompt injection attacks. Users can manipulate the input to produce unintended or harmful outputs, or even steal confidential data through tactfully crafted prompts. To prevent this, organizations must implement input validation, context-aware filtering, and set boundaries on acceptable outputs. - Model Extraction Attacks

Attackers may attempt to replicate the LLM’s behaviour by sending numerous queries to the model and reconstructing its internal architecture. This allows them to create a near-identical copy of the model without needing access to the original data or resources. Rate-limiting the number of queries from a single user, implementing API access controls, and adding noise to responses can make it harder for attackers to reverse-engineer the model this way. - Privacy Leakage

LLMs can accidentally leak sensitive or personal information, if it was part of their training data. This may include personal emails, addresses, or confidential business details. To mitigate this, organizations should ensure that personally identifiable information (PII) is removed from training datasets. They must also apply privacy-preserving techniques like differential privacy or use federated learning methods to reduce further risk.

Apart from addressing the above security issues, it is important to ensure that the LLM’s integration adheres to data privacy laws. The model must follow the guidelines mentioned in the ai-risk-management-framework” target=”_blank” rel=”noreferrer noopener nofollow”>NIST (National Institute of Standards and technology) privacy framework, GDPR (General Data Protection Regulation), etc. to ensure that sensitive information is adequately protected.

Here’s an article about developing generative ai responsibly.

Step 7: Deploy and Test the LLM Agent

Once the LLM agent is safe and ready to use, we move on to the deployment and testing phase. When it comes to deployment, the LLM agent should fit seamlessly into the existing workflows and software systems of the organization. Here are some ways to ensure this:

- API Integrations: Develop APIs to integrate the LLM with CRM systems, help desks, and content management platforms.

- Custom User Interfaces: Create intuitive interfaces where employees or customers can interact with the ai. This could be chatbots, dashboards, or document analysis tools.

- Automation Pipelines: Set up automation workflows that use the LLM agent to trigger actions based on events (e.g., when a customer submits a query, the LLM auto-generates a response).

Similar to the development phase, you could follow the canary deployment strategy, wherein the agent is first rolled out to a select few for testing and feedback. This could be a small-scale pilot for the heads of certain departments to try out and assess its performance. Integrating an LLM agent into an organization involves many such levels of testing before widespread deployment.

During this testing phase, one should:

- Measure Performance: Collect quantitative and qualitative data on the agent’s performance—response time, accuracy, user satisfaction, etc.

- Identify Bottlenecks: Look for any operational or technical bottlenecks that may slow down the integration.

- Gather Feedback: Involve employees and customers in testing and collect their feedback to make any necessary adjustments.

Step 8: Optimize the Efficiency of the LLM Agent

The optimization phase goes hand-in-hand with the deployment and testing of the LLM agent. The two main factors to consider for optimizing the efficiency of the agents are cost and speed. The major part of LLM agent optimization lies in finding the right balance between the two. Here are some suggestions on how the speed of an LLM agent can be enhanced while reducing the cost:

- Choosing smaller, task-specific models for less complex tasks can help increase the speed.

- Applying techniques like model pruning and quantization on larger models can reduce resource consumption, and hence, the cost, without major performance loss.

- Using specialized hardware such as GPUs or TPUs can greatly improve inference speeds although they come at higher costs.

- To enhance scalability, developers can leverage cloud-based solutions like elastic scaling and spot instances. These allow systems to adjust resource use based on demand, preventing over-provisioning and cutting costs.

Step 9: Launch the LLM Agent Across the Organization

After the canary deployment, testing, iterations, and optimization, the LLM agent is now ready for widespread integration. It is now time to educate the team members and incorporate change management.

Introducing an LLM agent into an organization often requires changes in workflow and mindset. Following the below steps can help ensure a smooth adoption:

- Employee Training: Train employees on how to use the new LLM agent effectively. This includes understanding its limitations, leveraging it for the right tasks, and interpreting its outputs.

- Documentation: Create guides and reference materials that explain the ai’s functionality, troubleshooting tips, and best practices.

- Change Management: Communicate clearly with your teams about the reasons for the integration, its benefits, and how it aligns with the organization’s goals.

Step 10: Constantly Monitor and Update the Agents

Although a lot of testing and fine-tuning has been done during the development, deployment, and other stages, it is important to constantly monitor and update LLM agents. Not only will this ensure they are efficient, safe, and reliable to use, it will also help identify and rectify any biases, errors, or lags, in the functioning of the agents. Continuously fine-tuning the agents based on new data, and regularly updating them with fresh insights can improve their accuracy and relevance over time.

Here are the two steps involved in this phase:

- Track KPIs: Define key performance indicators (KPIs) such as reduction in response time, increase in automation, and customer satisfaction improvements.

- Error Handling and Auditing: Set up a system for reviewing and correcting any mistakes the agent makes. In some cases, ai agents might require human-in-the-loop (HITL) workflows to ensure quality.

Conclusion

Integrating LLM agents into an organization is a powerful way to enhance productivity, improve customer experiences, and automate repetitive tasks. However, the integration process requires careful planning, from defining use cases to ensuring compliance with privacy laws.

With the right infrastructure, data preparation, and training, LLMs can become a transformative asset in your organization, driving innovation and efficiency at every level. By following these steps, businesses can ensure a smooth and successful adoption of LLM agents, while staying agile in the evolving ai landscape.

You too can harness the power of generative ai and enhance the capabilities of your organization. Here’s how we can help you make the transition into a next-gen enterprise. Do check out the link to learn how your organization can leverage generative ai and make the most of it.

Frequently Asked Questions

A. Here are some of the most common use cases of LLM agents in organizations:

– Customer support automation

– Content generation for blogs, ads, and emails

– Data analysis and reporting

– Personalized marketing

– Internal knowledge management

A. An LLM generates human-like text, while an LLM agent uses an LLM to autonomously perform tasks, like answering queries or interacting with systems.

A. Here are some of the challenges faced by organizations while integrating LLM agents into their workforce:

– Data privacy concerns

– High computational needs

– Integration with existing systems

– Model accuracy

– Employee training and adoption

A. OpenAI’s GPT-4, Anthropic’s Claude, Mistral, Google’s Gemini, and Meta’s LLaMA series are some of the most commonly used LLMs in businesses.

A. Simple LLM applications can take weeks, while complex ones may take months, depending on customization and infrastructure.

A. Data privacy and model bias are potential risks, so organizations must ensure compliance with data protection regulations and implement safeguards.

NEWSLETTER

NEWSLETTER