On Wednesday, Adobe unveiled Firefly’s AI-powered video generation tools, which are coming to beta later this year. As with many things AI-related, the examples are equal parts fascinating and terrifying as the company slowly integrates tools designed to automate much of the creative work its prized user base is paid to do today. Echoing the AI salesmanship found elsewhere in the tech industry, Adobe is pitching it all as a complementary technology that “helps take the tedium out of post-production.”

Adobe describes its new Firefly-powered AI tools for text-to-video, Generative Extend (which will be available in Premiere Pro), and image-to-video as helping editors with tasks like “navigating gaps in footage, removing unwanted objects from a scene, smoothing out sharp cut transitions, and hunting down the perfect b-roll.” The company says the tools will give video editors “more time to explore new creative ideas, the part of the job they love.” (If we take Adobe at face value, we have to believe that employers won’t simply increase their production demands on editors once the industry has fully adopted these AI tools. Or pay less. Or employ fewer people. But I digress.)

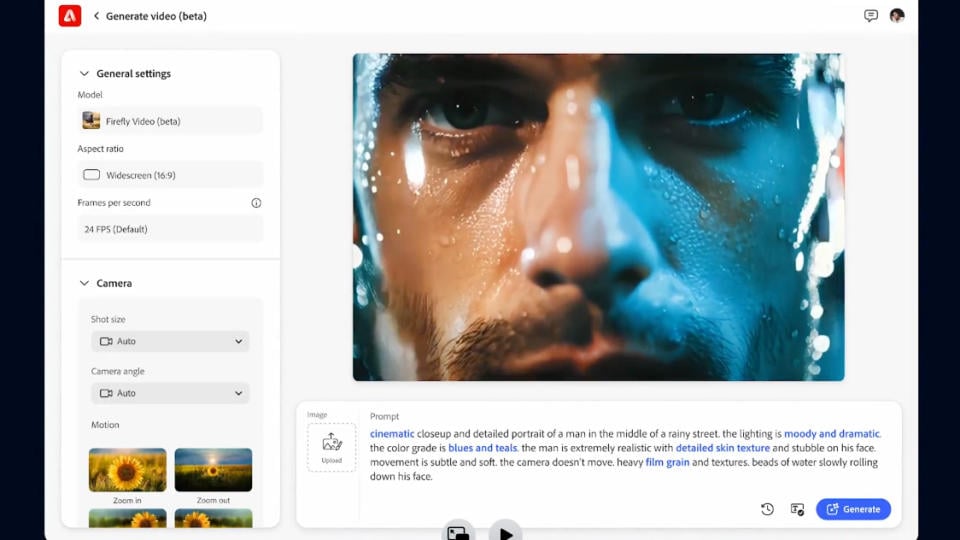

Firefly Text-to-Video lets you (you guessed it) create AI-generated videos from text prompts. It also includes tools to control camera angle, movement, and zoom. It can take a shot with gaps in its timeline and fill them in. It can even take a still reference image and turn it into a convincing AI video. Adobe says its video models excel with “natural world video,” helping you create situational shots or b-roll on the fly without much of a budget.

To see how convincing the technology appears to be, check out Adobe's examples in its promotional video.

Even though these are samples curated by a company trying to sell you its products, their quality is undeniable. Detailed text prompts describing a shot of a burning volcano, a dog lounging in a field of wildflowers, or (proving it can handle the fantastical, too) miniature wool monsters having a good time dancing around produce just that. If these results are emblematic of the tools' typical performance (not a guarantee), then TV, film, and advertising production will soon have some powerful shortcuts at their disposal, for better or worse.

Meanwhile, Adobe’s image-to-video example starts with an uploaded image of a galaxy. A text prompt instructs the tool to transform it into a video that zooms out from the star system to reveal the inside of a human eye. The company’s Generative Extend demo shows a pair of people walking along a stream in the woods, with an AI-generated segment filling a gap in the footage. (It was convincing enough that I couldn’t tell which part of the result was AI-generated.)

Reuters reports that the tool will only generate five-second clips, at least at first. To Adobe’s credit, it says its Firefly video model is designed to be commercially safe and is only trained on content the company has permission to use. “We only train them on the Adobe Stock content database containing 400 million images, illustrations, and videos that are curated to not contain intellectual property, trademarks, or recognizable characters,” Adobe VP of generative AI Alexandru Costin told Reuters. The company also stressed that it never trains its models on its users’ work. However, whether it puts them out of work or not is another question.

Adobe says its new video models will be available in beta later this year. You can sign up for a waitlist to test them.