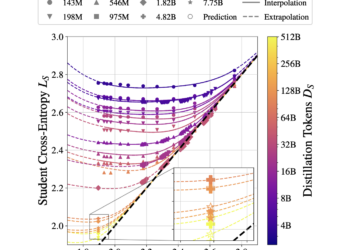

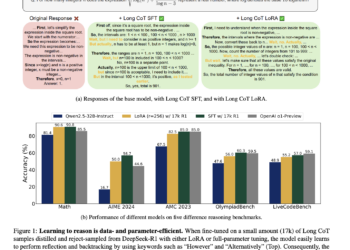

This Apple AI paper presents a distillation scaling law: a compute-optimal approach to training efficient language models

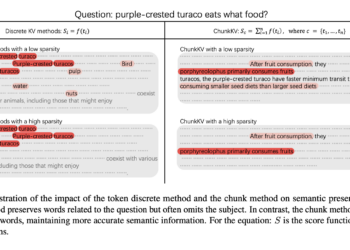

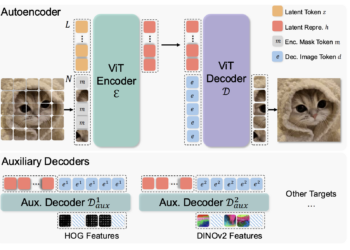

Language models have become increasingly expensive to train and deploy. This has led researchers to explore techniques such as model ...
