COCOM: A context compression method that streamlines context integration for efficient response generation in RAG

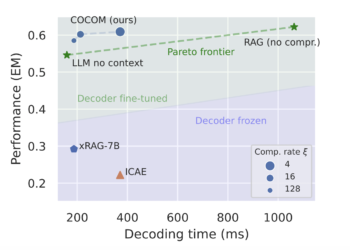

One of the main challenges for Retrieval-Augmented Generation (RAG) models is handling long contextual inputs efficiently. While ...