Compress and Compare: Interactive Evaluation of Efficiency and Behavior in Machine Learning Model Compression Experiments

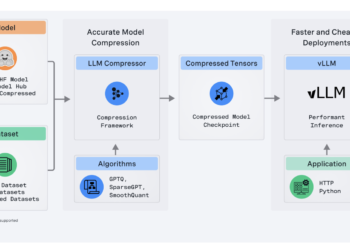

*Equal contributors. To deploy machine learning models on-device, practitioners use compression algorithms to shrink and speed up the ...

