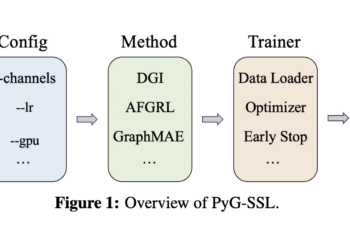

PyG-SSL – An open-source library for self-supervised learning on graphs that supports various scientific computing and deep learning backends

In complex domains such as social networks, molecular biology, and recommender systems, data is structured as graphs consisting of nodes, edges, ...