Image by author

Running large language models (LLMs) locally has become popular because it offers security, privacy, and more control over model outputs. In this mini tutorial, we will learn the easiest way to download and use the Llama 3 model.

Llama 3 is the latest family of LLMs from Meta AI. It is open source, comes with advanced AI capabilities, and, according to Meta, generates better responses than Gemma, Gemini, and Claude 3.

What is Ollama?

Ollama is an open-source tool for running LLMs like Llama 3 on your local machine. Thanks to new research and development, these large language models no longer require large amounts of VRAM, compute, or storage. Instead, they are optimized to run on laptops.
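To see why a quantized model can fit on a laptop, the sketch below does the back-of-the-envelope arithmetic: weight memory is roughly the number of parameters times the bits per weight, divided by 8. The parameter count (about 8 billion, for the smaller Llama 3 variant) and the bit widths are assumptions chosen for illustration; this tutorial does not say which quantization Ollama uses, and the helper name is illustrative.

```python
def estimate_weight_size_gb(num_params: float, bits_per_weight: float) -> float:
    """Rough size of a model's weights in gigabytes (10**9 bytes).

    Only the weights are counted; the KV cache, activations and runtime
    overhead are ignored, so actual memory use while generating is higher.
    """
    if num_params <= 0 or bits_per_weight <= 0:
        raise ValueError("num_params and bits_per_weight must be positive")
    return num_params * bits_per_weight / 8 / 1e9


# Assumed model size: about 8 billion parameters (the Llama 3 8B variant).
NUM_PARAMS = 8e9

# Assumed precisions; 4.5 bits models 4-bit weights plus one 16-bit scale
# per block of 32 weights (4 + 16/32 = 4.5 bits per weight).
for label, bits in [("16-bit floats", 16), ("8-bit quantized", 8), ("4-bit quantized", 4.5)]:
    print(f"{label:<16} ~{estimate_weight_size_gb(NUM_PARAMS, bits):.1f} GB")
```

Under these assumptions, 16-bit weights would need about 16 GB, while the 4-bit estimate of roughly 4.5 GB is in the same range as the 4.7 GB download mentioned later in this tutorial.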

There are multiple tools and frameworks for running LLMs locally, but Ollama is the easiest to set up and use. It lets you use LLMs directly from a terminal or PowerShell. It's fast and comes with features that let you get started right away.

The best part about Ollama is that it integrates with a wide range of software, extensions, and applications. For example, you can use the CodeGPT extension in VS Code and connect it to Ollama to start using Llama 3 as your AI code assistant.
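Integrations like this work because Ollama runs a local HTTP server that other programs can call. The sketch below is a minimal, hedged example using only the Python standard library; it assumes the server is on its default address, `http://localhost:11434`, and uses the non-streaming form of the `/api/generate` endpoint. The helper names `build_generate_request` and `generate` are illustrative, not part of Ollama.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port, 11434.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3") -> str:
    """Send the prompt and return the generated text (requires a running Ollama server)."""
    with urllib.request.urlopen(build_generate_request(prompt, model)) as response:
        body = json.load(response)
    return body["response"]  # the full reply when "stream" is False
```

With Ollama running and the model downloaded, `generate("Explain list comprehensions in one sentence.")` should return the model's reply as a string. Editor extensions talk to the same local server, which is why no internet connection is needed once the model is on disk.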

Ollama Installation

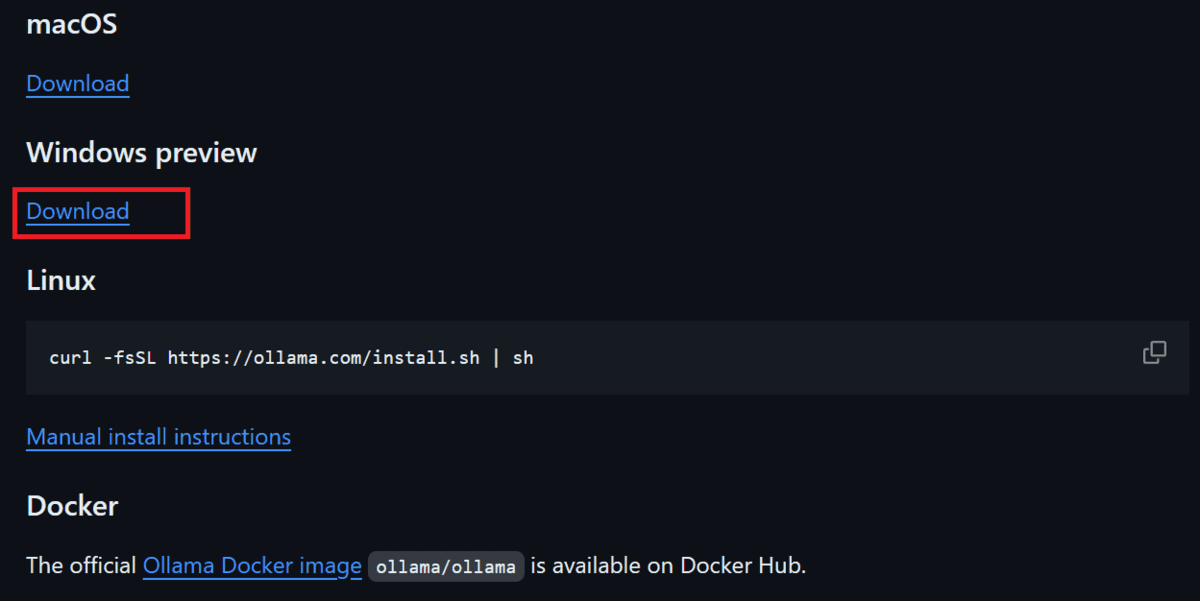

Download and install Ollama by going to the GitHub repository ollama/ollama, scrolling down, and clicking the download link for your operating system.

Image from ollama/ollama | Download options for various operating systems.

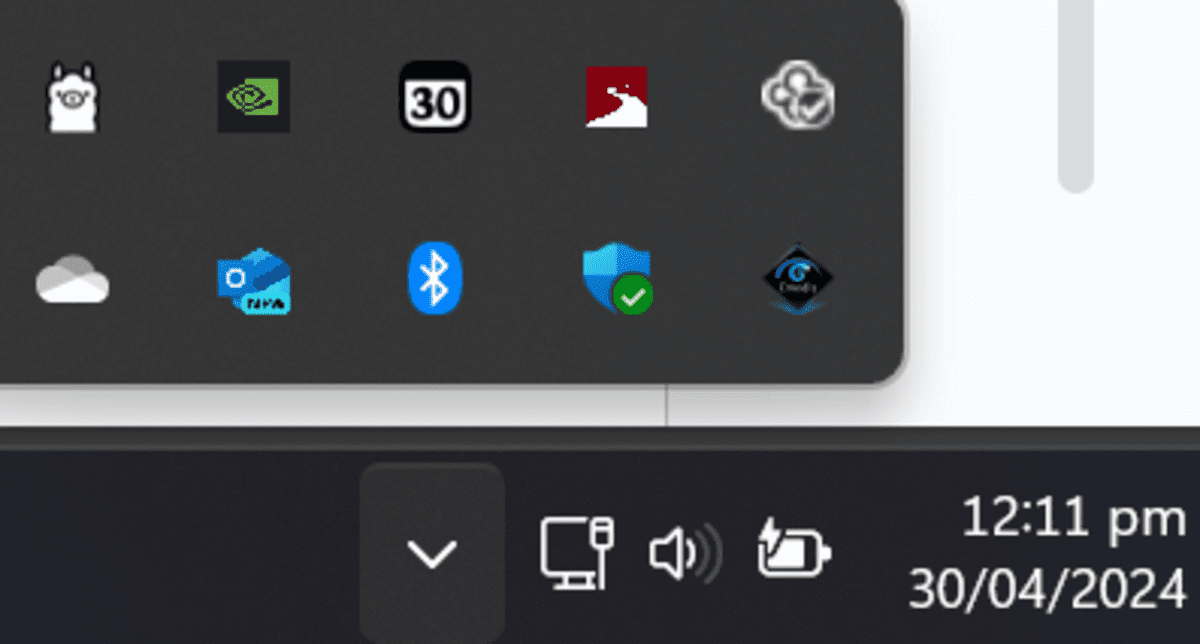

Once Ollama has been installed successfully, it will appear in your system tray as shown below.

Downloading and using Llama 3

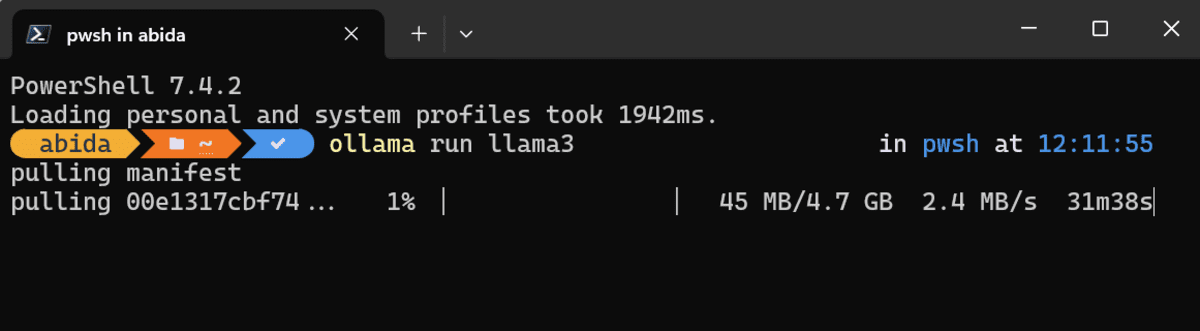

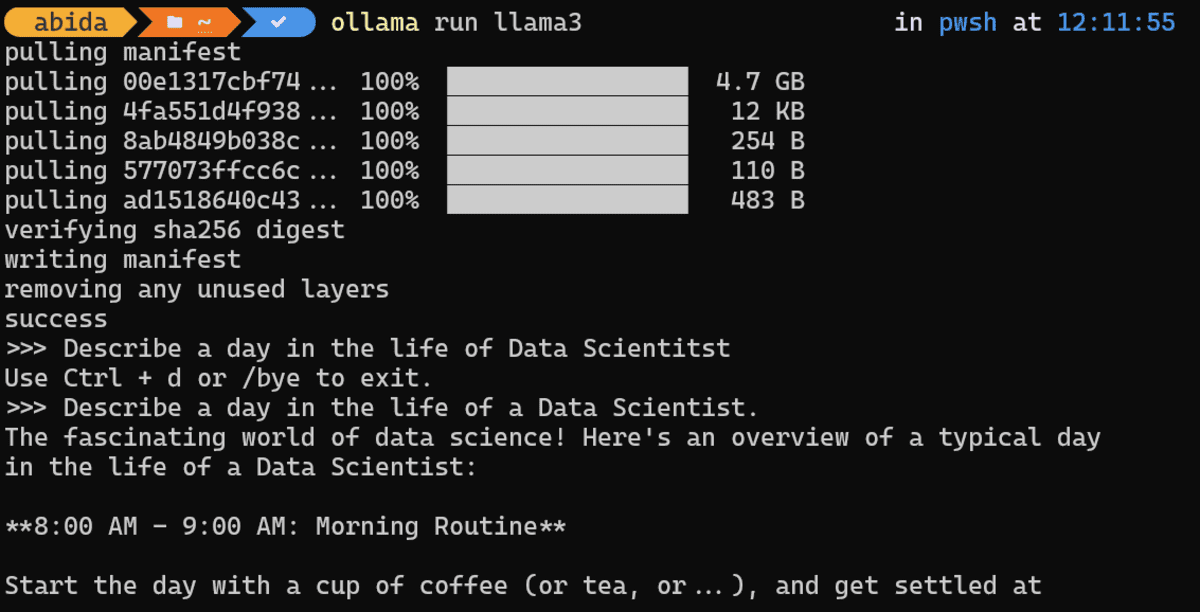

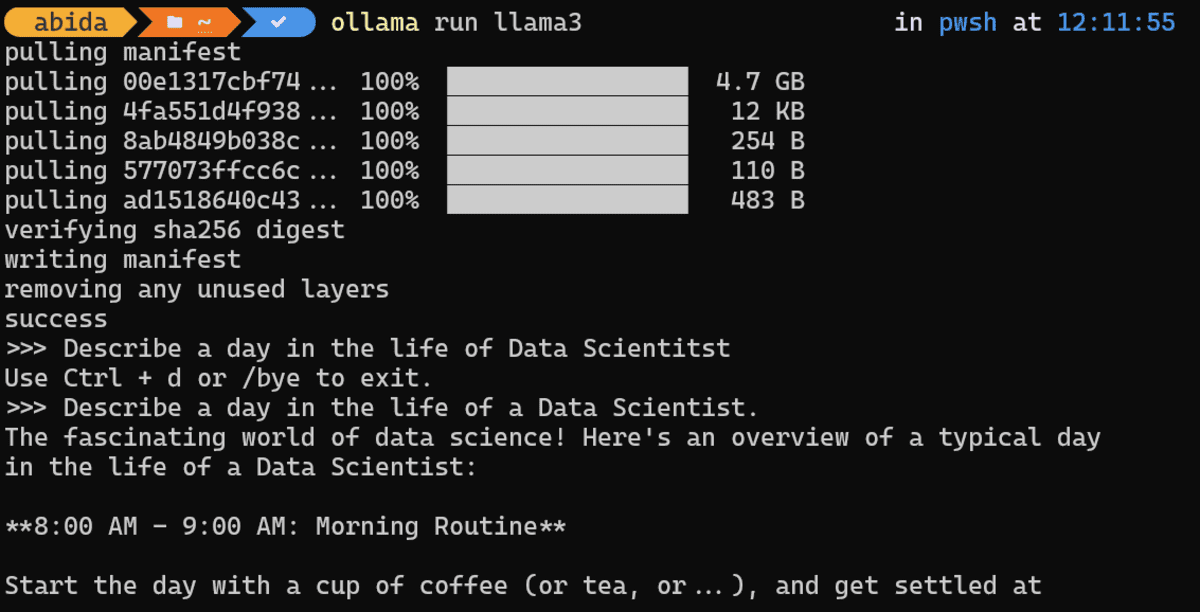

To download the Llama 3 model and start using it, type the following command in your terminal/shell: `ollama run llama3`

Depending on your internet speed, downloading the 4.7 GB model can take around 30 minutes.
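The 30-minute figure is easy to sanity-check: download time is roughly the file size in bits divided by the connection speed. A 4.7 GB file is about 37,600 megabits, so 30 minutes corresponds to a sustained speed of roughly 21 Mbit/s. The helper below (an illustrative name) does this arithmetic; the connection speeds in the example are assumptions, and real downloads also depend on server load and overhead.

```python
def download_minutes(size_gb: float, bandwidth_mbps: float) -> float:
    """Estimate download time in minutes.

    size_gb is in gigabytes (10**9 bytes) and bandwidth_mbps in megabits per
    second. Protocol overhead and speed fluctuations are ignored.
    """
    if bandwidth_mbps <= 0:
        raise ValueError("bandwidth_mbps must be positive")
    size_megabits = size_gb * 1000 * 8  # 1 GB = 1000 MB, 1 byte = 8 bits
    return size_megabits / bandwidth_mbps / 60


# Example connection speeds (assumed, for illustration).
for mbps in (10, 20, 100):
    print(f"{mbps:>4} Mbit/s: ~{download_minutes(4.7, mbps):.0f} min")
```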

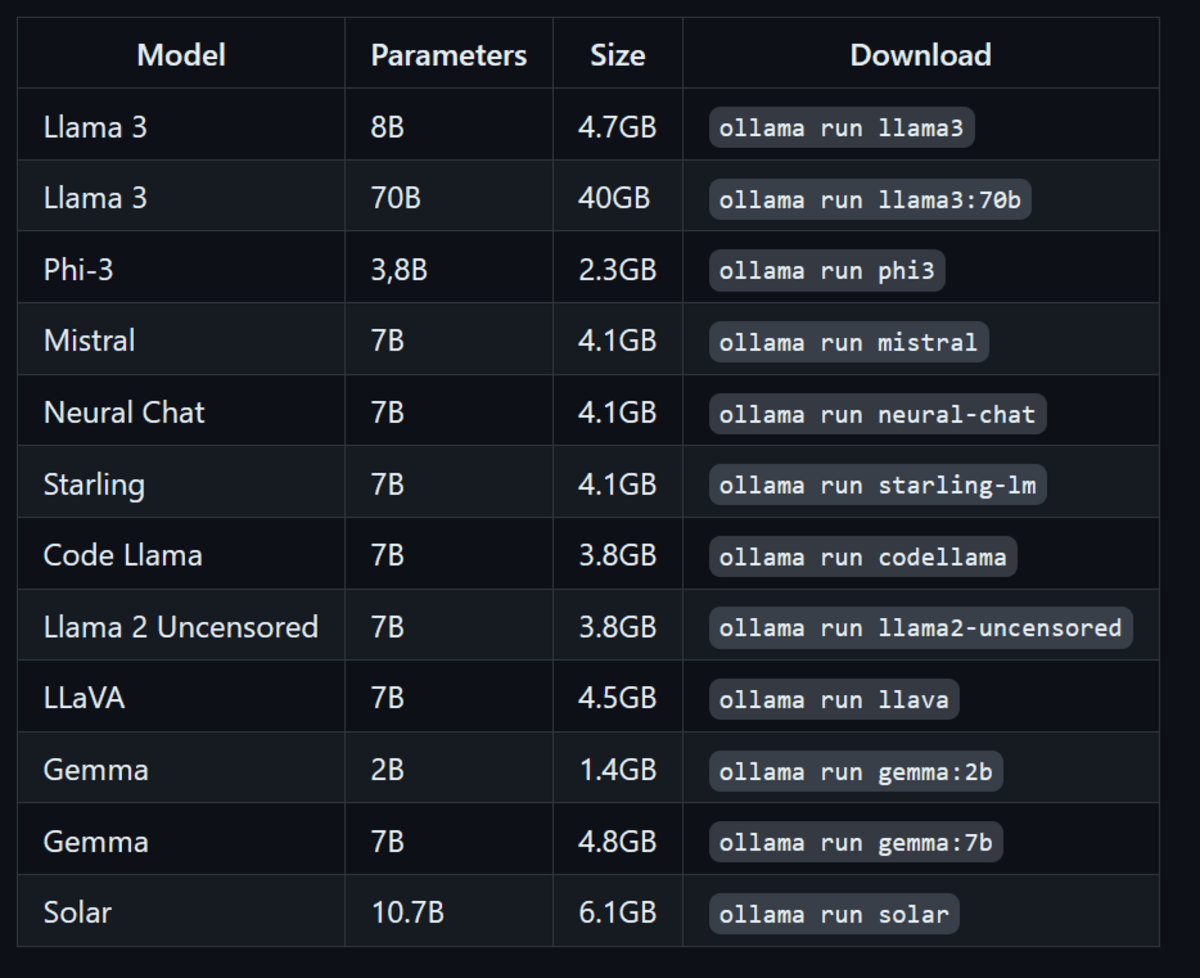

In addition to Llama 3, you can install and run other LLMs with the corresponding `ollama run <model>` commands listed in the Ollama repository.

Image from ollama/ollama | Running other LLMs with Ollama
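After downloading a few models, you can check which ones are stored locally with the `ollama list` command. The same information should be available programmatically from the local server's `/api/tags` endpoint. The sketch below assumes the default address `http://localhost:11434` and a response shaped like `{"models": [{"name": "llama3:latest", ...}, ...]}`; `parse_model_names` and `list_local_models` are illustrative helper names.

```python
import json
import urllib.request

# Assumption: the Ollama server is running on its default local port.
TAGS_URL = "http://localhost:11434/api/tags"


def parse_model_names(tags_response: dict) -> list[str]:
    """Extract model names (e.g. "llama3:latest") from an /api/tags response."""
    return [model["name"] for model in tags_response.get("models", [])]


def list_local_models() -> list[str]:
    """Ask the local Ollama server which models have been downloaded."""
    with urllib.request.urlopen(TAGS_URL) as response:
        return parse_model_names(json.load(response))
```

Keeping the parsing separate from the network call makes it easy to test without a running server.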

As soon as the download is complete, you will be able to use Llama 3 locally as if you were using it online.

Prompt: “Describe a day in the life of a data scientist.”

To demonstrate how fast response generation is, I've attached a GIF of Ollama generating Python code and then explaining it.
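Part of what makes the terminal feel fast is that the reply is streamed token by token rather than returned all at once. If you call the API yourself, `/api/generate` streams by default, sending one JSON object per line with a `response` text fragment and a final object marked `"done": true`. The sketch below assumes that format and the default local address; the function names are illustrative.

```python
import json
import urllib.request
from typing import Iterable, Iterator

# Assumption: Ollama's local server is listening on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def iter_stream_text(lines: Iterable[bytes]) -> Iterator[str]:
    """Yield text fragments from a streamed /api/generate response.

    Each non-empty line is a standalone JSON object; the last one has "done": true.
    """
    for raw in lines:
        line = raw.strip()
        if not line:
            continue  # skip blank lines defensively
        chunk = json.loads(line)
        yield chunk.get("response", "")
        if chunk.get("done"):
            break


def stream_generate(prompt: str, model: str = "llama3") -> str:
    """Print fragments as they arrive and return the full reply (needs a running server)."""
    payload = {"model": model, "prompt": prompt}  # streaming is on by default
    request = urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    parts = []
    with urllib.request.urlopen(request) as response:
        for text in iter_stream_text(response):  # the response iterates line by line
            print(text, end="", flush=True)
            parts.append(text)
    print()
    return "".join(parts)
```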

Note: If you have an Nvidia GPU in your laptop and CUDA installed, Ollama will automatically use the GPU instead of the CPU to generate responses, which is roughly 10 times faster.
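One way to check the GPU speed-up on your own hardware is to measure generation speed. When a request completes, Ollama's API includes statistics in the final response object, among them `eval_count` (tokens generated) and `eval_duration` (generation time in nanoseconds). The sketch below, with an illustrative function name and made-up numbers, turns those two fields into tokens per second so you can compare a GPU run against a CPU-only run.

```python
def tokens_per_second(final_response: dict) -> float:
    """Generation speed computed from Ollama's final response statistics.

    eval_count is the number of generated tokens and eval_duration is the
    generation time in nanoseconds.
    """
    duration_ns = final_response["eval_duration"]
    if duration_ns <= 0:
        raise ValueError("eval_duration must be positive")
    return final_response["eval_count"] / (duration_ns / 1e9)


# Made-up statistics for illustration: 300 tokens generated in 10 seconds.
example = {"eval_count": 300, "eval_duration": 10_000_000_000}
print(f"{tokens_per_second(example):.1f} tokens/s")  # prints "30.0 tokens/s"
```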

Prompt: “Write some Python code to build a digital clock.”

You can exit the chat by typing `/bye` and start a new session by running `ollama run llama3` again.
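The interactive session keeps going until you type `/bye`. If you want similar behavior inside your own script, a loop along the lines below could work. It is a sketch that assumes Ollama's `/api/chat` endpoint on the default local port, which receives the full message history on every request; `build_chat_payload` and `chat_loop` are illustrative names, and treating `/bye` as the exit command is a convention copied from the CLI.

```python
import json
import urllib.request

# Assumption: Ollama's chat endpoint on the default local address.
CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_payload(history: list[dict], user_message: str, model: str = "llama3") -> dict:
    """Return a non-streaming /api/chat payload: prior turns plus the new user message.

    The history list passed in is not modified.
    """
    messages = history + [{"role": "user", "content": user_message}]
    return {"model": model, "messages": messages, "stream": False}


def chat_loop(model: str = "llama3") -> None:
    """Minimal terminal chat that keeps context between turns; type /bye to quit."""
    history: list[dict] = []
    while True:
        user_message = input(">>> ")
        if user_message.strip() == "/bye":
            break
        payload = build_chat_payload(history, user_message, model)
        request = urllib.request.Request(
            CHAT_URL,
            data=json.dumps(payload).encode("utf-8"),
            headers={"Content-Type": "application/json"},
            method="POST",
        )
        with urllib.request.urlopen(request) as response:
            reply = json.load(response)["message"]  # {"role": "assistant", "content": ...}
        print(reply["content"])
        # Keep both sides of the conversation so the next turn has context.
        history = payload["messages"] + [reply]
```

Sending the whole history each time is what lets the model refer back to earlier turns; the trade-off is that requests grow as the conversation gets longer.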

Final thoughts

Open-source frameworks and models have made AI and LLMs accessible to everyone. Instead of AI being controlled by a few corporations, locally run tools like Ollama make it available to anyone with a laptop.

Running LLMs locally provides privacy, security, and more control over response generation. Plus, you don't have to pay for any service. You can even create your own AI-powered coding assistant and use it in VS Code.

To learn about other applications for running LLMs locally, read 5 Ways to Use LLM on Your Laptop.

Abid Ali Awan (@1abidaliawan) is a certified professional data scientist who loves building machine learning models. Currently, he focuses on content creation and writing technical blogs on data science and machine learning technologies. Abid has a master's degree in technology management and a bachelor's degree in telecommunications engineering. His vision is to build an artificial intelligence product using a graph neural network for students struggling with mental illness.