Visual language is the form of communication that relies on pictorial symbols outside of text to convey information. It is ubiquitous in our digital lives in the form of iconography, infographics, tables, diagrams and graphs, and it extends into the real world in street signs, comic books, food labels, etc. For that reason, having computers that better understand this type of media can help with scientific communication and discovery, accessibility, and data transparency.

While computer vision models have made great progress using learning-based solutions since the advent of ImageNet, the focus has been on natural images, where all kinds of tasks, such as classification, visual question answering (VQA), captioning, detection and segmentation, have been defined, studied and in some cases advanced to reach human performance. However, visual language has not received a similar level of attention, possibly because of the lack of large-scale training sets in this space. But over the last few years, new academic datasets have been created with the goal of evaluating question answering systems on visual language images, such as PlotQA, InfographicVQA and ChartQA.

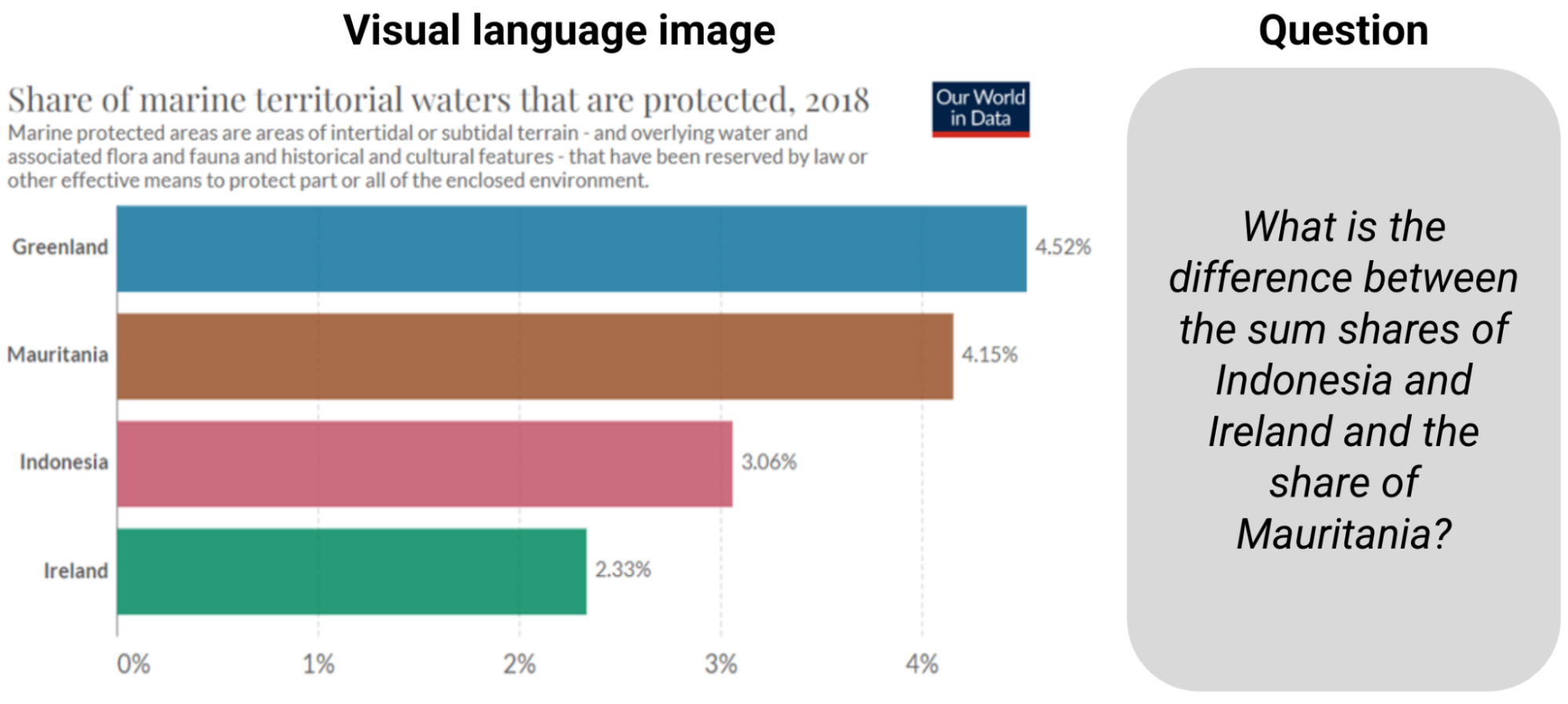

Example from ChartQA. Answering the question requires reading the information and computing the sum and the difference.

Existing models built for these tasks relied on integrating optical character recognition (OCR) output and its coordinates into larger pipelines, but this process is error prone, slow, and generalizes poorly. These methods were prevalent because existing end-to-end computer vision models based on convolutional neural networks (CNNs) or transformers pretrained on natural images could not be easily adapted to visual language. Yet existing models are ill-prepared for the challenges of answering questions about charts, which include reading the relative height of bars or the angle of slices in pie charts, understanding axis scales, correctly mapping pictograms to their legend values by color, size and texture, and finally performing numerical operations with the extracted numbers.

In light of these challenges, we propose "MatCha: Enhancing Visual Language Pretraining with Math Reasoning and Chart Derendering". MatCha, which stands for math and charts, is a pixels-to-text foundation model (a pretrained model with built-in inductive biases that can be fine-tuned for multiple applications) trained on two complementary tasks: (a) chart de-rendering and (b) math reasoning. In chart de-rendering, given a plot or chart, the image-to-text model is required to generate its underlying data table or the code used to render it. For math reasoning pretraining, we pick textual numerical reasoning datasets and render the input into images, which the image-to-text model needs to decode to produce the answers. We also propose "DePlot: One-shot visual language reasoning by plot-to-table translation", a model built on top of MatCha for one-shot reasoning on charts via translation to tables. With these methods we surpass the previous state of the art on ChartQA by more than 20% and match the best summarization systems that have 1000 times more parameters. Both papers will be presented at ACL 2023.

Chart de-rendering

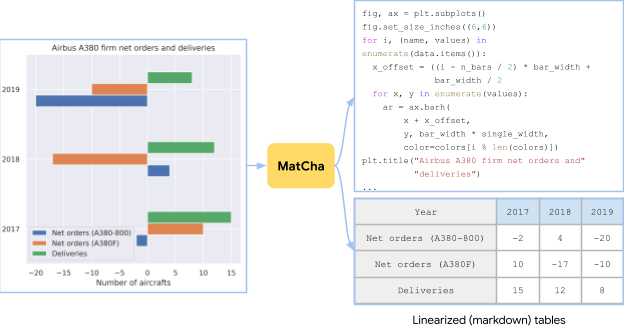

Plots and charts are usually generated from an underlying data table and a piece of code. The code defines the overall layout of the figure (e.g., type, direction, color/shape scheme), and the underlying data table establishes the actual numbers and their groupings. Both the data and the code are sent to a compiler/rendering engine to create the final image. To understand a chart, one needs to discover the visual patterns in the image and effectively parse and group them to extract the key information. Reversing the plot rendering process demands all of these capabilities and can therefore serve as an ideal pretraining task.

A chart created from a table in the Airbus A380 Wikipedia page using random plotting options. The pretraining task for MatCha consists of recovering the source table or the source code from the image.
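To make the de-rendering target concrete, here is a minimal sketch of how a data table could be flattened into a single output string for an image-to-text model and parsed back. The separators (`" | "` between cells, a literal `<0x0A>` token between rows) and the helper names `linearize_table` and `parse_table` are our own assumptions for illustration; the exact linearization used by MatCha may differ.

```python
from typing import List

# Assumed separators: " | " between cells and a literal "<0x0A>" token between
# rows. This is an illustrative choice, not necessarily MatCha's exact format.
CELL_SEP = " | "
ROW_SEP = " <0x0A> "


def linearize_table(header: List[str], rows: List[List[str]]) -> str:
    """Flatten a data table into one target string for an image-to-text model."""
    lines = [CELL_SEP.join(header)]
    lines.extend(CELL_SEP.join(str(cell) for cell in row) for row in rows)
    return ROW_SEP.join(lines)


def parse_table(text: str) -> List[List[str]]:
    """Invert linearize_table: split a decoded string back into rows of cells."""
    return [
        [cell.strip() for cell in line.split(CELL_SEP.strip())]
        for line in text.split(ROW_SEP.strip())
    ]
```

For example, a table with header `Category | Value` and rows `a, 1` and `b, 2` becomes `Category | Value <0x0A> a | 1 <0x0A> b | 2`. A fixed, reversible format like this is convenient because the decoded string can be turned back into a table for evaluation or handed to a downstream reader.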

In practice, it is challenging to simultaneously obtain charts, their underlying data tables, and their rendering code. To collect enough pretraining data, we independently accumulate [chart, code] and [chart, table] pairs. For [chart, code], we crawl all GitHub IPython notebooks with appropriate licenses and extract blocks with figures. A figure and the code block right before it are saved as a [chart, code] pair. For [chart, table] pairs, we explored two sources. For the first source, synthetic data, we manually write code to convert web-crawled Wikipedia tables from the TaPas codebase into charts. We sample and combine several plotting options depending on the column types. In addition, we also add [chart, table] pairs generated in PlotQA to diversify the pretraining corpus. The second source is web-crawled [chart, table] pairs: we directly use the [chart, table] pairs crawled for the ChartQA training set, which contains around 20k pairs in total from four websites: Statista, Pew, Our World in Data, and OECD.
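The [chart, code] extraction step can be sketched with the standard `json` and `base64` modules, assuming notebooks stored in the nbformat v4 JSON layout. The hypothetical helper `extract_chart_code_pairs` pairs every PNG figure with the source of the code cell that produced it, which is one reading of "the code block right before it"; crawling and license filtering are out of scope here.

```python
import base64
import json
from typing import List, Tuple


def extract_chart_code_pairs(notebook_json: str) -> List[Tuple[bytes, str]]:
    """Return (png_bytes, code) pairs from the JSON text of an .ipynb file.

    Assumes the nbformat v4 layout: a top-level "cells" list whose code cells
    carry "source" and "outputs"; images sit under output["data"]["image/png"]
    as base64 text.
    """
    nb = json.loads(notebook_json)
    pairs = []
    for cell in nb.get("cells", []):
        if cell.get("cell_type") != "code":
            continue  # markdown and raw cells never produce figures
        source = cell.get("source", "")
        # nbformat allows "source" to be either a string or a list of lines.
        code = "".join(source) if isinstance(source, list) else source
        for output in cell.get("outputs", []):
            png_b64 = output.get("data", {}).get("image/png")
            if png_b64:
                # Some writers split the base64 payload into a list of lines.
                if isinstance(png_b64, list):
                    png_b64 = "".join(png_b64)
                pairs.append((base64.b64decode(png_b64), code))
    return pairs
```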

Math reasoning

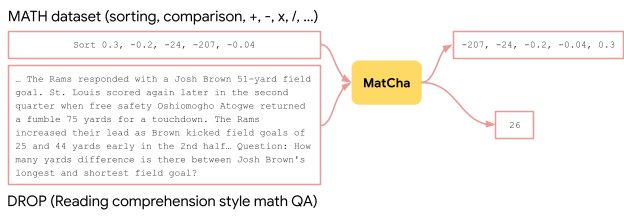

We incorporate numerical reasoning knowledge into MatCha by learning math reasoning skills from textual math datasets. We use two existing textual math reasoning datasets, MATH and DROP, for pretraining. MATH is synthetically created and contains two million training examples per module (type) of questions. DROP is a reading comprehension style QA dataset where the input is a paragraph context and a question.

To solve questions in DROP, the model needs to read the paragraph, extract relevant numbers and perform numerical computations. We found the two datasets to be complementary. MATH contains a large number of questions across different categories, which helps us identify the math operations that need to be explicitly injected into the model. DROP's reading comprehension format resembles the typical QA format, in which models simultaneously perform information extraction and reasoning. In practice, we render the inputs of both datasets into images, and the model is trained to decode the answer.

To improve MatCha's math reasoning skills, we incorporate examples from MATH and DROP into the pretraining objective by rendering the input text as images.
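Before a textual example can be rendered, its text has to be laid out. The sketch below uses a hypothetical helper, `drop_example_to_render_lines`, that joins a DROP-style passage and question into one input and wraps it to a fixed line width with `textwrap`. The `Question:` prefix and the 60-character default width are assumptions, and drawing the lines onto pixels (for example with an imaging library) is omitted.

```python
import textwrap
from typing import List, Tuple


def drop_example_to_render_lines(
    passage: str, question: str, answer: str, width: int = 60
) -> Tuple[List[str], str]:
    """Turn a DROP-style example into (lines to draw on an image, target text).

    Hypothetical helper: the passage and question are joined into a single
    input, then hard-wrapped so each line fits a fixed-width canvas. The
    answer stays as plain text because it is the decoding target.
    """
    text = f"{passage}\nQuestion: {question}"
    lines: List[str] = []
    for paragraph in text.split("\n"):
        # textwrap.wrap returns [] for an empty paragraph; keep a blank line instead.
        lines.extend(textwrap.wrap(paragraph, width=width) or [""])
    return lines, answer
```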

End-to-end results

We use a Pix2Struct model backbone, which is an image-to-text transformer tailored for website understanding, and pretrain it with the two tasks described above. We demonstrate the strengths of MatCha by fine-tuning it on several visual language tasks: question answering and summarization over charts and plots where the underlying table is not accessible. MatCha surpasses previous models by a large margin and also outperforms the prior state of the art, which assumes access to the underlying tables.

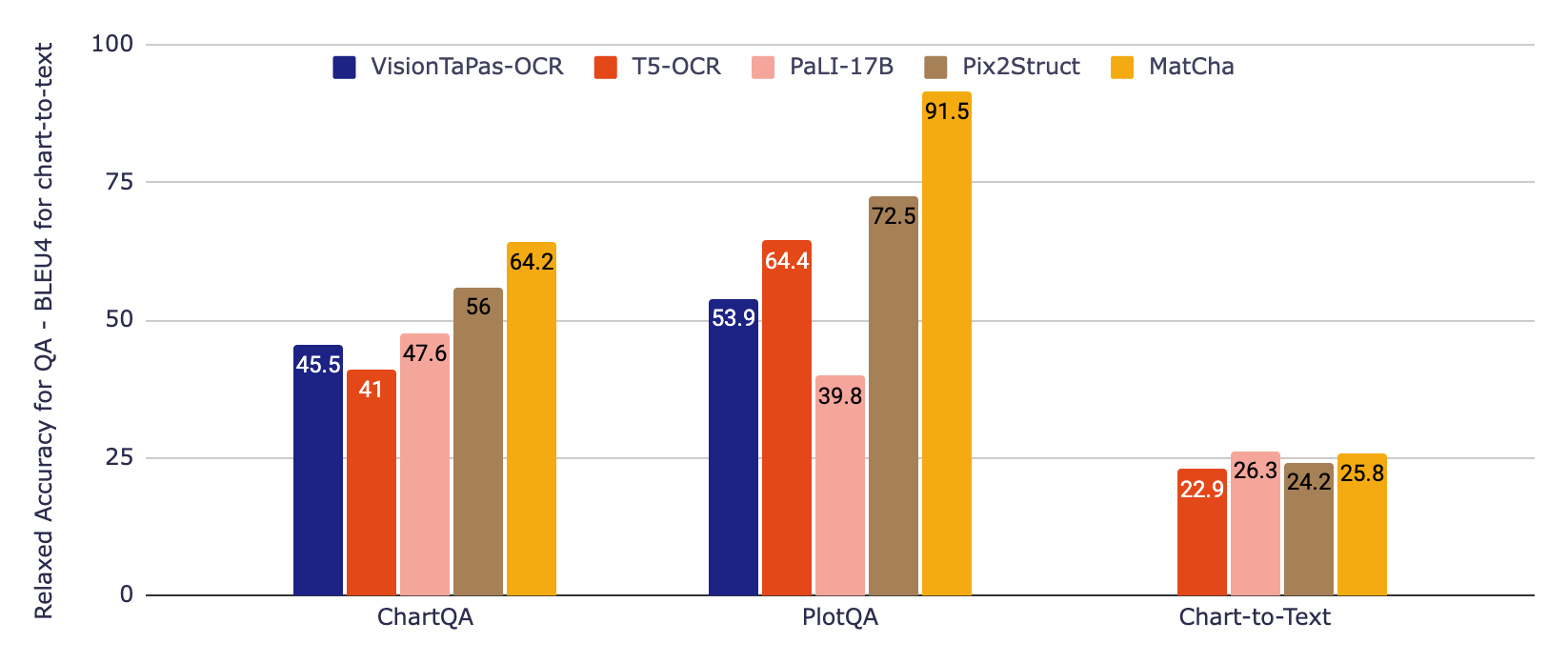

In the figure above, we first evaluate two baseline models that incorporate information from an OCR pipeline, which until recently was the standard approach for working with charts. The first is based on T5, the second on VisionTaPas. We also compare against PaLI-17B, a large (~1000 times larger than the other models) image-plus-text-to-text transformer trained on a diverse set of tasks but with limited capabilities for reading text and other forms of visual language. Finally, we report the Pix2Struct and MatCha model results.

For QA datasets, we use the official relaxed accuracy metric, which allows for small relative errors in numerical outputs. For chart-to-text summarization, we report BLEU scores. MatCha achieves noticeably better results than the baselines on question answering, and results comparable to PaLI on summarization, where PaLI's large size and extensive pretraining on captioning and long-text generation are advantageous for this kind of long-form text generation.
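The relaxed accuracy metric can be sketched as follows. We assume a 5% maximum relative error for numeric answers and a case-insensitive exact match for everything else; the threshold and the stripping of a trailing `%` are our assumptions, not a copy of the official evaluation script.

```python
from typing import List, Optional


def _to_float(text: str) -> Optional[float]:
    """Parse a numeric answer, tolerating a trailing percent sign; None if not numeric."""
    try:
        return float(text.strip().rstrip("%"))
    except ValueError:
        return None


def relaxed_match(prediction: str, target: str, max_relative_error: float = 0.05) -> bool:
    """Numeric answers may deviate by max_relative_error; others must match exactly."""
    pred_num, target_num = _to_float(prediction), _to_float(target)
    if pred_num is not None and target_num is not None:
        if target_num == 0.0:
            return pred_num == 0.0  # relative error is undefined for a zero target
        return abs(pred_num - target_num) / abs(target_num) <= max_relative_error
    return prediction.strip().lower() == target.strip().lower()


def relaxed_accuracy(predictions: List[str], targets: List[str]) -> float:
    """Fraction of predictions that relaxed-match their targets."""
    scores = [relaxed_match(p, t) for p, t in zip(predictions, targets)]
    return sum(scores) / len(scores)
```

With these settings, `"104"` counts as correct for a target of `"100"` while `"106"` does not, which is why small reading errors on bar heights are not penalized.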

Chaining de-rendering with large language models

While fine-tuned MatCha models are extremely strong for their number of parameters, particularly on extractive tasks, we observed that they can still struggle with complex end-to-end reasoning (for example, math operations involving large numbers or multiple steps). We therefore also propose a two-step method to address this: 1) a model reads a chart and outputs the underlying table, then 2) a large language model (LLM) reads this output and tries to answer the question based solely on the textual input.
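The two-step method can be sketched as plain function composition. In this minimal sketch, `chart_to_table` stands in for a DePlot-style model and `llm` for any text-only LLM; both are hypothetical callables supplied by the caller, and the one-shot exemplar in `EXEMPLAR` is invented purely to show a prompt layout, not the prompt used in the paper.

```python
from typing import Callable

# Hypothetical one-shot exemplar: the table, question and answer are made up
# to show the prompt format and are not taken from any dataset.
EXEMPLAR = (
    "Read the table below to answer the question.\n"
    "Category | Value\n"
    "a | 3\n"
    "b | 5\n"
    "Question: What is the sum of the values of a and b?\n"
    "Answer: 3 + 5 = 8. The answer is 8.\n"
)


def build_prompt(table: str, question: str) -> str:
    """Compose a one-shot prompt: the exemplar first, then the new table and question."""
    return (
        EXEMPLAR
        + "\nRead the table below to answer the question.\n"
        + table.strip()
        + f"\nQuestion: {question}\nAnswer:"
    )


def answer_chart_question(
    image: bytes,
    question: str,
    chart_to_table: Callable[[bytes], str],
    llm: Callable[[str], str],
) -> str:
    """Two-step pipeline: de-render the chart to text, then let an LLM reason over it."""
    table = chart_to_table(image)  # step 1: e.g. a DePlot-style model
    prompt = build_prompt(table, question)
    return llm(prompt)  # step 2: the LLM only ever sees text
```

Because the LLM only sees text, it can be swapped for a different one without retraining the chart reader.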

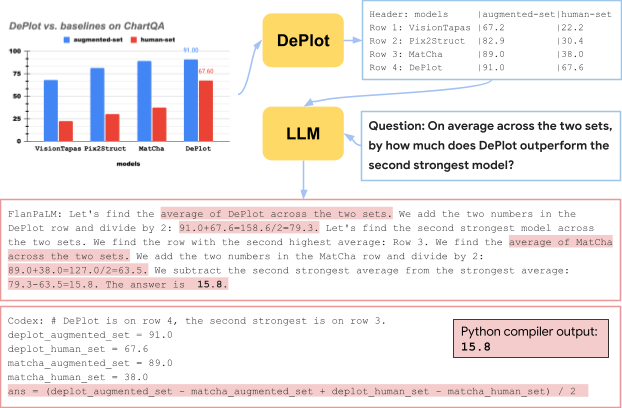

For the first model, we fine-tuned MatCha solely on the chart-to-table task, increasing the output sequence length to ensure it could recover all or most of the information in the chart. DePlot is the resulting model. In the second stage, any LLM (such as FlanPaLM or Codex) can be used for the task, and we can rely on standard methods for improving LLM performance, for example chain-of-thought and self-consistency. We also experimented with program-of-thoughts, where the model produces executable Python code to offload complex computations.

An illustration of the DePlot+LLM method. This is a real example using FlanPaLM and Codex. The blue boxes are input to the LLM and the red boxes contain the answers generated by the LLMs. We highlight some of the key reasoning steps in each answer.
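Program-of-thoughts and self-consistency combine naturally: sample several programs from the LLM, execute each one, and take a majority vote over their results. The sketch below assumes each generated program stores its result in a variable named `ans` (a convention we chose, not necessarily the one used in the paper) and calls `exec` without any sandboxing, so it is only suitable for illustration.

```python
from collections import Counter
from typing import List, Optional


def run_program(program: str) -> Optional[str]:
    """Execute one LLM-generated Python program and return its `ans` variable.

    Assumption: the prompt asks the model to store its final result in `ans`.
    exec() runs arbitrary code, so a real system would sandbox it; this does not.
    """
    namespace: dict = {}
    try:
        exec(program, namespace)
    except Exception:
        return None  # a program that crashes simply casts no vote
    ans = namespace.get("ans")
    return None if ans is None else str(ans)


def self_consistent_answer(programs: List[str]) -> Optional[str]:
    """Majority vote over the answers produced by several sampled programs."""
    answers = [a for a in (run_program(p) for p in programs) if a is not None]
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]
```

For example, if three sampled programs compute `sum([10, 12])`, `10 + 12` and `10 * 12`, the vote returns `"22"`. A fuller version would also normalize answers (for instance `22` versus `22.0`) before voting.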

As the DePlot+LLM illustration shows, DePlot combined with an LLM outperforms fine-tuned models by a significant margin, especially on the human-sourced portion of ChartQA, where the questions are more natural but demand more difficult reasoning. Furthermore, DePlot+LLM achieves this without access to any training data.

We have released the new models and code in our GitHub repository, where you can try them out yourself in Colab. Check out the MatCha and DePlot papers for more details on the experimental results. We hope that our results can benefit the research community and make the information in charts and plots more accessible to everyone.

Acknowledgements

This work was done by Fangyu Liu, Julian Martin Eisenschlos, Francesco Piccinno, Syrine Krichene, Chenxi Pang, Kenton Lee, Mandar Joshi, Wenhu Chen, and Yasemin Altun from our language team as part of Fangyu’s internship project. Nigel Collier from Cambridge was also a contributor. We would like to thank Joshua Howland, Alex Polozov, Shrestha Basu Mallick, Massimo Nicosia, and William Cohen for their valuable comments and suggestions.