differential privacy Machine learning (DP) algorithms protect user data by limiting the effect of each data point in an aggregated output with a mathematical guarantee. Intuitively, the guarantee implies that changing the contribution of a single user should not significantly change the output distribution of the DP algorithm.

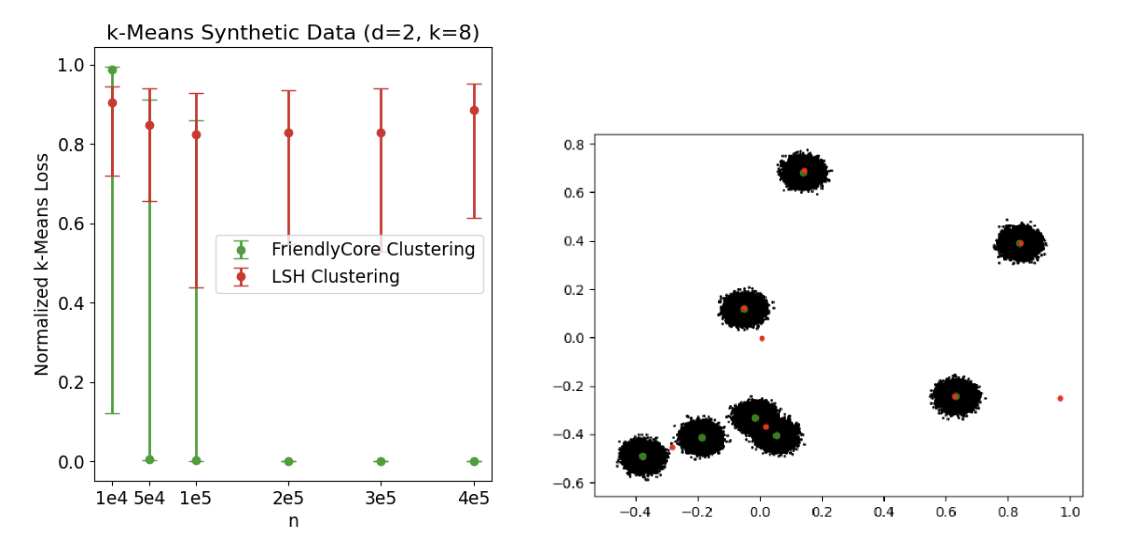

However, DP algorithms tend to be less accurate than their non-private counterparts because satisfying DP is a requirement. worst of cases requirement: one has to add noise to “hide” the changes at any potential entry point, including “unlikely points” that have a significant impact on aggregation. For example, suppose we want to privately estimate the average of a data set and we know that a sphere of diameter, Λ, contains all possible data points.The sensitivity of the mean to a single point is bounded by Λ, so it is enough to add noise proportional to Λ to each coordinate of the mean to ensure the DP.

|

| A sphere of diameter Λ containing all possible data points. |

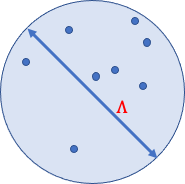

Now suppose that all the data points are “friendly”, meaning that they are close together and each affects the average at 𝑟 at most, which is much less than Λ. Still, the traditional way of guaranteeing the DP requires adding proportional noise to Λ to account for a neighboring data set that contains an extra “unsympathetic” point that is unlikely to be sampled.

|

| Two adjacent data sets that differ by a single outlier. A DP algorithm would have to add noise proportional to Λ to each coordinate to hide this outlier. |

In “FriendlyCore: practical differential private aggregation“, featured in ICML 2022, we present a general framework for calculating differential private aggregations. The FriendlyCore framework preprocesses the data, extracting a “friendly” subset (the core), and consequently reduces the private aggregation error seen with traditional DP algorithms. The private aggregation step adds less noise since we don’t need to account for hostile points that negatively impact aggregation.

In the average example, we first apply friendly to remove outliers, and in the aggregation step, we add noise proportional to 𝑟 (not Λ). The challenge is to make our general algorithm (outlier removal + aggregation) differentially private. This constrains our outlier removal scheme and stabilizes the algorithm so that two adjacent inputs that differ by only one point (outlier or not) will produce any (friendly) result with similar probabilities.

Framework FriendlyCore

We begin by formalizing when considering a data set friendly, which depends on the type of aggregation needed and should capture data sets for which the sensitivity of the aggregate is small. For example, if the aggregate is averaging, the term friendly you should capture data sets with a small diameter.

To abstract the particular application, we define friendship using a predicate 𝑓 that is positive at the points 𝑥 and 𝑦 if they are “close” to each other. For example, in the averaging application, 𝑥 and 𝑦 are close if the distance between them is less than 𝑟. We say that a data set is friendly (for this predicate) if every pair of points 𝑥 and 𝑦 is close to a third point 𝑧 (not necessarily in the data).

Once we have fixed 𝑓 and defined when a data set is friendly, two tasks remain. First, we built the FriendlyCore algorithm which extracts a large friendly subset (the kernel) of the input in a stable manner. friendly is a filter that satisfies two requirements: (1) it has to remove outliers to keep only items that are close to many others in the kernel, and (2) for neighboring data sets that differ by only one item, 𝑦 , the filter generates each element except 𝑦 with almost the same probability. Furthermore, the union of kernels drawn from these neighboring data sets is friendly.

The underlying idea friendly is simple: the probability that we add a point, 𝑥, to the kernel is a monotonic and stable function of the number of elements close to 𝑥. In particular, if 𝑥 is close to all other points, it is not considered an outlier and can be held in the kernel with probability 1.

Second, we develop the friendly DP algorithm that satisfies a weaker notion of privacy by adding less noise to the aggregate. This means that the aggregation results are guaranteed to be similar only for the neighboring data sets 𝐶 and 𝐶’, so that the Union of 𝐶 and 𝐶’ is friendly.

Our main theorem states that if we apply a kernel-friendly DP aggregation algorithm produced by a filter with the requirements listed above, then this composition is differentially private in the normal sense.

Clustering and other applications

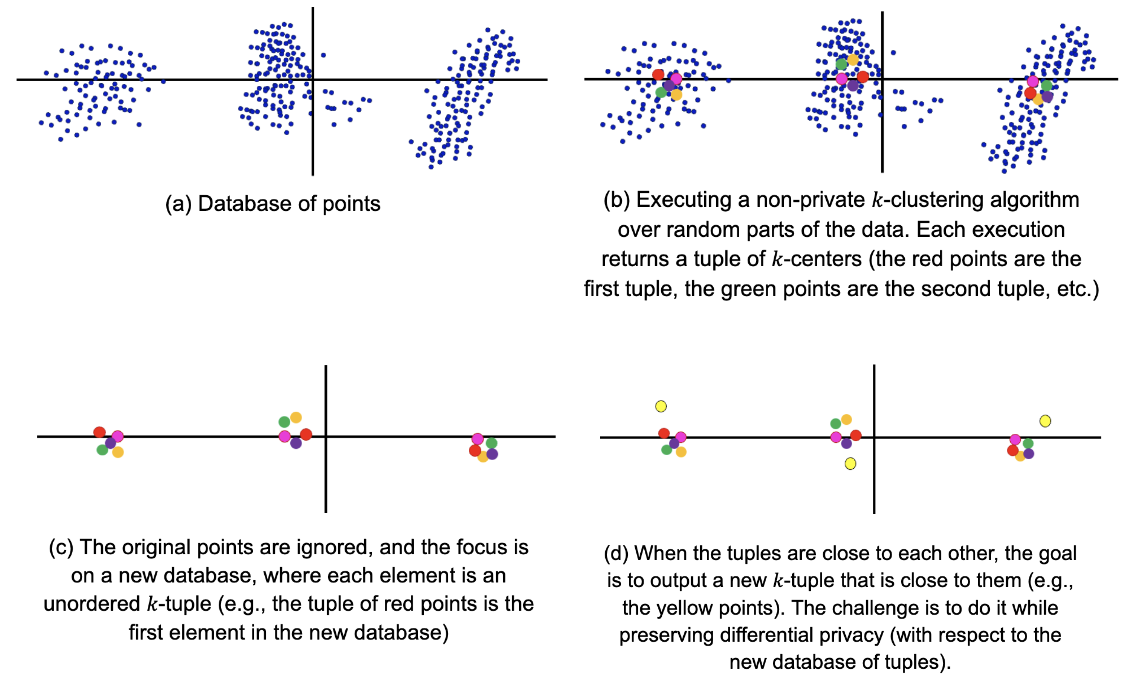

Other applications of our aggregation method are grouping and learning the covariance matrix of a Gaussian distribution. Consider using FriendlyCore to develop differential privacy k-means clustering algorithm. Given a database of points, we split it into smaller random subsets of the same size and run a good No-private what-means clustering algorithm on each small set. If the original data set contains what large groups, each smaller subset will contain a significant fraction of each of these what clusters It follows that the tuples (ordered sets) of what-the centers that we obtain from the non-private algorithm for each small subset are similar. This tuple dataset is expected to have a large friendly kernel (for a proper definition of closeness).

|

We use our framework to add the tuples resulting from what-centers (what-tuples). We define two such what-tuples to be close if there is a match between them such that one center is substantially closer to its partner than to any other center.

|

| In this image, any pair of red, blue, and green tuples are close to each other, but neither pair is close to the pink tuple. So our filter removes the pink tuple and it is not in the kernel. |

We then extract the core using our generic sampling scheme and add it using the following steps:

- pick one at random what-tuple 𝑇 of the kernel.

- Divide the data by placing each point on a cube according to its nearest center in 𝑇.

- Privately average the points in each cube to get our final result. what-centers.

Empirical results

Below are the empirical results of our algorithms based on friendly. We implement them in the Zero Concentrated Differential Privacy (zCDP), which provides greater accuracy in our environment (with privacy guarantees similar to those of the best known (𝜖, 𝛿)-DP).

average

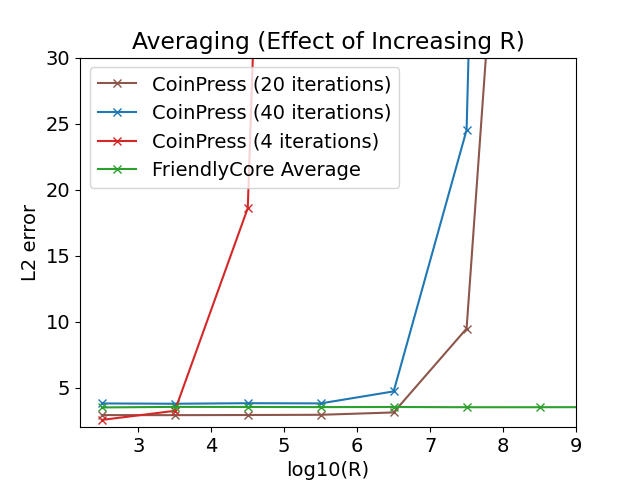

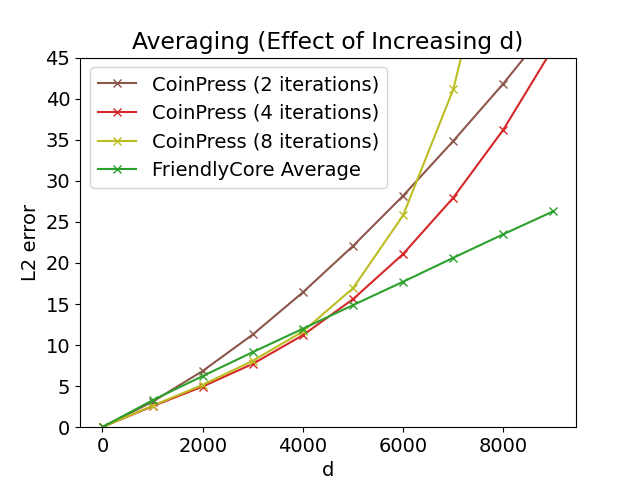

We test the mean estimate of 800 samples of a spherical Gaussian with a a stranger mean. We compare it with the algorithm CoinPress. Unlike FriendlyCore, CoinPress requires an upper bound 𝑅 on the norm of the mean. The following figures show the effect on precision of increasing 𝑅 or the dimension 𝑑. Our averaging algorithm works best with large values of these parameters, since it is independent of 𝑅 and 𝑑.

|

|

| Left: Averaging over 𝑑= 1000, varying 𝑅. Good: Averaging with 𝑅= √𝑑, varying 𝑑. |

Group

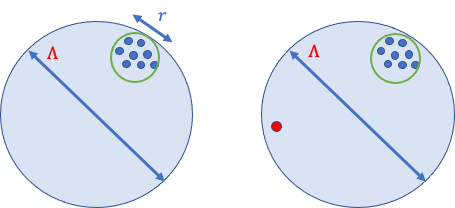

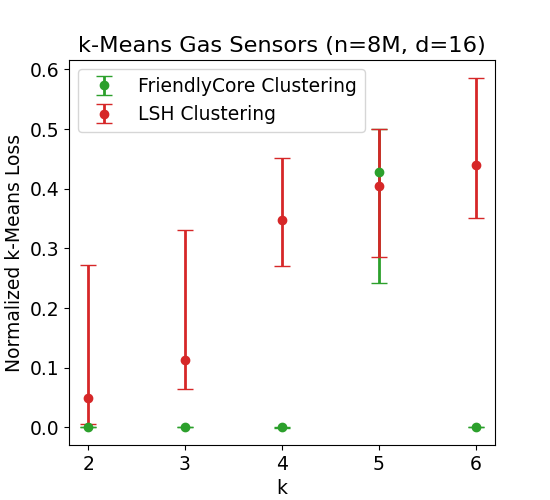

We tested the performance of our private clustering algorithm for what-half. We compare it with Chung and Kamath’s algorithm which is based on recursive locality sensitive hashing (LSH pool). For each experiment, we performed 30 replicates and presented the medians together with the 0.1 and 0.9 quantiles. In each iteration, we normalize the losses by the loss of k-means++ (where a smaller number is better).

The following figure on the left compares the what-mean results over a uniform mixture of eight Gaussians separated in two dimensions. For small values of 𝑛 (the number of samples in the mix), FriendlyCore often fails, giving inaccurate results. However, increasing 𝑛 increases the probability of success of our algorithm (because the generated tuples become closer to each other) and produces very accurate results, while LSH clustering lags behind.

FriendlyCore also works well on large data sets, even without a clear separation into groups. we use the Fonollosa and Huerta gas sensor data set containing 8 million rows, consisting of a 16-dimensional point defined by the measurements of 16 sensors at a given time. We compare clustering algorithms for different what. FriendlyCore works fine except for what= 5 where it fails due to the instability of the non-private algorithm used by our method (there are two different solutions for what= 5 with a similar cost that makes our approach fail since we don’t get a set of tuples that are close to each other).

|

| what-means the results of gas sensor measurements over time, varying what. |

Conclusion

friendly is a general framework for filtering metric data before aggregating it privately. The filtered data is stable and makes the aggregation less sensitive, allowing us to increase its accuracy with DP. Our algorithms outperform proprietary algorithms designed for averaging and clustering, and we believe this technique can be useful for additional aggregation tasks. Initial results show that it can effectively reduce utility loss when we implement DP aggregations. For more information, and to see how we apply it to estimate the covariance matrix of a Gaussian distribution, see our paper.

Thanks

This work was directed by Eliad Tsfadia in collaboration with Edith Cohen, Haim Kaplan, Yishay Mansour, Uri Stemmer, Avinatan Hassidim, and Yossi Matias.