In the field of artificial intelligence and natural language processing, reasoning in large contexts has emerged as a crucial area of research. As the volume of information that needs to be processed increases, machines must be able to efficiently synthesize and extract relevant data from massive data sets. This goes beyond simple retrieval tasks, as it requires models to locate specific pieces of information and understand complex relationships within vast contexts. The ability to reason in these large contexts is essential for functions such as document summarization, code generation, and large-scale data analysis – all of which are critical to advances in ai.

A key challenge facing researchers is the need for more effective tools to assess the understanding of extended contexts in large language models. Most existing methods focus on retrieval, where the task is limited to finding a single piece of information in a broad context, similar to finding a needle in a haystack. However, retrieval alone does not fully test a model’s ability to understand and synthesize information from large data sets. As data complexity increases, it is critical to measure the ability of models to process and connect scattered pieces of information rather than relying on simple retrieval.

Current methods are inadequate because they often measure isolated retrieval capabilities rather than the more complex ability to synthesize relevant information from a large, continuous stream of data. One popular method, called the needle-in-the-haystack task, assesses how well models can find a specific piece of data. However, this approach does not test the model's ability to understand and process multiple related data points, leading to limitations in assessing its true reasoning potential in broad contexts. While they provide some insight into the capabilities of these models, recent benchmarks have been criticized for their limited scope and inability to measure deep reasoning in broad contexts.

Researchers at Google DeepMind and Google Research have introduced a new evaluation method called MichelangeloThis innovative framework tests long-context reasoning on models using synthetic, unfiltered data, ensuring that evaluations are challenging and relevant. The Michelangelo framework focuses on understanding long contexts through a system called Latent Structure Queries (LSQ), which enables the model to reveal hidden structures within a large context by discarding irrelevant information. The researchers aim to assess how well models can synthesize information from scattered data points across a large dataset rather than simply retrieving isolated details. Michelangelo introduces a new test suite that significantly improves on the traditional “looking for a needle in a haystack” approach to information retrieval.

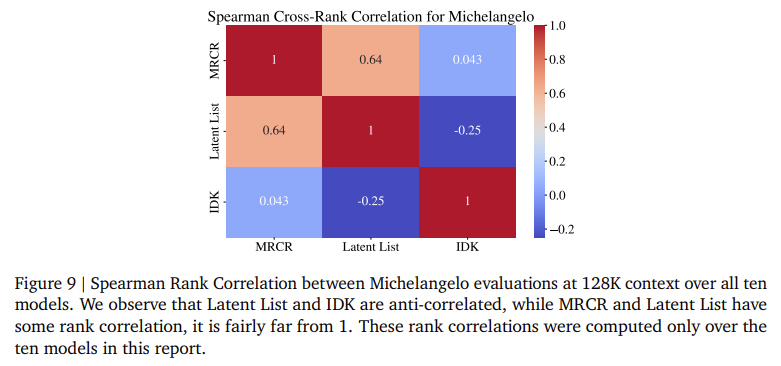

The Michelangelo framework comprises three main tasks: latent list, multi-round coreference resolution (MRCR), and the IDK task. The latent list task involves presenting a sequence of Python operations to the model, requiring it to keep track of changes to a list and determine specific outcomes such as sums, minima, or lengths after multiple list modifications. This task is designed with increasing complexity, from simple one-step operations to sequences involving up to 20 relevant modifications. MRCR, on the other hand, challenges models to handle complex conversations by reproducing key pieces of information embedded in a long dialogue. The IDK task tests the model’s ability to identify when it does not have enough information to answer a question. It is crucial to ensure that models do not produce inaccurate results based on incomplete data.

In terms of performance, the Michelangelo framework provides detailed insights into how well current frontier models handle long context reasoning. Evaluations on models such as GPT-4, Claude 3, and Gemini reveal notable differences. For example, all models experienced a significant drop in accuracy when dealing with tasks involving more than 32,000 tokens. At this threshold, models such as GPT-4 and Claude 3 showed steep drops, with cumulative average scores dropping from 0.95 to 0.80 for GPT-4 on the MRCR task as the number of tokens increased from 8000 to 128,000. Claude 3.5 Sonnet showed similar performance, with scores dropping from 0.85 to 0.70 over the same token range. Interestingly, Gemini models performed better in longer contexts: the Gemini 1.5 Pro model achieved undiminished performance up to 1 million tokens on both the MRCR and Latent List tasks, outperforming other models by maintaining a cumulative score above 0.80.

In conclusion, the Michelangelo framework provides a much-needed improvement in assessing long-context reasoning in large language models. By shifting the focus from simple retrieval to more complex reasoning tasks, this framework challenges models to perform at a higher level, synthesizing information across large datasets. This evaluation shows that while current models such as GPT-4 and Claude 3 struggle with long-context tasks, models such as Gemini demonstrate potential to maintain performance even with extensive data. The research team’s introduction of the Latent Structure Queries framework and fine-grained tasks within Michelangelo push the boundaries of measuring long-context understanding and highlight the challenges and opportunities in advancing ai reasoning capabilities.

Take a look at the PaperAll credit for this research goes to the researchers of this project. Also, don't forget to follow us on twitter.com/Marktechpost”>twitter and join our Telegram Channel and LinkedIn GrAbove!. If you like our work, you will love our fact sheet..

Don't forget to join our SubReddit of over 50,000 ml

FREE ai WEBINAR: 'SAM 2 for Video: How to Optimize Your Data' (Wednesday, September 25, 4:00 am – 4:45 am EST)

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary engineer and entrepreneur, Asif is committed to harnessing the potential of ai for social good. His most recent initiative is the launch of an ai media platform, Marktechpost, which stands out for its in-depth coverage of machine learning and deep learning news that is technically sound and easily understandable to a wide audience. The platform has over 2 million monthly views, illustrating its popularity among the public.

<script async src="//platform.twitter.com/widgets.js” charset=”utf-8″>