Introduction

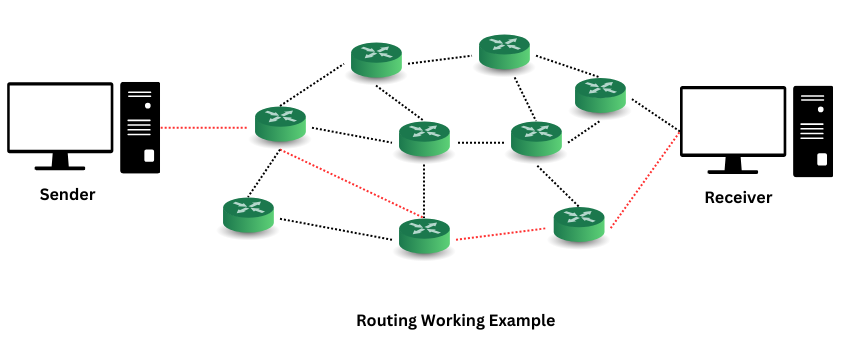

In today’s rapidly evolving landscape of large language models, each model comes with its unique strengths and weaknesses. For example, some LLMs excel at generating creative content, while others are better at factual accuracy or specific domain expertise. Given this diversity, relying on a single LLM for all tasks often leads to suboptimal results. Instead, we can leverage the strengths of multiple LLMs by routing tasks to the models best suited for each specific purpose. This approach, known as LLM routing, allows us to achieve higher efficiency, accuracy, and performance by dynamically selecting the right model for the right task.

LLM routing directs each task to the model best suited to handle it. Because models differ in capability and speed, a good router improves output quality while reducing resource consumption and latency. Efficient routing is also crucial for scalability, since it lets a system manage large volumes of requests while maintaining high performance. This blog explores several routing strategies and provides code examples that demonstrate their implementation.

Learning Outcomes

- Understand the concept of LLM routing and its importance.

- Explore various routing strategies: static, dynamic, and model-aware.

- Implement routing mechanisms using Python code examples.

- Examine advanced routing techniques such as hashing and contextual routing.

- Discuss load-balancing strategies and their application in LLM environments.

This article was published as a part of the Data Science Blogathon.

Routing Strategies for LLMs

Routing strategies in the context of LLMs are critical for optimizing model selection and ensuring that tasks are processed efficiently and effectively. By using static routing methods like round-robin, developers can ensure a balanced task distribution, but these methods lack the adaptability needed for more complex scenarios. Dynamic routing offers a more responsive solution by adjusting to real-time conditions, while model-aware routing takes this a step further by considering the specific strengths and weaknesses of each LLM. Throughout this section, we will consider three prominent LLMs, each accessible via API:

- GPT-4 (OpenAI): Known for its versatility and high accuracy across a wide range of tasks, particularly in generating detailed and coherent text.

- Gemini (Google, formerly Bard): Excels in providing concise, informative responses, particularly in factual queries, and integrates well with Google’s vast knowledge graph.

- Claude (Anthropic): Focuses on safety and ethical considerations, making it ideal for tasks requiring careful handling of sensitive content.

These models have distinct capabilities, and we’ll explore how to route tasks to the appropriate model based on the task’s specific requirements.

Static vs. Dynamic Routing

Static and dynamic routing differ in whether the routing rule reacts to what is happening at run time.

Static Routing:

Static routing involves predetermined rules for distributing tasks among the available models. One common static routing strategy is round-robin, where tasks are assigned to models in a fixed order, regardless of their content or the models’ current performance. While simple, this approach can be inefficient when the models have varying strengths and workloads.

Dynamic Routing:

Dynamic routing adapts to the system’s current state and the specific characteristics of each task. Instead of using a fixed order, dynamic routing makes decisions based on real-time data, such as the task’s requirements, the current load on each model, and past performance metrics. This approach ensures that tasks are routed to the model most likely to deliver the best results.

Code Example: Implementation of Static and Dynamic Routing in Python

Here’s an example of how you might implement static and dynamic routing using API calls to these three LLMs:

    import random
    import requests

    # API endpoints for the different LLMs.
    # NOTE: illustrative placeholders. Each provider has its own URL, auth
    # headers, and request schema; check the official docs before calling them.
    API_URLS = {
        "GPT-4": "https://api.openai.com/v1/completions",
        "Gemini": "https://api.google.com/gemini/v1/query",
        "Claude": "https://api.anthropic.com/v1/completions",
    }

    # API keys (replace with actual keys)
    API_KEYS = {
        "GPT-4": "your_openai_api_key",
        "Gemini": "your_google_api_key",
        "Claude": "your_anthropic_api_key",
    }

    def call_llm(api_name, prompt):
        """Send the prompt to the chosen LLM and return the parsed JSON reply."""
        url = API_URLS[api_name]
        headers = {
            "Authorization": f"Bearer {API_KEYS[api_name]}",
            "Content-Type": "application/json",
        }
        data = {"prompt": prompt, "max_tokens": 100}
        response = requests.post(url, headers=headers, json=data)
        return response.json()

    # Static round-robin routing
    def round_robin_routing(task_queue):
        llm_names = list(API_URLS.keys())
        idx = 0
        while task_queue:
            task = task_queue.pop(0)
            llm_name = llm_names[idx]
            response = call_llm(llm_name, task)
            print(f"{llm_name} is processing task: {task}")
            print(f"Response: {response}")
            idx = (idx + 1) % len(llm_names)  # Cycle through the LLMs

    # Dynamic routing based on load or other factors
    def dynamic_routing(task_queue):
        while task_queue:
            task = task_queue.pop(0)
            # For simplicity, randomly select an LLM to simulate load-based routing.
            # In practice, you'd select based on real-time metrics.
            best_llm = random.choice(list(API_URLS.keys()))
            response = call_llm(best_llm, task)
            print(f"{best_llm} is processing task: {task}")
            print(f"Response: {response}")

    # Sample task queue
    tasks = [
        "Generate a creative story about a robot",
        "Provide an overview of the 2024 Olympics",
        "Discuss ethical considerations in AI development",
    ]

    # Static routing (pass a copy so the original list is not emptied)
    print("Static Routing (Round Robin):")
    round_robin_routing(tasks[:])

    # Dynamic routing
    print("\nDynamic Routing:")
    dynamic_routing(tasks[:])

In this example, the round_robin_routing function assigns tasks to the three LLMs in a fixed order, while dynamic_routing picks an LLM at random as a stand-in for dynamic task assignment. In a real implementation, dynamic routing would consider metrics like current load, response time, or model-specific strengths to choose the most appropriate LLM.
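As a rough illustration of metric-based selection, the sketch below replaces the random choice with a simple load estimate. The `ModelStats` class, the `pick_model` helper, the scoring heuristic, and all the numbers are hypothetical; a real system would feed in values from its own monitoring.

```python
from dataclasses import dataclass


@dataclass
class ModelStats:
    """Hypothetical live metrics for one LLM backend."""
    in_flight: int = 0          # requests currently being processed
    avg_latency_s: float = 1.0  # recent average response time in seconds


def pick_model(stats):
    """Return the model with the lowest estimated wait.

    The estimate (in_flight + 1) * avg_latency_s is an assumed heuristic:
    queue length (including the new request) times typical service time.
    """
    return min(
        stats,
        key=lambda name: (stats[name].in_flight + 1) * stats[name].avg_latency_s,
    )


# Made-up snapshot of the current system state
stats = {
    "GPT-4": ModelStats(in_flight=4, avg_latency_s=2.0),
    "Gemini": ModelStats(in_flight=1, avg_latency_s=1.5),
    "Claude": ModelStats(in_flight=0, avg_latency_s=2.5),
}

choice = pick_model(stats)
stats[choice].in_flight += 1  # record the newly dispatched request
print(f"Routing next task to {choice}")
```

Because the chosen model's in-flight count is incremented after each dispatch, repeated calls naturally spread work away from busy models.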

Expected Output from Static Routing

    Static Routing (Round Robin):
    GPT-4 is processing task: Generate a creative story about a robot
    Response: {'text': 'Once upon a time...'}
    Gemini is processing task: Provide an overview of the 2024 Olympics
    Response: {'text': 'The 2024 Olympics will be held in...'}
    Claude is processing task: Discuss ethical considerations in AI development
    Response: {'text': 'AI development raises several ethical issues...'}

Explanation: The tasks are processed sequentially by GPT-4, Gemini, and Claude, in that order. The responses shown are illustrative placeholders; real API responses have a provider-specific structure. This static method doesn’t consider the nature of each task; it just follows the round-robin sequence.

Expected Output from Dynamic Routing

    Dynamic Routing:
    Claude is processing task: Generate a creative story about a robot
    Response: {'text': 'Once upon a time...'}
    Gemini is processing task: Provide an overview of the 2024 Olympics
    Response: {'text': 'The 2024 Olympics will be held in...'}
    GPT-4 is processing task: Discuss ethical considerations in AI development
    Response: {'text': 'AI development raises several ethical issues...'}

Explanation: Tasks are assigned to randomly chosen LLMs, which simulates a dynamic routing process. Because of the random selection, each run could yield a different assignment of tasks to LLMs.

Understanding Model-Aware Routing

Model-aware routing enhances the dynamic routing strategy by incorporating the specific characteristics of each model. For instance, a creative-story task might go to GPT-4 for its strong generative capabilities, a fact-based query to Gemini for its integration with Google’s knowledge base, and a task that requires careful handling of sensitive or ethical issues to Claude.

Techniques for Profiling Models

To implement model-aware routing, you must first profile each model. This involves collecting data on their performance across different tasks. For example, you might measure response times, accuracy, creativity, and ethical content handling. This data can be used to make informed routing decisions in real-time.
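A minimal sketch of the profiling step, assuming latency is the only metric being measured. The `profile_latency` helper and the `fake_call` stub are hypothetical names introduced for illustration; in practice `call_fn` would be the function that actually sends the request, and accuracy or creativity scores would need their own evaluation procedure.

```python
import time
from statistics import mean


def profile_latency(call_fn, model_names, prompts):
    """Return the mean wall-clock latency in seconds for each model.

    call_fn(model_name, prompt) is whatever function sends the request.
    """
    profile = {}
    for name in model_names:
        timings = []
        for prompt in prompts:
            start = time.perf_counter()
            call_fn(name, prompt)
            timings.append(time.perf_counter() - start)
        profile[name] = mean(timings)
    return profile


def fake_call(model_name, prompt):
    """Stand-in for a real API call (assumption: no network access here)."""
    return {"text": f"Response from {model_name}"}


latency = profile_latency(
    fake_call,
    ["GPT-4", "Gemini", "Claude"],
    ["Hello", "Summarize this paragraph"],
)
for name, seconds in latency.items():
    print(f"{name}: {seconds:.6f}s average latency")
```

The resulting dictionary can be refreshed periodically and merged into a profile table such as the one used in the routing example.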

Code Example: Model Profiling and Routing in Python

Here’s how you might implement a simple model-aware routing mechanism:

    # Profiles for each LLM (based on hypothetical metrics)
    model_profiles = {
        "GPT-4": {"speed": 50, "accuracy": 90, "creativity": 95, "ethics": 85},
        "Gemini": {"speed": 40, "accuracy": 95, "creativity": 85, "ethics": 80},
        "Claude": {"speed": 60, "accuracy": 85, "creativity": 80, "ethics": 95},
    }

    def call_llm(api_name, prompt):
        # Simulated function call; replace with actual implementation
        return {"text": f"Response from {api_name} for prompt: '{prompt}'"}

    def model_aware_routing(task_queue, priority="accuracy"):
        while task_queue:
            task = task_queue.pop(0)
            # Select the model with the highest score for the priority metric
            best_llm = max(model_profiles, key=lambda llm: model_profiles[llm][priority])
            response = call_llm(best_llm, task)
            print(f"{best_llm} (priority: {priority}) is processing task: {task}")
            print(f"Response: {response}")

    # Sample task queue
    tasks = [
        "Generate a creative story about a robot",
        "Provide an overview of the 2024 Olympics",
        "Discuss ethical considerations in AI development",
    ]

    # Model-aware routing with different priorities
    print("Model-Aware Routing (Prioritizing Accuracy):")
    model_aware_routing(tasks[:], priority="accuracy")

    print("\nModel-Aware Routing (Prioritizing Creativity):")
    model_aware_routing(tasks[:], priority="creativity")

In this example, model_aware_routing uses the predefined profiles to select the LLM with the highest score for the chosen priority, whether that is accuracy, creativity, or ethical handling. Note that the priority is passed once per call, so every task in the queue goes to the same model; routing each task individually requires deciding a priority per task, for example by classifying the task first.

Expected Output from Model-Aware Routing (Prioritizing Accuracy)

    Model-Aware Routing (Prioritizing Accuracy):
    Gemini (priority: accuracy) is processing task: Generate a creative story about a robot
    Response: {'text': "Response from Gemini for prompt: 'Generate a creative story about a robot'"}
    Gemini (priority: accuracy) is processing task: Provide an overview of the 2024 Olympics
    Response: {'text': "Response from Gemini for prompt: 'Provide an overview of the 2024 Olympics'"}
    Gemini (priority: accuracy) is processing task: Discuss ethical considerations in AI development
    Response: {'text': "Response from Gemini for prompt: 'Discuss ethical considerations in AI development'"}

Explanation: With accuracy as the priority, every task is routed to Gemini, because Gemini has the highest accuracy score (95) in the hypothetical profiles.

Expected Output from Model-Aware Routing (Prioritizing Creativity)

    Model-Aware Routing (Prioritizing Creativity):
    GPT-4 (priority: creativity) is processing task: Generate a creative story about a robot
    Response: {'text': "Response from GPT-4 for prompt: 'Generate a creative story about a robot'"}
    GPT-4 (priority: creativity) is processing task: Provide an overview of the 2024 Olympics
    Response: {'text': "Response from GPT-4 for prompt: 'Provide an overview of the 2024 Olympics'"}
    GPT-4 (priority: creativity) is processing task: Discuss ethical considerations in AI development
    Response: {'text': "Response from GPT-4 for prompt: 'Discuss ethical considerations in AI development'"}

Explanation: With creativity as the priority, every task is routed to GPT-4, because GPT-4 has the highest creativity score (95) in the hypothetical profiles.
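Because the priority in the example above is fixed for the whole queue, all tasks land on one model. The sketch below shows one way to choose a priority per task instead. The `PRIORITY_KEYWORDS` table and the `infer_priority` and `route` helpers are hypothetical, the keyword matching is deliberately naive, and the profile scores are the same made-up numbers as before; a production system would more likely use a trained classifier.

```python
# Same hypothetical profile scores as in the example above
model_profiles = {
    "GPT-4": {"speed": 50, "accuracy": 90, "creativity": 95, "ethics": 85},
    "Gemini": {"speed": 40, "accuracy": 95, "creativity": 85, "ethics": 80},
    "Claude": {"speed": 60, "accuracy": 85, "creativity": 80, "ethics": 95},
}

# Hypothetical keyword hints mapping words in the task to a priority metric
PRIORITY_KEYWORDS = {
    "creativity": {"story", "poem", "creative"},
    "ethics": {"ethical", "ethics", "sensitive"},
}


def infer_priority(task, default="accuracy"):
    """Guess which metric matters most for this task from its words."""
    tokens = set(task.lower().split())
    for priority, keywords in PRIORITY_KEYWORDS.items():
        if tokens & keywords:
            return priority
    return default


def route(task):
    """Return (model, priority) for a single task."""
    priority = infer_priority(task)
    best = max(model_profiles, key=lambda m: model_profiles[m][priority])
    return best, priority


for task in [
    "Generate a creative story about a robot",
    "Provide an overview of the 2024 Olympics",
    "Discuss ethical considerations in AI development",
]:
    model, priority = route(task)
    print(f"{model} (priority: {priority}) <- {task}")
```

With these profiles, the story goes to GPT-4, the overview to Gemini, and the ethics discussion to Claude, which matches the intent described at the start of this section.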

Implementing these strategies with real-world LLMs like GPT-4, Gemini, and Claude can enhance the scalability, efficiency, and reliability of AI systems by sending each task to a model well suited for it. The table below summarizes and compares the three approaches.

| Aspect | Static Routing | Dynamic Routing | Model-Aware Routing |
|---|---|---|---|
| Definition | Uses predefined rules to direct tasks. | Adapts routing decisions in real-time based on current conditions. | Routes tasks based on model capabilities and performance. |
| Implementation | Implemented through static configuration files or code. | Requires real-time monitoring systems and dynamic decision-making algorithms. | Involves integrating model performance metrics and routing logic based on those metrics. |
| Adaptability to Changes | Low; requires manual updates to rules. | High; adapts automatically to changes in conditions. | Moderate; adapts based on predefined model performance characteristics. |
| Complexity | Low; straightforward setup with static rules. | High; involves real-time system monitoring and complex decision algorithms. | Moderate; involves setting up model performance tracking and routing logic based on those metrics. |
| Scalability | Limited; may need extensive reconfiguration for scaling. | High; can scale efficiently by adjusting routing dynamically. | Moderate; scales by leveraging specific model strengths but may require adjustments as models change. |
| Resource Efficiency | Can be inefficient if rules are not well-aligned with system needs. | Typically efficient as routing adapts to optimize resource usage. | Efficient by leveraging the strengths of different models, potentially optimizing overall system performance. |
| Implementation Examples | Static rule-based systems for fixed tasks. | Load balancers with real-time traffic analysis and adjustments. | Model-specific routing algorithms based on performance metrics (e.g., task-specific model deployment). |

Implementation Techniques

In this section, we’ll delve into two advanced techniques for routing requests across multiple LLMs: hashing techniques and contextual routing. We’ll explore the underlying concepts and provide Python code examples to illustrate how these techniques can be implemented. As before, we’ll use GPT-4, Gemini, and Claude to demonstrate them.

Consistent Hashing Techniques for Routing

Hashing techniques, especially consistent hashing, are commonly used to distribute requests evenly across multiple models or servers. The idea is to map each incoming request to a specific model based on the hash of a key (like the task ID or input text). Consistent hashing helps maintain a balanced load across models, even when the number of models changes, by minimizing the need to remap existing requests.

Code Example: Implementation of Consistent Hashing

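Here’s a Python code example that distributes requests across GPT-4, Gemini, and Claude by hashing each task. For brevity it uses a simple hash-modulo scheme, which is deterministic but, unlike a full consistent-hash ring, remaps most tasks when the number of models changes.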

    import hashlib

    # Define the LLMs
    llms = ["GPT-4", "Gemini", "Claude"]

    # Map a key to a bucket index. This is plain hash-modulo: deterministic,
    # but not a full consistent-hash ring.
    def consistent_hash(key, num_buckets):
        hash_value = int(hashlib.sha256(key.encode("utf-8")).hexdigest(), 16)
        return hash_value % num_buckets

    # Route a task to an LLM using the hash of its text
    def route_task_with_hashing(task):
        model_index = consistent_hash(task, len(llms))
        selected_model = llms[model_index]
        print(f"{selected_model} is processing task: {task}")
        # Mock API call to the selected model
        return {"choices": [{"text": f"Response from {selected_model} for task: {task}"}]}

    # Example tasks
    tasks = [
        "Generate a creative story about a robot",
        "Provide an overview of the 2024 Olympics",
        "Discuss ethical considerations in AI development",
    ]

    # Routing tasks using hashing
    for task in tasks:
        response = route_task_with_hashing(task)
        print("Response:", response)

Expected Output

The output shows each task routed to a model determined by the hash of the task description. The particular assignment below is illustrative; the actual model for each task depends on the SHA-256 value of its text.

    GPT-4 is processing task: Generate a creative story about a robot
    Response: {'choices': [{'text': 'Response from GPT-4 for task: Generate a creative story about a robot'}]}
    Claude is processing task: Provide an overview of the 2024 Olympics
    Response: {'choices': [{'text': 'Response from Claude for task: Provide an overview of the 2024 Olympics'}]}
    Gemini is processing task: Discuss ethical considerations in AI development
    Response: {'choices': [{'text': 'Response from Gemini for task: Discuss ethical considerations in AI development'}]}

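Explanation: Each task is routed to the same model every time, as long as the list of available models doesn’t change, because the hash of a given task is always the same. With a plain hash-modulo mapping, adding or removing a model changes the bucket for most tasks; a consistent-hash ring limits remapping to roughly the tasks owned by the affected model.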
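For comparison, here is a minimal sketch of a consistent-hash ring with virtual nodes. The `HashRing` class, its method names, and the `replicas` parameter are hypothetical names chosen for this illustration, not part of any library. The demo at the end checks the defining property: when a model is removed, only the tasks that were assigned to it move.

```python
import bisect
import hashlib


def _hash(key):
    """Map a string to a large integer position on the ring."""
    return int(hashlib.sha256(key.encode("utf-8")).hexdigest(), 16)


class HashRing:
    """Consistent-hash ring; each node is placed at `replicas` positions."""

    def __init__(self, nodes, replicas=100):
        self.replicas = replicas
        self._points = []  # sorted ring positions
        self._owner = {}   # ring position -> node name
        for node in nodes:
            self.add(node)

    def add(self, node):
        for i in range(self.replicas):
            point = _hash(f"{node}#{i}")
            self._owner[point] = node
            bisect.insort(self._points, point)

    def remove(self, node):
        self._points = [p for p in self._points if self._owner[p] != node]
        self._owner = {p: n for p, n in self._owner.items() if n != node}

    def get(self, key):
        """Return the node owning the first ring position after the key's hash."""
        idx = bisect.bisect(self._points, _hash(key)) % len(self._points)
        return self._owner[self._points[idx]]


ring = HashRing(["GPT-4", "Gemini", "Claude"])
tasks = [f"task-{i}" for i in range(1000)]

before = {t: ring.get(t) for t in tasks}
ring.remove("Claude")
after = {t: ring.get(t) for t in tasks}

moved = [t for t in tasks if before[t] != after[t]]
print(f"{len(moved)} of {len(tasks)} tasks were remapped")
print("Only Claude's tasks moved:", all(before[t] == "Claude" for t in moved))
```

Tasks that were already on GPT-4 or Gemini keep their assignment, which is what makes this scheme attractive when models are added or retired.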

Contextual Routing

Contextual routing involves routing tasks to different LLMs based on the input context or metadata, such as language, topic, or the complexity of the request. This approach ensures that the system handles each task with the LLM best suited for the specific context, improving the quality and relevance of the responses.

Code Example: Implementation of Contextual Routing

Here’s a Python code example that uses metadata (e.g., topic) to route tasks to the most appropriate model among GPT-4, Gemini, and Claude.

    # Define the LLMs and their specialization
    llm_specializations = {
        "GPT-4": "complex_ethical_discussions",
        "Gemini": "overview_and_summaries",
        "Claude": "creative_storytelling",
    }

    # Function to route a task based on context
    def route_task_with_context(task, context):
        selected_model = None
        # Find the first model whose specialization matches the context
        for model, specialization in llm_specializations.items():
            if specialization == context:
                selected_model = model
                break

        if selected_model:
            print(f"{selected_model} is processing task: {task}")
            # Mock API call to the selected model
            return {"choices": [{"text": f"Response from {selected_model} for task: {task}"}]}
        else:
            print(f"No suitable model found for context: {context}")
            return {"choices": [{"text": "No suitable response available"}]}

    # Example tasks with context
    tasks_with_context = [
        ("Generate a creative story about a robot", "creative_storytelling"),
        ("Provide an overview of the 2024 Olympics", "overview_and_summaries"),
        ("Discuss ethical considerations in AI development", "complex_ethical_discussions"),
    ]

    # Routing tasks using contextual routing
    for task, context in tasks_with_context:
        response = route_task_with_context(task, context)
        print("Response:", response)

Expected Output

The output of this code will show that each task is routed to the model that specializes in the relevant context.

    Claude is processing task: Generate a creative story about a robot
    Response: {'choices': [{'text': 'Response from Claude for task: Generate a creative story about a robot'}]}
    Gemini is processing task: Provide an overview of the 2024 Olympics
    Response: {'choices': [{'text': 'Response from Gemini for task: Provide an overview of the 2024 Olympics'}]}
    GPT-4 is processing task: Discuss ethical considerations in AI development
    Response: {'choices': [{'text': 'Response from GPT-4 for task: Discuss ethical considerations in AI development'}]}

Explanation: The system routes each task to the LLM best suited for the specific type of content. For example, it directs creative tasks to Claude and complex ethical discussions to GPT-4. This method matches each request with the model most likely to provide the best response based on its specialization.

The table below summarizes and compares the two approaches.

| Aspect | Consistent Hashing | Contextual Routing |
|---|---|---|
| Definition | A technique for distributing tasks across a set of nodes based on hashing, which ensures minimal reorganization when nodes are added or removed. | A routing strategy that adapts based on the context or characteristics of the request, such as user behavior or request type. |
| Implementation | Uses hash functions to map tasks to nodes, often implemented in distributed systems and databases. | Utilizes contextual information (e.g., request metadata) to determine the optimal routing path, often implemented with machine learning or heuristic-based approaches. |
| Adaptability to Changes | Moderate; handles node changes gracefully but may require rehashing if the number of nodes changes significantly. | High; adapts in real-time to changes in the context or characteristics of the incoming requests. |
| Complexity | Moderate; involves managing a consistent hashing ring and handling node additions/removals. | High; requires maintaining and processing contextual information, and often involves complex algorithms or models. |
| Scalability | High; scales well as nodes are added or removed with minimal disruption. | Moderate to high; can scale based on the complexity of the contextual information and routing logic. |
| Resource Efficiency | Efficient in balancing loads and minimizing reorganization. | Potentially efficient; optimizes routing based on contextual information but may require additional resources for context processing. |
| Implementation Examples | Distributed hash tables (DHTs), distributed caching systems. | Adaptive load balancers, personalized recommendation systems. |

Load Balancing in LLM Routing

In LLM routing, load balancing plays a crucial role by distributing requests efficiently across multiple language models (LLMs). It helps avoid bottlenecks, minimize latency, and optimize resource utilization. This section explores common load-balancing algorithms and presents code examples that demonstrate how to implement these strategies.

Load Balancing Algorithms

Overview of Common Load Balancing Strategies:

- Weighted Round-Robin
  - Concept: Weighted round-robin is an extension of the basic round-robin algorithm. It assigns a weight to each server or model and sends more requests to models with higher weights. This approach is useful when some models have more capacity or are more efficient than others.
  - Application in LLM Routing: Weighted round-robin can balance the load across LLMs with different processing capabilities. For instance, a more capable model like GPT-4 might receive more requests than a lighter model like Gemini.

- Least Connections
  - Concept: The least connections algorithm routes requests to the model with the fewest active connections or tasks. This strategy is effective in environments where tasks vary significantly in execution time, helping to prevent overloading any single model.
  - Application in LLM Routing: Least connections can ensure that LLMs with lower workloads receive more tasks, maintaining an even distribution of processing across models.

- Adaptive Load Balancing
  - Concept: Adaptive load balancing dynamically adjusts the routing of requests based on real-time performance metrics such as response time, latency, or error rates. Models that are performing well receive more requests, while underperforming models are assigned fewer tasks, optimizing overall system efficiency.
  - Application in LLM Routing: In a customer support system with multiple LLMs, adaptive load balancing can route complex technical queries to GPT-4 if it shows the best performance metrics, while general inquiries might be directed to Gemini and creative requests to Claude. By continuously monitoring each LLM and adjusting its weight based on real-time performance, the system handles requests efficiently, reduces response times, and enhances overall user satisfaction.
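The three strategies above can each be sketched in a few lines. Everything here is illustrative: the function and class names, the weights, the starting latency of 1.0 second, and the smoothing factor `alpha` are assumptions, and the adaptive variant uses an exponentially weighted moving average of latency as one possible performance signal.

```python
import itertools


def weighted_round_robin(weights):
    """Cycle through models, each appearing `weight` times per cycle."""
    cycle = [name for name, weight in weights.items() for _ in range(weight)]
    return itertools.cycle(cycle)


class LeastConnections:
    """Track in-flight requests and pick the least-loaded model."""

    def __init__(self, models):
        self.active = {name: 0 for name in models}

    def acquire(self):
        name = min(self.active, key=self.active.get)
        self.active[name] += 1
        return name

    def release(self, name):
        self.active[name] -= 1


class AdaptiveBalancer:
    """Prefer the model with the lowest smoothed latency (EWMA)."""

    def __init__(self, models, alpha=0.3):
        self.alpha = alpha
        self.latency = {name: 1.0 for name in models}  # assumed starting value

    def record(self, name, seconds):
        old = self.latency[name]
        self.latency[name] = (1 - self.alpha) * old + self.alpha * seconds

    def pick(self):
        return min(self.latency, key=self.latency.get)


models = ["GPT-4", "Gemini", "Claude"]

# Weighted round-robin: GPT-4 receives 3 of every 6 requests
wrr = weighted_round_robin({"GPT-4": 3, "Gemini": 2, "Claude": 1})
print([next(wrr) for _ in range(6)])

# Least connections: a released model becomes eligible again
lc = LeastConnections(models)
first, second = lc.acquire(), lc.acquire()
lc.release(first)
third = lc.acquire()
print(first, second, third)

# Adaptive: one fast response makes Gemini the preferred model
ab = AdaptiveBalancer(models)
ab.record("Gemini", 0.2)
print(ab.pick())
```

In a real deployment the `record` and `release` calls would be driven by the completion of actual requests, and the three approaches can be combined, for example by using smoothed latency to set the weights of a weighted round-robin.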

Case Study: LLM Routing in a Multi-Model Environment

The following case study applies these ideas to a multi-model environment.

Problem Statement

In a multi-model environment, a company deploys several LLMs to handle diverse types of tasks. For example:

- GPT-4: Specializes in complex technical support and detailed analyses.

- Claude AI: Excels in creative writing and brainstorming sessions.

- Gemini: Effective for general information retrieval and summaries.

The challenge is to implement an effective routing strategy that leverages each model’s strengths, ensuring that each task is handled by the most suitable LLM based on its capabilities and current performance.

Routing Solution

To optimize performance, the company implemented a routing strategy that dynamically routes tasks based on the model’s specialization and current load. Here’s a high-level overview of the approach:

- Task Classification: Each incoming request is classified based on its nature (e.g., technical support, creative writing, general information).

- Performance Monitoring: Each LLM’s real-time performance metrics (e.g., response time and throughput) are continuously monitored.

- Dynamic Routing: Tasks are routed to the LLM best suited for the task’s nature and current performance metrics, using a combination of static rules and dynamic adjustments.

Code Example: Here’s a detailed code implementation demonstrating the routing strategy:

    import random
    import requests

    # Define LLM endpoints (placeholders; replace with your own)
    llm_endpoints = {
        "GPT-4": "https://api.example.com/gpt-4",
        "Claude AI": "https://api.example.com/claude",
        "Gemini": "https://api.example.com/gemini",
    }

    # Define model capabilities
    model_capabilities = {
        "GPT-4": "technical_support",
        "Claude AI": "creative_writing",
        "Gemini": "general_information",
    }

    # Function to classify tasks by keyword
    def classify_task(task):
        task = task.lower()  # make keyword matching case-insensitive
        if "technical" in task:
            return "technical_support"
        elif "creative" in task:
            return "creative_writing"
        else:
            return "general_information"

    # Function to route task based on classification and performance
    def route_task(task):
        task_type = classify_task(task)

        # Simulate performance metrics (lower is better)
        performance_metrics = {
            "GPT-4": random.uniform(0.1, 0.5),
            "Claude AI": random.uniform(0.2, 0.6),
            "Gemini": random.uniform(0.3, 0.7),
        }

        # Among models whose capability matches, pick the best-scoring one
        best_model = None
        best_score = float("inf")
        for model, capability in model_capabilities.items():
            if capability == task_type:
                score = performance_metrics[model]
                if score < best_score:
                    best_score = score
                    best_model = model

        if best_model:
            # Call the selected model's endpoint (fails until real endpoints are configured)
            response = requests.post(llm_endpoints[best_model], json={"task": task})
            print(f"Task '{task}' routed to {best_model}")
            print("Response:", response.json())
        else:
            print("No suitable model found for task:", task)

    # Example tasks
    tasks = [
        "Resolve a technical issue with the server",
        "Write a creative story about a dragon",
        "Summarize the latest news in technology",
    ]

    # Routing tasks
    for task in tasks:
        route_task(task)

Expected Output

With working endpoints in place, the output shows which model was selected for each task based on its classification and the simulated performance metrics. Note: The API endpoints in the code are placeholders; replace them with your own before running it.

    Task 'Resolve a technical issue with the server' routed to GPT-4
    Response: {'text': 'Response from GPT-4 for task: Resolve a technical issue with the server'}
    Task 'Write a creative story about a dragon' routed to Claude AI
    Response: {'text': 'Response from Claude AI for task: Write a creative story about a dragon'}
    Task 'Summarize the latest news in technology' routed to Gemini
    Response: {'text': 'Response from Gemini for task: Summarize the latest news in technology'}

Explanation of Output:

- Routing Decision: Each task is routed according to its classification: technical tasks go to GPT-4, creative tasks to Claude AI, and general inquiries to Gemini.

- Performance Consideration: The performance score is used to choose among models that share a capability. In this example each capability maps to a single model, so the simulated metrics do not change the outcome; they start to matter once several models can serve the same task type.

This case study highlights how dynamic routing based on task classification and real-time performance can effectively leverage multiple LLMs to deliver optimal results in a multi-model environment.
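To show performance metrics actually influencing the decision, the sketch below lets several models serve the same task type and skips any model that is currently too slow. The `CANDIDATES` mapping, the `max_latency_s` threshold, and the latency values are hypothetical; the classifier is the same keyword check used in the case study.

```python
# Hypothetical mapping: several models may serve the same task type
CANDIDATES = {
    "technical_support": ["GPT-4", "Claude AI"],
    "creative_writing": ["Claude AI", "GPT-4"],
    "general_information": ["Gemini", "GPT-4"],
}


def classify_task(task):
    """Keyword-based classification, as in the case study."""
    lowered = task.lower()
    if "technical" in lowered:
        return "technical_support"
    if "creative" in lowered:
        return "creative_writing"
    return "general_information"


def route_task(task, latency_s, max_latency_s=2.0):
    """Pick the fastest healthy candidate for the task type.

    latency_s maps model name -> recent latency in seconds (assumed to come
    from monitoring). Models slower than max_latency_s are skipped.
    Returns None when no candidate is healthy.
    """
    candidates = CANDIDATES[classify_task(task)]
    healthy = [m for m in candidates if latency_s.get(m, float("inf")) <= max_latency_s]
    if not healthy:
        return None
    return min(healthy, key=lambda m: latency_s[m])


# Made-up snapshot in which Gemini is currently slow
latency_s = {"GPT-4": 0.4, "Claude AI": 0.3, "Gemini": 5.0}

for task in [
    "Resolve a technical issue with the server",
    "Write a creative story about a dragon",
    "Summarize the latest news in technology",
]:
    print(f"{task!r} -> {route_task(task, latency_s)}")
```

In this snapshot the summary task falls back to GPT-4 because Gemini exceeds the latency threshold, which is the kind of adjustment a single-model-per-capability mapping cannot make.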

Conclusion

Efficient routing of large language models (LLMs) is crucial for optimizing performance and achieving better results across various applications. By employing strategies such as static, dynamic, and model-aware routing, systems can leverage the unique strengths of different models to effectively meet diverse needs. Advanced techniques like consistent hashing and contextual routing further enhance the precision and balance of task distribution. Implementing robust load balancing mechanisms ensures that resources are utilized efficiently, preventing bottlenecks and maintaining high throughput.

As LLMs continue to evolve, the ability to route tasks intelligently will become increasingly important for harnessing their full potential. By understanding and applying these routing strategies, organizations can achieve greater efficiency, accuracy, and application performance.

Key Takeaways

- LLM routing distributes tasks to models based on their strengths, which enhances performance and efficiency.

- Static routing uses fixed rules for task distribution; it is straightforward but may lack adaptability.

- Dynamic routing adapts to real-time conditions and task requirements, improving overall system flexibility.

- Model-aware routing considers model-specific characteristics to optimize task assignment based on priorities like accuracy or creativity.

- Advanced techniques such as consistent hashing and contextual routing offer sophisticated approaches for balancing and directing tasks.

- Effective load-balancing strategies prevent bottlenecks and ensure optimal use of resources across multiple LLMs.

Frequently Asked Questions

Q1. What is LLM routing, and why is it important?

A. LLM routing refers to the process of directing tasks or queries to specific large language models (LLMs) based on their strengths and characteristics. It is important because it helps optimize performance, resource utilization, and efficiency by leveraging the unique capabilities of different models to handle various tasks effectively.

Q2. What are the main types of LLM routing?

A. Static Routing: Assigns tasks to specific models based on predefined rules or criteria.

Dynamic Routing: Adjusts task distribution in real-time based on current system conditions or task requirements.

Model-Aware Routing: Chooses models based on their specific characteristics and capabilities, such as accuracy or creativity.

Q3. How does dynamic routing differ from static routing?

A. Dynamic routing adjusts the task distribution in real-time based on current conditions or changing requirements, making it more adaptable and responsive. In contrast, static routing relies on fixed rules, which may not be as flexible in handling varying task needs or system states.

Q4. What are the benefits of model-aware routing?

A. Model-aware routing optimizes task assignment by considering each model’s unique strengths and characteristics. This approach ensures that tasks are handled by the most suitable model, which can lead to improved performance, accuracy, and efficiency.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.